-

H3C UniServer R5300 G6: The Definitive 4U AI Server for Enterprise Workloads USD35,000

-

NVIDIA H100 80GB PCIe Tensor Core GPU

USD28,000Original price was: USD28,000.USD24,500Current price is: USD24,500. -

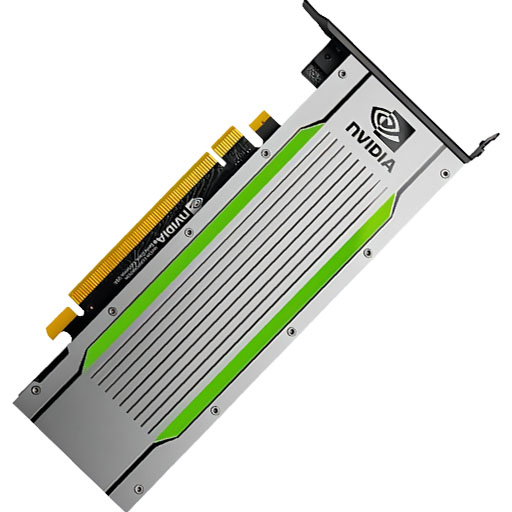

NVIDIA T4 Tensor Core GPU: The Smart Choice for AI Inference and Data Center Workloads USD950

-

Cisco B22HP Expansion Module - 16 Ports USD180

-

NVIDIA A100 40GB Tensor Core GPU: Complete Professional Guide

Rated 4.67 out of 5USD14,500Original price was: USD14,500.USD12,800Current price is: USD12,800. -

HGX H100 Optimized X13 8U 8GPU Server: The Ultimate AI and HPC Powerhouse for Exascale Computing USD300,000

Products Mentioned in This Article

NVIDIA Quantum-2 QM9700USD21,000

NVIDIA H100 NVL GPU

USD33,000Original price was: USD33,000.USD30,500Current price is: USD30,500.NVIDIA T4 Tensor Core GPU: The Smart Choice for AI Inference and Data Center WorkloadsUSD950

NVIDIA L40S

USD11,500Original price was: USD11,500.USD10,500Current price is: USD10,500.HGX H100 Optimized X13 8U 8GPU Server: The Ultimate AI and HPC Powerhouse for Exascale ComputingUSD300,000

How to Configure a Multi-GPU Training Server for Deep Learning

Author: ITCT Tech Editorial Unit

Reviewed By: ITCT Infrastructure & Solutions Team

Last Updated: January 12, 2026

Reading Time: 20 minutes

References:

- NVIDIA HGX & DGX Platform Architecture Docs

- PyTorch DistributedDataParallel (DDP) Documentation

- TensorFlow Distributed Training Guide

- Microsoft DeepSpeed (ZeRO) Optimization Whitepapers

- H3C S9855 Series Switch Specifications

Quick Answer: Summary

A multi-GPU training server is a specialized high-performance computing environment designed to parallelize deep learning workloads, reducing model training time from weeks to days or hours. By integrating 4 to 8+ enterprise-grade GPUs (such as NVIDIA H100 or H200) with high-bandwidth interconnects like NVLink, these systems handle the massive parameter counts of Large Language Models (LLMs) and computer vision systems. The architecture typically relies on either modular NVIDIA HGX platforms for customization or turnkey NVIDIA DGX systems for immediate deployment, supported by robust power (up to 10 kW per server, 30-40 kW per rack) and cooling infrastructure.

Key Takeaways & Decision Guide

For organizations prioritizing flexibility and custom data center integration, HGX-based configurations are the superior choice, allowing for specific chassis and cooling customizations. Conversely, NVIDIA DGX systems are recommended for teams requiring rapid deployment with a validated software stack. Critical prerequisites include a power infrastructure capable of supporting 3-phase distribution, high-throughput storage (NVMe), and specialized networking (RoCE v2 or InfiniBand) to prevent communication bottlenecks between GPUs. Do not attempt large-scale distributed training without first validating facility cooling capacity (approx. 1.5kW cooling per 1kW server power).

Configuring a multi-GPU training server represents a critical investment for organizations pursuing advanced artificial intelligence and deep learning capabilities. As deep learning models grow increasingly complex, requiring billions of parameters and massive datasets, single-GPU configurations no longer suffice for efficient training workflows. Multi-GPU server architectures deliver the computational horsepower necessary to train large language models, computer vision systems, and other resource-intensive AI applications.

This comprehensive guide explores every aspect of multi-GPU training server configuration, from hardware component selection through software optimization. Whether you’re building an on-premise data center or upgrading existing infrastructure, understanding these fundamental principles ensures maximum return on investment and optimal training performance.

Understanding Multi-GPU Server Architectures

What is a Multi-GPU Training Server?

A multi-GPU training server integrates multiple graphics processing units within a single system or cluster to parallelize deep learning computations. Unlike consumer workstations that typically support 1-2 GPUs, enterprise training servers accommodate 4-8 GPUs or more, connected through high-bandwidth interconnects.

The primary advantage lies in distributed data parallelism, where training batches are split across multiple GPUs, dramatically reducing training time from weeks to days or hours. Modern architectures like NVIDIA HGX platforms provide tightly-coupled GPU clusters with specialized interconnects designed specifically for AI workloads.

HGX vs DGX Platform Comparison

When selecting enterprise GPU infrastructure, understanding the distinction between NVIDIA HGX and DGX platforms is essential.

NVIDIA HGX represents a modular platform design that OEMs integrate into custom server configurations. The HGX baseboard contains 4 or 8 GPUs connected via NVLink; the OEM supplies the CPUs, memory, storage, and networking components around it. This flexibility allows manufacturers to customize chassis design, cooling solutions, and additional features while maintaining the high-performance GPU cluster at the core.

NVIDIA DGX systems are turnkey AI supercomputers engineered and supported directly by NVIDIA. These fully-integrated solutions include optimized hardware, pre-installed software stacks, and enterprise support. The NVIDIA DGX H200 exemplifies this approach, delivering 8 H200 GPUs with 1,128 GB total GPU memory in a validated, production-ready configuration.

| Feature | NVIDIA HGX Platform | NVIDIA DGX Systems |
|---|---|---|
| Configuration | Modular baseboard integrated by OEMs | Turnkey complete system |
| Customization | High – OEMs customize chassis, cooling, features | Limited – standardized configuration |
| GPU Options | H100, H200, B200 configurations | H100, H200, B200, GB200 |
| Software Stack | Linux OS with customer-installed frameworks | DGX OS with pre-configured AI software |
| Support Model | OEM provides hardware support | NVIDIA enterprise support included |
| Price Point | Varies by OEM implementation | Premium pricing for integrated solution |
| Ideal Use Case | Custom data center integration | Rapid deployment with minimal setup |

Organizations requiring maximum flexibility often choose HGX-based servers, while those prioritizing rapid deployment and NVIDIA support opt for DGX platforms.

Hardware Component Selection

GPU Selection Criteria

Selecting appropriate GPUs represents the most critical hardware decision for deep learning servers. Modern AI accelerators vary dramatically in memory capacity, compute performance, interconnect bandwidth, and power requirements.

Memory Capacity: Deep learning models with billions of parameters require substantial GPU memory. The NVIDIA H200 provides 141GB HBM3e per GPU, allowing larger batch sizes and models compared to earlier generations. For transformer models and large language models, high memory capacity directly translates to training efficiency.

Compute Performance: Tensor cores specialized for matrix operations deliver the primary computational horsepower. H100 SXM GPUs provide up to roughly 4,000 TFLOPS of FP8 Tensor Core performance with structured sparsity (about half that for dense math), while H200 maintains this compute performance with larger, higher-bandwidth memory.

Interconnect Technology: GPU-to-GPU communication bandwidth determines scaling efficiency. PCIe connections provide up to 128 GB/s bidirectional bandwidth with Gen 5, while NVLink delivers up to 900 GB/s aggregate bandwidth across multiple links, dramatically reducing communication bottlenecks during distributed training.

Power and Cooling: High-end GPUs like H100 SXM5 consume up to 700W per GPU. An 8-GPU configuration requires 5.6 kW for GPUs alone, plus CPU and system power. Data center planning must account for 10-15 kW total system power and corresponding cooling capacity.

Recommended GPU Configurations

| GPU Model | Memory | Peak FP8 Tensor Performance (vendor figures, with sparsity) | Ideal Workload | Price |
|---|---|---|---|---|
| NVIDIA L40S | 48GB GDDR6 | 1,466 TFLOPS | Computer vision, inference | $10,500 |
| NVIDIA H100 NVL | 94GB HBM3 | 4,000 TFLOPS | Large model training | $30,500 |
| NVIDIA H200 | 141GB HBM3e | 4,000 TFLOPS | LLM training, inference | $31,000 |
| NVIDIA H100 SXM5 | 80GB HBM3 | 4,000 TFLOPS | Distributed training | Contact for pricing |

CPU and Motherboard Requirements

While GPUs provide the computational power, the CPU subsystem manages data orchestration, preprocessing, and system operations. Multi-GPU servers require CPUs with sufficient PCIe lanes, memory channels, and processing capability to avoid bottlenecking GPU operations.

PCIe Lane Requirements: Each GPU requires 16 PCIe lanes for optimal bandwidth. An 8-GPU configuration demands 128 PCIe lanes, necessitating dual-socket CPU configurations. Intel Xeon Scalable processors (Sapphire Rapids) and AMD EPYC processors (Genoa) provide the necessary PCIe Gen 5 connectivity.

Memory Configuration: System RAM should be roughly 2-4x total GPU memory to accommodate data preprocessing, buffering, and system operations. For an 8x H200 configuration with 1,128 GB of GPU memory, that rule gives about 2.3-4.5TB; 2TB of DDR5 system RAM (just under 2x) represents the practical minimum.

CPU Core Count: While deep learning training primarily utilizes GPUs, preprocessing operations benefit from higher core counts. Processors with 32-64 cores per socket provide adequate capacity for data augmentation, decompression, and multi-threaded operations.
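The PCIe lane and system RAM rules of thumb above can be checked with a few lines of arithmetic. The following is a minimal sketch, not a sizing tool: the function name `host_sizing` and its parameters are hypothetical, and it counts only GPU lanes (NICs and NVMe drives need additional lanes).

```python
def host_sizing(gpu_count, gpu_memory_gb, lanes_per_gpu=16,
                ram_multiplier_low=2, ram_multiplier_high=4):
    """Apply the 16-lanes-per-GPU and 2-4x RAM rules of thumb to one server."""
    total_gpu_memory_gb = gpu_count * gpu_memory_gb
    return {
        # Lanes needed by the GPUs alone; NICs and NVMe are not included.
        "gpu_pcie_lanes": gpu_count * lanes_per_gpu,
        "total_gpu_memory_gb": total_gpu_memory_gb,
        "ram_low_gb": total_gpu_memory_gb * ram_multiplier_low,
        "ram_high_gb": total_gpu_memory_gb * ram_multiplier_high,
    }


# 8x H200 with 141 GB each, as in the example above.
sizing = host_sizing(gpu_count=8, gpu_memory_gb=141)
for key, value in sizing.items():
    print(f"{key}: {value}")
```

For the 8x H200 case this gives 128 GPU lanes and a 2,256-4,512 GB RAM range, which is why a 2TB configuration sits at the low end of the guideline.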

Storage Subsystem Architecture

Deep learning training requires high-throughput storage to feed data to multiple GPUs without creating I/O bottlenecks. Modern NVMe storage delivers the necessary bandwidth for large-scale training datasets.

Local NVMe Storage: PCIe Gen 4/5 NVMe drives provide 7-14 GB/s sequential read bandwidth. Multiple drives in RAID 0 configuration can deliver 50+ GB/s aggregate throughput, sufficient for most training workloads. A typical configuration includes 4-8x 7.68TB enterprise NVMe drives.

Network-Attached Storage: Large organizations often centralize datasets on high-performance NAS systems accessed via RDMA-capable networks. This approach allows dataset sharing across multiple training servers while maintaining low latency access.

Storage Capacity Planning: Training datasets for computer vision and NLP applications can exceed 100TB. Allocate 30-50TB local storage per server for working datasets, with additional network storage for archival and dataset repositories.

Networking Infrastructure Configuration

High-Speed Interconnects for Multi-GPU Communication

Network architecture dramatically impacts multi-GPU training performance, particularly for distributed training across multiple nodes. Two primary networking technologies dominate the enterprise AI space: RDMA over Converged Ethernet (RoCE) and InfiniBand.

RoCE v2 Networking: Remote Direct Memory Access over Ethernet provides low-latency, high-bandwidth GPU-to-GPU communication using standard Ethernet infrastructure. Modern 400GbE switches support RoCE v2, enabling cost-effective scaling of AI infrastructure.

The H3C S9855 Series represents a purpose-built RoCEv2 switch designed for AI workloads. With 32 ports of 400GbE connectivity and hardware-accelerated Priority-based Flow Control (PFC), these switches deliver the consistent, low-latency performance required for efficient distributed training.

InfiniBand Architecture: InfiniBand provides the highest performance interconnect for AI clusters, with NDR InfiniBand delivering 400Gb/s per port and ultra-low latency. The NVIDIA Quantum-2 QM9790 offers 64 ports of 400Gb/s InfiniBand connectivity, enabling massive-scale AI deployments with optimal GPU utilization.

Network Topology Design

Single-Server Configuration: Servers with 8 or fewer GPUs typically rely on NVLink for intra-server GPU communication, requiring only standard Ethernet for management and inter-server communication. A dual 100GbE connection provides adequate bandwidth for parameter synchronization in data-parallel training.

Multi-Server Clusters: Training clusters with 16-128 GPUs benefit from dedicated high-speed fabric. Fat-tree or rail-optimized topologies ensure non-blocking communication paths between any GPU pair. Each server connects to the fabric with 2-8x 200/400GbE or InfiniBand links.

Spine-Leaf Architecture: Large-scale deployments (256+ GPUs) implement spine-leaf topologies with oversubscription ratios of 1:1 to 2:1. This architecture provides predictable latency and bandwidth regardless of source-destination GPU pairs.
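An oversubscription ratio is simply the server-facing bandwidth of a leaf switch divided by its spine-facing bandwidth. The sketch below illustrates the calculation; the port splits are hypothetical examples, not a recommended design.

```python
from fractions import Fraction


def oversubscription_ratio(downlink_ports, downlink_gbps, uplink_ports, uplink_gbps):
    """Return server-facing bandwidth divided by spine-facing bandwidth for one leaf."""
    downlink_total = downlink_ports * downlink_gbps
    uplink_total = uplink_ports * uplink_gbps
    return Fraction(downlink_total, uplink_total)


# Hypothetical leaf with equal 400GbE capacity toward servers and spines (non-blocking).
non_blocking = oversubscription_ratio(16, 400, 16, 400)
# Hypothetical leaf with twice as much server-facing capacity as uplink capacity.
oversubscribed = oversubscription_ratio(24, 400, 12, 400)

for name, ratio in [("non-blocking", non_blocking), ("oversubscribed", oversubscribed)]:
    print(f"{name}: {ratio.numerator}:{ratio.denominator}")
```

A 1:1 result means any set of servers can send at line rate through the spines simultaneously; at 2:1, sustained all-to-all traffic can see at most half of the link rate.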

| Network Technology | Bandwidth per Port | Latency | Typical Use Case | Cost Factor |
|---|---|---|---|---|
| 100GbE Ethernet | 100 Gb/s | 5-10 microseconds | Management, small clusters | 1x |
| 200GbE RoCE v2 | 200 Gb/s | 2-5 microseconds | Medium AI clusters | 2x |
| 400GbE RoCE v2 | 400 Gb/s | 2-5 microseconds | Large AI clusters | 3x |
| 400Gb InfiniBand | 400 Gb/s | 1-2 microseconds | Premium AI infrastructure | 4x |

Network Adapter Configuration

Each training server requires multiple network interfaces for different functions:

Management Network: 1GbE or 10GbE connection for remote management, monitoring, and administrative access.

Storage Network: 25-100GbE connections for accessing centralized storage systems, separate from training fabric to avoid congestion.

Training Fabric: 2-8x 200/400GbE or InfiniBand NICs connected to the high-speed AI network fabric. NVIDIA ConnectX-7 adapters provide optimal performance for distributed training workloads.

Power and Cooling Infrastructure

Power Requirements and Distribution

Enterprise GPU servers demand substantial electrical infrastructure. Proper power planning prevents unexpected capacity constraints as training clusters scale.

Per-Server Power Consumption:

- 8x H100 SXM5 GPUs: 5,600W

- Dual CPUs: 700-1,000W

- System components (memory, storage, fans): 500-800W

- Total system power: 7,000-8,000W typical, 10,000W maximum
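The per-server figures above can be summed directly. This is a minimal sketch using the component ranges from the list; the function name is hypothetical. The plain component sum lands slightly below the quoted typical range, which presumably also covers power-supply conversion losses and headroom that this sketch does not model.

```python
def server_power_range_w(gpu_count, gpu_watts, cpu_range_w, other_range_w):
    """Sum GPU, CPU, and other component power into a (gpu_total, low, high) tuple in watts."""
    gpu_total = gpu_count * gpu_watts
    low = gpu_total + cpu_range_w[0] + other_range_w[0]
    high = gpu_total + cpu_range_w[1] + other_range_w[1]
    return gpu_total, low, high


# 8x H100 SXM5 at 700W, dual CPUs at 700-1,000W, other components at 500-800W.
gpu_total, low, high = server_power_range_w(8, 700, (700, 1000), (500, 800))
print(f"GPUs alone: {gpu_total} W")
print(f"Component sum: {low}-{high} W")
```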

Power Distribution Strategy: High-density GPU servers require 3-phase 208/240V power distribution. Each server typically connects with 2-4x C19 power connectors, enabling redundant power supplies for reliability.

Rack-Level Planning: A standard 42U rack can accommodate 3-4 high-density GPU servers, consuming 30-40 kW total power. Data centers must provide adequate circuit capacity and PDU infrastructure to support this density.

Cooling System Design

Heat dissipation represents a critical challenge for multi-GPU configurations. Modern data center cooling approaches include air cooling, liquid cooling, and hybrid solutions.

Air Cooling Requirements: Traditional air-cooled GPU servers require substantial airflow. Front-to-back cooling designs demand cold aisle temperatures of 18-22 C and hot aisle exhaust reaching 40-50 C. High-CFM server fans generate significant acoustic noise, requiring isolated machine rooms.

Direct Liquid Cooling: Cold plates mounted directly on GPUs and CPUs remove heat more efficiently than air cooling. Liquid cooling can reduce facility cooling energy by 30-40% by reducing reliance on energy-intensive CRAC units. NVIDIA HGX platforms offer both air-cooled and liquid-cooled variants.

Rear-Door Heat Exchangers: Hybrid solutions use rear-door water-cooled heat exchangers to capture heat from air-cooled servers. This approach provides cooling benefits without requiring liquid plumbing to individual servers.

Cooling Capacity Planning: Budget 1.3-1.5 kW of cooling capacity per 1 kW of server power consumption when accounting for facility losses. A rack of 4x 8-GPU servers drawing a typical 8 kW each (32 kW) requires roughly 42-48 kW of cooling capacity; at the 10 kW maximum per server, plan for 52-60 kW.
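The cooling budget follows from rack power multiplied by the 1.3-1.5 planning factor. A minimal sketch of that arithmetic, assuming a hypothetical helper name and the per-server power figures quoted earlier in this guide:

```python
def cooling_capacity_kw(servers_per_rack, server_kw, factor_low=1.3, factor_high=1.5):
    """Return (rack_kw, cooling_low_kw, cooling_high_kw) for one rack."""
    rack_kw = servers_per_rack * server_kw
    return rack_kw, rack_kw * factor_low, rack_kw * factor_high


# Four 8-GPU servers per rack at typical (8 kW) and maximum (10 kW) draw.
for label, server_kw in [("typical", 8), ("maximum", 10)]:
    rack_kw, cool_low, cool_high = cooling_capacity_kw(4, server_kw)
    print(f"{label}: rack {rack_kw} kW -> cooling {cool_low:.1f}-{cool_high:.1f} kW")
```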

NVIDIA DGX H200 (AI Supercomputer – 8× H200 SXM5 GPUs, 2× Intel Xeon 64C, 2TB DDR5, 30TB NVMe)

| Component | Specification | Details |
|---|---|---|
| GPU | 8× NVIDIA H200 SXM5 | Hopper architecture with Tensor Cores |
| GPU Memory (Each) | 141GB HBM3e | 4.8TB/s bandwidth per GPU |
| Total GPU Memory | 1,128GB (1.1TB) | Aggregate across all 8 GPUs |
| GPU Interconnect | NVLink 4.0 + NVSwitch | 900GB/s bidirectional per GPU |
| Total NVLink Bandwidth | 7.2TB/s | All-to-all non-blocking fabric |
| FP8 Performance | 32 petaFLOPS | Peak Tensor Core throughput |
| FP16 Performance | 16 petaFLOPS | Mixed precision training |
| TF32 Performance | 8 petaFLOPS | TensorFloat-32 Tensor Core throughput |
| CPU | 2× Intel Xeon Platinum 8592+ | 64 cores each (128 total) |
| CPU Cores/Threads | 128 cores / 256 threads | 2.0GHz base, up to 3.8GHz boost |

Software Stack Installation

Operating System Selection and Configuration

Linux distributions dominate the deep learning server landscape due to superior driver support, performance, and ecosystem integration. Ubuntu Server LTS and Red Hat Enterprise Linux represent the most common choices.

Ubuntu Server 22.04 LTS: Provides excellent out-of-box hardware support and straightforward CUDA installation. Ubuntu’s extensive package repositories and community support make it ideal for research environments and startups.

Red Hat Enterprise Linux 9: Offers enterprise-grade stability, security, and support for production AI infrastructure. RHEL’s subscription model includes certified hardware support and long-term maintenance.

Operating System Configuration Best Practices:

- Disable desktop environments to minimize resource consumption

- Configure automatic security updates for system stability

- Enable persistent logging for troubleshooting distributed training issues

- Set up remote management via SSH with key-based authentication

- Configure network time synchronization for distributed training coordination

CUDA and GPU Driver Installation

NVIDIA CUDA toolkit and GPU drivers form the foundation of the deep learning software stack. Proper installation ensures optimal GPU performance and compatibility with deep learning frameworks.

Driver Installation Process:

- Add NVIDIA package repositories to system sources

- Remove existing NVIDIA drivers to prevent conflicts

- Install CUDA toolkit (includes compatible driver version)

- Verify installation with nvidia-smi command

- Configure persistence daemon for GPU initialization

CUDA Version Selection: Match the CUDA version to deep learning framework requirements. Official PyTorch 2.1 binaries are built against CUDA 11.8 or 12.1, while TensorFlow 2.15 is built against CUDA 12.2. Installing multiple CUDA versions side by side enables framework flexibility.

Driver Persistence: Enable nvidia-persistenced to maintain GPU initialization state, reducing latency for distributed training job launches.

Deep Learning Framework Installation

PyTorch and TensorFlow represent the dominant frameworks for deep learning development. Both support multi-GPU training with relatively straightforward configuration.

PyTorch Installation and Configuration:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121

PyTorch’s DistributedDataParallel (DDP) module provides efficient multi-GPU training with minimal code changes. DDP automatically handles gradient synchronization and parameter updates across GPUs.

TensorFlow Installation and Configuration:

pip install "tensorflow[and-cuda]"

TensorFlow’s MirroredStrategy enables synchronous multi-GPU training on single nodes, while MultiWorkerMirroredStrategy supports distributed training across multiple servers.

Container-Based Deployment: NVIDIA NGC containers provide pre-configured environments with optimized framework builds, CUDA libraries, and performance tuning. Docker or Singularity containers ensure consistent environments across development and production systems.

Container Orchestration with Kubernetes

Production AI infrastructure increasingly relies on Kubernetes for workload orchestration, resource allocation, and cluster management. The NVIDIA GPU Operator simplifies GPU support in Kubernetes clusters.

GPU Operator Installation: The GPU Operator automatically installs and configures NVIDIA drivers, container runtime, and device plugins across Kubernetes nodes. This approach eliminates manual driver installation on each node.

Resource Scheduling: Kubernetes GPU scheduling allows fine-grained resource allocation. Jobs can request specific GPU counts, memory requirements, and GPU types, enabling efficient cluster utilization.

Multi-Tenancy Support: Kubernetes namespaces and resource quotas enable multiple teams to share GPU infrastructure safely, with isolation and fair resource allocation.

Multi-GPU Training Configuration

Data Parallelism Strategies

Data parallelism represents the most common approach to multi-GPU training, distributing mini-batches across GPUs while maintaining synchronized model parameters.

Synchronous Data Parallelism: All GPUs process different data batches simultaneously, then synchronize gradients before parameter updates. This approach maintains training determinism but requires careful batch size tuning to maintain convergence characteristics.

Gradient Accumulation: When memory constraints prevent large batch sizes, gradient accumulation simulates larger batches by accumulating gradients over multiple forward-backward passes before parameter updates.
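To see why gradient accumulation reproduces a larger batch, the sketch below uses a deliberately tiny one-parameter model in pure Python (no framework) and compares the full-batch gradient with the sum of scaled micro-batch gradients. The model, data, and function names are illustrative assumptions; the equivalence holds exactly only when all micro-batches have the same size.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean squared error with respect to w for the model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Sum micro-batch gradients, each scaled by 1/steps, before a single update."""
    if len(xs) % micro_batch_size != 0:
        raise ValueError("batch size must be a multiple of micro_batch_size")
    steps = len(xs) // micro_batch_size
    total = 0.0
    for i in range(steps):
        start, stop = i * micro_batch_size, (i + 1) * micro_batch_size
        total += mse_grad(w, xs[start:stop], ys[start:stop]) / steps
    return total


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]  # synthetic targets from y = 2x
w = 0.5

full = mse_grad(w, xs, ys)
accum = accumulated_grad(w, xs, ys, micro_batch_size=2)
print(f"full-batch gradient:  {full}")
print(f"accumulated gradient: {accum}")
```

In a real framework the same idea appears as dividing each micro-batch loss by the number of accumulation steps and calling the optimizer step only after the last micro-batch.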

Mixed Precision Training: FP16 or BF16 mixed precision reduces memory consumption and accelerates training on modern GPUs with tensor cores. Automatic mixed precision (AMP) libraries handle precision conversion automatically.

Distributed Training Configuration

Distributed training extends multi-GPU approaches across multiple servers, enabling training of extremely large models and datasets.

PyTorch Distributed Launch:

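PyTorch provides torchrun (the successor to the deprecated torch.distributed.launch) for spawning distributed training processes across multiple GPUs and nodes. Configuration requires specifying the master node address plus the number of nodes and processes per node, which together determine the world size (typically the total GPU count). Each spawned process reads its local rank (GPU index on its node) from the environment.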
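PyTorch launchers such as torchrun pass the master address, world size, and ranks to each process through environment variables (`RANK`, `LOCAL_RANK`, `WORLD_SIZE`, `MASTER_ADDR`, `MASTER_PORT`). The sketch below reads them using only the standard library, so it runs without PyTorch installed; the `DistEnv` class, the `read_dist_env` helper, and the example address are hypothetical, and the single-process defaults are an assumption for local testing.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class DistEnv:
    rank: int          # global process index across all nodes
    local_rank: int    # process (GPU) index on this node
    world_size: int    # total number of processes
    master_addr: str
    master_port: int


def read_dist_env(env=None):
    """Read the launcher-provided variables, falling back to single-process defaults."""
    env = os.environ if env is None else env
    return DistEnv(
        rank=int(env.get("RANK", 0)),
        local_rank=int(env.get("LOCAL_RANK", 0)),
        world_size=int(env.get("WORLD_SIZE", 1)),
        master_addr=env.get("MASTER_ADDR", "127.0.0.1"),
        master_port=int(env.get("MASTER_PORT", 29500)),
    )


# Example: the second GPU on the second node of a 2-node, 8-GPU-per-node job.
example = read_dist_env({
    "RANK": "9", "LOCAL_RANK": "1", "WORLD_SIZE": "16",
    "MASTER_ADDR": "10.0.0.1", "MASTER_PORT": "29500",
})
print(example)
```

A training script would typically use `local_rank` to select its CUDA device before initializing the process group.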

TensorFlow MultiWorkerMirroredStrategy:

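TensorFlow’s strategy automatically distributes computation across workers when the TF_CONFIG environment variable specifies the cluster configuration. The framework handles gradient synchronization transparently; recovering from worker failures additionally requires checkpointing, for example via the BackupAndRestore callback.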
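For TensorFlow, the cluster configuration is usually supplied as a JSON document in the `TF_CONFIG` environment variable, with a `cluster` section listing worker addresses and a `task` section identifying the current worker. The sketch below builds that JSON with the standard library only; the host names, port, and the `build_tf_config` helper are hypothetical.

```python
import json


def build_tf_config(worker_hosts, task_index):
    """Return the TF_CONFIG JSON string for one worker in a multi-worker job."""
    if not 0 <= task_index < len(worker_hosts):
        raise ValueError("task_index must refer to an entry in worker_hosts")
    return json.dumps({
        "cluster": {"worker": list(worker_hosts)},
        "task": {"type": "worker", "index": task_index},
    })


# Hypothetical two-node cluster; each node exports its own index.
workers = ["node1.example.com:12345", "node2.example.com:12345"]
config = build_tf_config(workers, task_index=0)
print(config)
# On the node itself this string would be exported before starting training,
# e.g. os.environ["TF_CONFIG"] = config
```

Every worker receives the same `cluster` section and differs only in its `task` index.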

Horovod Framework: Horovod provides a framework-agnostic distributed training library supporting PyTorch, TensorFlow, and other frameworks. Ring-allreduce communication patterns optimize gradient synchronization efficiency.
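The ring-allreduce pattern can be illustrated with a small pure-Python simulation. This is a teaching sketch of the general algorithm (a reduce-scatter phase followed by an all-gather phase), not Horovod's or NCCL's implementation; the function name is hypothetical and the vector length is assumed to be divisible by the number of workers.

```python
def ring_allreduce(worker_grads):
    """Simulate ring all-reduce; every worker ends with the element-wise sum."""
    n = len(worker_grads)
    length = len(worker_grads[0])
    if length % n != 0:
        raise ValueError("vector length must be divisible by the worker count")
    chunk = length // n
    data = [list(g) for g in worker_grads]

    def span(c):
        return range(c * chunk, (c + 1) * chunk)

    # Phase 1, reduce-scatter: after n-1 steps worker r owns the full sum of chunk (r+1) % n.
    for step in range(n - 1):
        msgs = [((r - step) % n, None) for r in range(n)]
        msgs = [(c, [data[r][i] for i in span(c)]) for r, (c, _) in enumerate(msgs)]
        for r, (c, payload) in enumerate(msgs):
            dst = (r + 1) % n
            for i, v in zip(span(c), payload):
                data[dst][i] += v

    # Phase 2, all-gather: completed chunks travel around the ring and overwrite stale ones.
    for step in range(n - 1):
        msgs = [((r + 1 - step) % n, None) for r in range(n)]
        msgs = [(c, [data[r][i] for i in span(c)]) for r, (c, _) in enumerate(msgs)]
        for r, (c, payload) in enumerate(msgs):
            dst = (r + 1) % n
            for i, v in zip(span(c), payload):
                data[dst][i] = v
    return data


grads = [[1, 2, 3], [10, 20, 30], [100, 200, 300]]
for rank, result in enumerate(ring_allreduce(grads)):
    print(f"worker {rank}: {result}")
```

Each worker sends only one chunk per step, so the data sent per worker is about 2(N-1)/N times the gradient size regardless of how many workers join the ring. Dividing the result by the worker count gives the averaged gradient used in data-parallel training.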

Optimization Techniques for Multi-GPU Scaling

Achieving linear scaling across multiple GPUs requires careful optimization of communication patterns, batch sizes, and learning rate schedules.

Gradient Compression: Reducing gradient precision or applying sparsification techniques decreases communication volume, improving scaling efficiency on bandwidth-constrained networks.

Pipeline Parallelism: For extremely large models exceeding single-GPU memory, pipeline parallelism partitions models across GPUs, processing different micro-batches simultaneously through pipeline stages.

Zero Redundancy Optimizer (ZeRO): ZeRO partitions optimizer states, gradients, and parameters across GPUs, dramatically reducing per-GPU memory consumption for large model training. Microsoft DeepSpeed implements ZeRO with minimal code changes.
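The memory effect of ZeRO can be estimated with the accounting commonly used for mixed-precision Adam training: 2 bytes per parameter for FP16 weights, 2 bytes for FP16 gradients, and 12 bytes for FP32 optimizer state (master weights, momentum, variance). The sketch below applies that accounting per stage. It is an estimate of model state only; activations, buffers, and fragmentation are excluded, and the 7-billion-parameter model is a hypothetical example.

```python
def zero_memory_gb(params_billion, num_gpus, stage):
    """Estimate per-GPU model-state memory (GB) for ZeRO stages 0-3."""
    psi = params_billion * 1e9
    weights, grads, optimizer = 2 * psi, 2 * psi, 12 * psi  # bytes
    if stage >= 1:
        optimizer /= num_gpus   # stage 1 partitions optimizer states
    if stage >= 2:
        grads /= num_gpus       # stage 2 also partitions gradients
    if stage >= 3:
        weights /= num_gpus     # stage 3 also partitions parameters
    return (weights + grads + optimizer) / 1e9


# Hypothetical 7B-parameter model on an 8-GPU server.
for stage in range(4):
    print(f"ZeRO stage {stage}: {zero_memory_gb(7, 8, stage):.2f} GB per GPU")
```

Under these assumptions the model state drops from 112 GB per GPU without ZeRO to 14 GB with stage 3, which is the difference between not fitting and fitting on an 80GB GPU before activations are considered.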

Communication-Computation Overlap: Overlapping gradient communication with backward computation hides communication latency, improving GPU utilization during distributed training.

Performance Monitoring and Optimization

GPU Utilization Monitoring

Comprehensive monitoring ensures training jobs utilize available resources efficiently, identifying bottlenecks and optimization opportunities.

nvidia-smi Monitoring: The nvidia-smi utility provides real-time GPU metrics including utilization, memory consumption, temperature, and power draw. Automated monitoring scripts can log metrics for historical analysis.
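A monitoring script can be as simple as parsing the CSV output of `nvidia-smi --query-gpu=... --format=csv,noheader,nounits`. The sketch below separates the query from the parsing so the parser can be exercised without a GPU; the sample text is illustrative rather than a real measurement, the helper names are hypothetical, and the parser assumes every field is numeric (some GPUs report `[N/A]` for power, which would need extra handling).

```python
import csv
import io
import subprocess

QUERY = "index,utilization.gpu,memory.used,temperature.gpu,power.draw"


def query_gpu_metrics():
    """Run nvidia-smi and return its CSV output (requires an NVIDIA driver)."""
    cmd = ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"]
    return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout


def parse_gpu_metrics(csv_text):
    """Convert nvidia-smi CSV rows into a list of dictionaries."""
    rows = []
    for record in csv.reader(io.StringIO(csv_text), skipinitialspace=True):
        if not record:
            continue
        index, util, mem, temp, power = (value.strip() for value in record)
        rows.append({
            "index": int(index),
            "utilization_pct": int(util),
            "memory_used_mib": int(mem),
            "temperature_c": int(temp),
            "power_w": float(power),
        })
    return rows


# Illustrative sample in the format produced by the query above.
sample = "0, 97, 71234, 64, 655.31\n1, 12, 1024, 41, 98.20\n"
metrics = parse_gpu_metrics(sample)
idle = [gpu["index"] for gpu in metrics if gpu["utilization_pct"] < 50]
print(f"GPUs below 50% utilization: {idle}")
```

On a GPU server, `parse_gpu_metrics(query_gpu_metrics())` would return live values that can be appended to a log for historical analysis.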

DCGM (Data Center GPU Manager): DCGM provides enterprise-grade GPU monitoring, health checking, and telemetry collection. Integration with Prometheus and Grafana enables visualization of GPU metrics across entire clusters.

Framework Profilers: PyTorch Profiler and TensorFlow Profiler identify computational hotspots, memory bottlenecks, and data loading inefficiencies within training code.

Bottleneck Identification and Resolution

Common bottlenecks in multi-GPU training include data loading, communication overhead, and unbalanced computation.

Data Loading Bottlenecks: Insufficient data loading parallelism causes GPUs to idle waiting for batches. Increasing dataloader worker count and implementing data prefetching often resolves this issue. NVIDIA DALI provides GPU-accelerated data loading for computer vision workloads.

Communication Overhead: Excessive gradient synchronization frequency relative to computation time reduces scaling efficiency. Increasing batch size or implementing gradient accumulation improves compute-to-communication ratios.

Memory Limitations: Out-of-memory errors prevent utilizing optimal batch sizes. Gradient checkpointing, mixed precision training, and model parallelism techniques enable training larger models within memory constraints.

Benchmark Testing

Establishing baseline performance metrics guides optimization efforts and validates infrastructure configuration.

Synthetic Benchmarks: ResNet-50 image classification provides a standardized benchmark for comparing multi-GPU performance across different configurations. Recording images per second and scaling efficiency helps validate hardware setup.

Real Workload Testing: Benchmark actual training workloads to identify framework-specific bottlenecks and optimization opportunities. Measure epochs per day or time to target accuracy as practical performance metrics.

Scaling Efficiency Analysis: Compare training throughput (samples per second) when scaling from 1 to 2, 4, and 8 GPUs. Ideal linear scaling means 8 GPUs provide 8x single-GPU throughput. Deviations indicate communication or synchronization bottlenecks requiring investigation.
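Scaling efficiency is measured throughput divided by ideal linear throughput. The following sketch computes it from a throughput table; the throughput values are hypothetical placeholders to be replaced with your own benchmark results.

```python
def scaling_efficiency(throughputs):
    """Map GPU count -> efficiency, where 1.0 means perfect linear scaling."""
    baseline = throughputs[1]  # single-GPU samples per second
    return {
        gpus: measured / (gpus * baseline)
        for gpus, measured in sorted(throughputs.items())
    }


# Hypothetical samples-per-second measurements at 1, 2, 4, and 8 GPUs.
measured = {1: 1000.0, 2: 1900.0, 4: 3600.0, 8: 6400.0}
efficiency = scaling_efficiency(measured)
for gpus, value in efficiency.items():
    print(f"{gpus} GPU(s): {value:.0%} of linear scaling")
```

A steady decline as GPUs are added, as in this made-up data, usually points to communication or input-pipeline overhead growing with the worker count.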

Security and Access Control

Network Security Configuration

Multi-GPU training servers process sensitive datasets and proprietary models, requiring robust security controls.

Firewall Configuration: Restrict inbound connections to essential services (SSH, monitoring, cluster communication). Block unnecessary ports and implement rate limiting on management interfaces.

Virtual Private Networks: VPN access provides secure remote connectivity for researchers and administrators. WireGuard or OpenVPN offer performance and security for remote training job submission.

Network Segmentation: Isolate training infrastructure on dedicated VLANs separate from corporate networks. Implement firewall rules controlling traffic between segments based on least privilege principles.

User Authentication and Authorization

Multi-user environments require robust authentication and authorization systems preventing unauthorized access and ensuring audit trails.

SSH Key Management: Disable password authentication in favor of SSH public key authentication. Implement centralized key management for user onboarding and offboarding.

LDAP/Active Directory Integration: Integrate Linux authentication with corporate directory services for centralized user management. Groups-based authorization simplifies permission management for shared resources.

Sudo Access Controls: Limit sudo privileges to authorized administrators. Implement sudo logging for audit compliance and security incident investigation.

Data Protection and Privacy

Training datasets often contain sensitive or proprietary information requiring protection against unauthorized access and data breaches.

Encryption at Rest: Encrypt storage volumes containing training datasets using LUKS or similar technologies. Hardware-accelerated AES encryption minimizes performance impact.

Encryption in Transit: TLS/SSL encryption protects data transfers between training servers and storage systems. IPsec tunnels secure inter-node communication in distributed training clusters.

Access Logging and Auditing: Comprehensive logging of data access, training job submissions, and system changes enables security monitoring and compliance reporting.

Cost Optimization Strategies

Hardware Cost Analysis

Multi-GPU server infrastructure represents significant capital investment. Optimizing cost-per-training-hour requires balancing purchase price, performance, and operational expenses.

| Configuration | Hardware Cost | Performance (TFLOPS) | Cost per TFLOP | Power Draw |
|---|---|---|---|---|
| 4x L40S | $42,000 | 5,864 | $7.16 | 1,400W |
| 4x H100 NVL | $122,000 | 16,000 | $7.63 | 1,800W |
| 8x H100 NVL | $244,000 | 32,000 | $7.63 | 3,600W |
| 8x H200 HGX | $330,000 | 32,000 | $10.31 | 4,000W |
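The cost-per-TFLOP column is hardware cost divided by peak TFLOPS. The sketch below recomputes it from the table's own figures, using `Decimal` with half-up rounding so that values ending in exactly half a cent round the way a price list would; the helper name is hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP


def cost_per_tflop(hardware_cost_usd, peak_tflops):
    """Return dollars per peak TFLOP, rounded half-up to cents."""
    value = Decimal(hardware_cost_usd) / Decimal(peak_tflops)
    return value.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# (configuration, hardware cost in USD, peak TFLOPS) taken from the table above.
configs = [
    ("4x L40S", 42_000, 5_864),
    ("4x H100 NVL", 122_000, 16_000),
    ("8x H100 NVL", 244_000, 32_000),
    ("8x H200 HGX", 330_000, 32_000),
]
for name, cost, tflops in configs:
    print(f"{name}: ${cost_per_tflop(cost, tflops)} per TFLOP")
```

Peak TFLOPS is only one axis: the H200 row costs more per TFLOP but adds memory capacity and bandwidth, which this metric does not capture.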

Cloud vs On-Premise TCO Comparison

Organizations must evaluate total cost of ownership comparing on-premise GPU infrastructure against cloud GPU instances.

On-Premise Advantages:

- Lower per-hour cost after depreciation period (typically 3 years)

- No data egress charges for large dataset transfers

- Complete control over hardware, software, and security

- Consistent availability without spot instance interruptions

Cloud GPU Advantages:

- No upfront capital investment

- Rapid scaling for temporary high-demand periods

- Access to latest GPU architectures without hardware refresh

- Reduced operational overhead for small teams

Break-Even Analysis: For continuous training workloads, on-premise infrastructure typically achieves cost parity with cloud instances within 12-18 months. Intermittent workloads favor cloud rental, while sustained training benefits from on-premise investment.
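A break-even estimate divides the hardware purchase price by the monthly savings relative to cloud rental. The sketch below shows the shape of that calculation only. Every number in it is a hypothetical placeholder (the cloud hourly rate, the on-premise operating cost, and the utilization levels are assumptions, not quotes), so substitute your own figures.

```python
def break_even_months(hardware_cost, cloud_hourly_rate, utilization,
                      onprem_monthly_opex, hours_per_month=730):
    """Months until on-premise hardware pays for itself, or None if it never does."""
    cloud_monthly = cloud_hourly_rate * hours_per_month * utilization
    monthly_savings = cloud_monthly - onprem_monthly_opex
    if monthly_savings <= 0:
        return None  # renting is cheaper at this utilization
    return hardware_cost / monthly_savings


# Hypothetical inputs: $330,000 server, $40/hour equivalent cloud instance,
# $5,000/month on-premise power, cooling, and maintenance.
sustained = break_even_months(330_000, 40.0, utilization=1.0, onprem_monthly_opex=5_000)
intermittent = break_even_months(330_000, 40.0, utilization=0.15, onprem_monthly_opex=5_000)

print(f"continuous training: {sustained:.1f} months")
print(f"15% utilization: {'never breaks even' if intermittent is None else intermittent}")
```

With these made-up inputs the continuous case breaks even in under 14 months, while the lightly used case never does, which mirrors the sustained-versus-intermittent distinction above.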

Operational Cost Optimization

Beyond hardware acquisition, operational expenses including power, cooling, and maintenance significantly impact TCO.

Power Efficiency: Modern GPUs with improved performance-per-watt reduce electricity costs. A data center electricity rate of $0.10/kWh translates to $876 annual power cost per kilowatt of continuous load (8,760 hours per year). An 8-GPU server consuming 8kW costs $7,008 annually in electricity alone, before cooling overhead.
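Annual electricity cost is continuous load in kilowatts multiplied by 8,760 hours and the rate per kWh. A minimal sketch of that arithmetic, with a hypothetical function name and no allowance for cooling overhead or variable load:

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_electricity_cost(load_kw, rate_per_kwh):
    """Cost in dollars of running a constant load for one year."""
    return load_kw * HOURS_PER_YEAR * rate_per_kwh


per_kw = annual_electricity_cost(1, 0.10)
per_server = annual_electricity_cost(8, 0.10)
print(f"1 kW for a year at $0.10/kWh: ${per_kw:,.0f}")
print(f"8 kW server for a year at $0.10/kWh: ${per_server:,.0f}")
```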

Workload Consolidation: Higher GPU utilization spreads infrastructure costs across more training jobs. Kubernetes-based job scheduling maximizes utilization by packing multiple jobs onto available resources.

Resource Right-Sizing: Not all workloads require top-tier GPUs. Using L40S for inference and smaller model training reserves premium H100/H200 capacity for large model training where their capabilities are essential.

Troubleshooting Common Issues

GPU Detection and Driver Problems

Symptom: nvidia-smi shows no devices or incorrect GPU count

Solutions:

- Verify GPU physical installation and power connections

- Check that BIOS settings enable all PCIe slots and allocate adequate PCIe lanes

- Reinstall NVIDIA drivers ensuring compatibility with kernel version

- Confirm secure boot settings don’t block driver loading

Multi-GPU Training Performance Issues

Symptom: Training speed does not scale linearly with GPU count

Solutions:

- Monitor GPU utilization to identify idle GPUs indicating data loading bottlenecks

- Increase dataloader worker count and implement prefetching

- Analyze communication overhead with profiling tools

- Reduce gradient synchronization frequency through gradient accumulation

- Verify network bandwidth between nodes in distributed training

Out of Memory Errors

Symptom: CUDA out of memory errors during training

Solutions:

- Enable mixed precision training to reduce memory consumption

- Implement gradient checkpointing for large models

- Reduce batch size and compensate with gradient accumulation

- Apply model parallelism for models exceeding single-GPU memory

- Release tensors that are no longer needed inside training loops (for example, log loss.item() rather than retaining the loss tensor and its computation graph)

Network Configuration Issues

Symptom: Distributed training jobs fail to communicate between nodes

Solutions:

- Verify firewall rules allow traffic on required ports

- Confirm network interfaces are properly configured with correct IP addresses

- Test connectivity with ping and network bandwidth measurement tools

- Check environment variables specify correct master node address

- Validate NCCL library configuration for multi-node communication

Frequently Asked Questions

Q: What is the minimum number of GPUs needed for deep learning training?

A: A single GPU suffices for learning and small-scale projects. Professional deep learning development typically begins with 2-4 GPUs. Large model training requiring distributed approaches benefits from 8+ GPUs. The optimal count depends on model size, dataset size, and time-to-solution requirements.

Q: Is NVLink required for multi-GPU training?

A: NVLink is not strictly required but significantly improves performance for models requiring frequent GPU-to-GPU communication. A PCIe Gen 4 x16 link provides about 64 GB/s of bidirectional bandwidth (roughly 32 GB/s per direction), which is adequate for many training scenarios. NVLink’s 900 GB/s of aggregate bandwidth per GPU on H100-class hardware becomes essential for large model training, where communication overhead would otherwise limit scaling efficiency. Consumer GPUs typically lack NVLink, while enterprise HGX platforms include NVLink connectivity by default.
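To get a feel for the gap, the sketch below estimates gradient synchronization time with the standard idealized ring all-reduce model, in which each GPU transfers 2(N-1)/N times the payload. It is a rough lower bound under stated assumptions, not a benchmark: it ignores latency, topology, and overlap with compute. The per-direction bandwidths are taken as half of the 64 GB/s and 900 GB/s bidirectional figures quoted above, and the 14 GB payload is a hypothetical 7-billion-parameter model with 2-byte gradients.

```python
def ring_allreduce_seconds(
    payload_gb: float, num_gpus: int, link_gb_per_s: float
) -> float:
    """Idealized ring all-reduce time, ignoring latency and compute overlap."""
    if num_gpus < 2:
        return 0.0  # nothing to synchronize on a single GPU
    traffic_gb = 2 * (num_gpus - 1) / num_gpus * payload_gb
    return traffic_gb / link_gb_per_s


if __name__ == "__main__":
    payload_gb = 14.0  # assumption: 7B parameters x 2 bytes per gradient
    links = {
        "PCIe Gen 4 x16": 32.0,  # assumed GB/s per direction
        "NVLink (H100)": 450.0,  # assumed GB/s per direction
    }
    for name, bandwidth in links.items():
        seconds = ring_allreduce_seconds(payload_gb, 8, bandwidth)
        print(f"{name}: about {seconds:.3f} s per full gradient all-reduce")
```

If the estimated synchronization time is small relative to the compute time of one training step, PCIe is likely sufficient; if it is comparable or larger, interconnect bandwidth will dominate scaling.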

Q: How much system RAM is needed for a multi-GPU training server?

A: Allocate roughly 2-4x total GPU memory as system RAM. An 8x H100 system with 640GB of GPU memory should include 1.5-2TB of system RAM, which falls in the lower half of that range. Sufficient system memory enables efficient data preprocessing and caching, and it prevents CPU memory bottlenecks during data loading.
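The sizing rule is easy to apply to other configurations. This small sketch multiplies total GPU memory by the 2x and 4x bounds from the answer above; the function name is hypothetical.

```python
def recommended_system_ram_gb(
    num_gpus: int, gpu_memory_gb: float, low: float = 2.0, high: float = 4.0
) -> tuple[float, float]:
    """Return the (minimum, maximum) system RAM in GB under the 2-4x rule."""
    total_gpu_memory = num_gpus * gpu_memory_gb
    return total_gpu_memory * low, total_gpu_memory * high


if __name__ == "__main__":
    # 8x H100 80GB, matching the example in the text.
    minimum, maximum = recommended_system_ram_gb(num_gpus=8, gpu_memory_gb=80)
    print(f"Suggested system RAM: {minimum:.0f}-{maximum:.0f} GB")
```

For the 8x H100 example this gives 1280-2560 GB, so the 1.5-2TB recommendation sits inside the range.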

Q: What cooling solution is best for multi-GPU servers?

A: Air cooling suffices for small deployments (1-2 servers) with adequate data center cooling infrastructure. Liquid cooling becomes cost-effective for larger deployments (10+ servers) by reducing facility cooling costs by 30-40%. Direct liquid cooling supports the highest density, enabling 8-GPU configurations in compact chassis. Consider facility constraints, total deployment size, and TCO when selecting a cooling approach.

Q: Can I mix different GPU models in the same training server?

A: While technically possible, mixing GPU models complicates training configuration and limits performance. Different memory capacities, compute performance, and interconnect capabilities create imbalanced workload distribution. Homogeneous GPU configurations provide optimal performance and simplify troubleshooting. Reserve mixed GPU configurations for inference serving where different models have varying resource requirements.

Q: How do I choose between PyTorch and TensorFlow for multi-GPU training?

A: Both frameworks provide excellent multi-GPU support with minimal performance differences. PyTorch offers more flexible distributed training APIs and dominates academic research. TensorFlow provides superior production deployment tools and integration with TensorFlow Serving. Choose based on team expertise, existing codebase, and deployment requirements rather than multi-GPU capabilities.

Q: What network bandwidth is required for distributed training?

A: Single-server multi-GPU training relies primarily on NVLink, requiring standard Ethernet only for management. Distributed training across multiple servers benefits from 100GbE minimum, with 200-400GbE providing optimal performance for large-scale deployments. High-speed networking solutions from vendors like H3C and NVIDIA enable efficient gradient synchronization across hundreds of GPUs.

Q: How long does it take to set up a multi-GPU training server?

A: Initial hardware assembly and OS installation typically require 4-8 hours. CUDA driver installation, framework configuration, and validation testing add another 4-6 hours. Experienced teams can deploy production-ready multi-GPU servers in 1-2 days. Turnkey DGX systems reduce setup time to hours by arriving pre-configured with optimized software stacks.

Q: What is the typical lifespan of GPU training infrastructure?

A: Hardware depreciation typically assumes a 3-year useful life for financial planning. Physically, well-maintained GPUs function for 5-7 years. However, rapid AI advancement means older GPUs become computationally obsolete before they physically fail. Budget for hardware refresh cycles every 3-4 years to maintain competitive training performance as model complexity increases.

Q: Do I need InfiniBand or is Ethernet sufficient?

A: Ethernet with RDMA (RoCE v2) provides adequate performance for most training scenarios at lower cost than InfiniBand. 200/400GbE approaches InfiniBand bandwidth with slightly higher latency. InfiniBand remains the premium choice for cutting-edge performance in clusters exceeding 64 GPUs. NVIDIA Quantum-2 InfiniBand switches deliver maximum performance for organizations that need the shortest possible training time.

Conclusion

Configuring a multi-GPU training server for deep learning requires careful consideration of hardware selection, networking infrastructure, software optimization, and operational management. From selecting appropriate GPU configurations through implementing distributed training frameworks, each decision impacts training performance and total cost of ownership.

Organizations investing in multi-GPU infrastructure should focus on balanced system design, ensuring no single component bottlenecks overall performance. Enterprise platforms like NVIDIA HGX and DGX systems provide validated configurations that eliminate compatibility concerns and accelerate time-to-productivity.

As deep learning models continue growing in complexity and scale, properly configured multi-GPU training infrastructure becomes increasingly critical for organizations pursuing AI innovation. Following the principles outlined in this guide helps your investment deliver the best return through optimized performance, reliability, and scalability.

For enterprise GPU servers, networking solutions, and expert guidance on AI infrastructure deployment, visit ITCT Shop for comprehensive hardware solutions tailored to deep learning workloads.

Related Resources:

- NVIDIA HGX Platform Guide: H100 vs H200 vs B200 for GPU Clusters

- NVIDIA DGX Comparison Guide

- Complete NVIDIA GPU Buying Guide for AI & Data Centers

"When planning power budgets for multi-GPU clusters, looking at the thermal design power (TDP) of the GPU alone is a common mistake. In production environments, we consistently see that the auxiliary components (cooling fans, system memory, and storage fabric) add 20% to 30% overhead to the total rack density requirements." - Lead Data Center Architect

"The choice between InfiniBand and RoCE v2 often comes down to existing infrastructure rather than raw performance. While InfiniBand offers slightly lower latency for clusters exceeding 64 GPUs, modern 400GbE RoCE implementations deliver nearly identical throughput for 8-to-16 node clusters at a significantly lower total cost of ownership." - Senior Network Engineering Lead

"Hardware is only half the equation. Without properly configuring the software stack (specifically GPU driver persistence and pinning memory in PyTorch or TensorFlow), you can lose up to 15% of your theoretical compute performance due to unnecessary overhead during the data loading phase." - Principal AI Systems Engineer

Last updated: December 2025