High-Performance Storage for Generative AI: Solving the I/O Bottleneck

By ITCT Technical Team | January 22, 2026

What are the best AI Storage Solutions for Generative AI training?

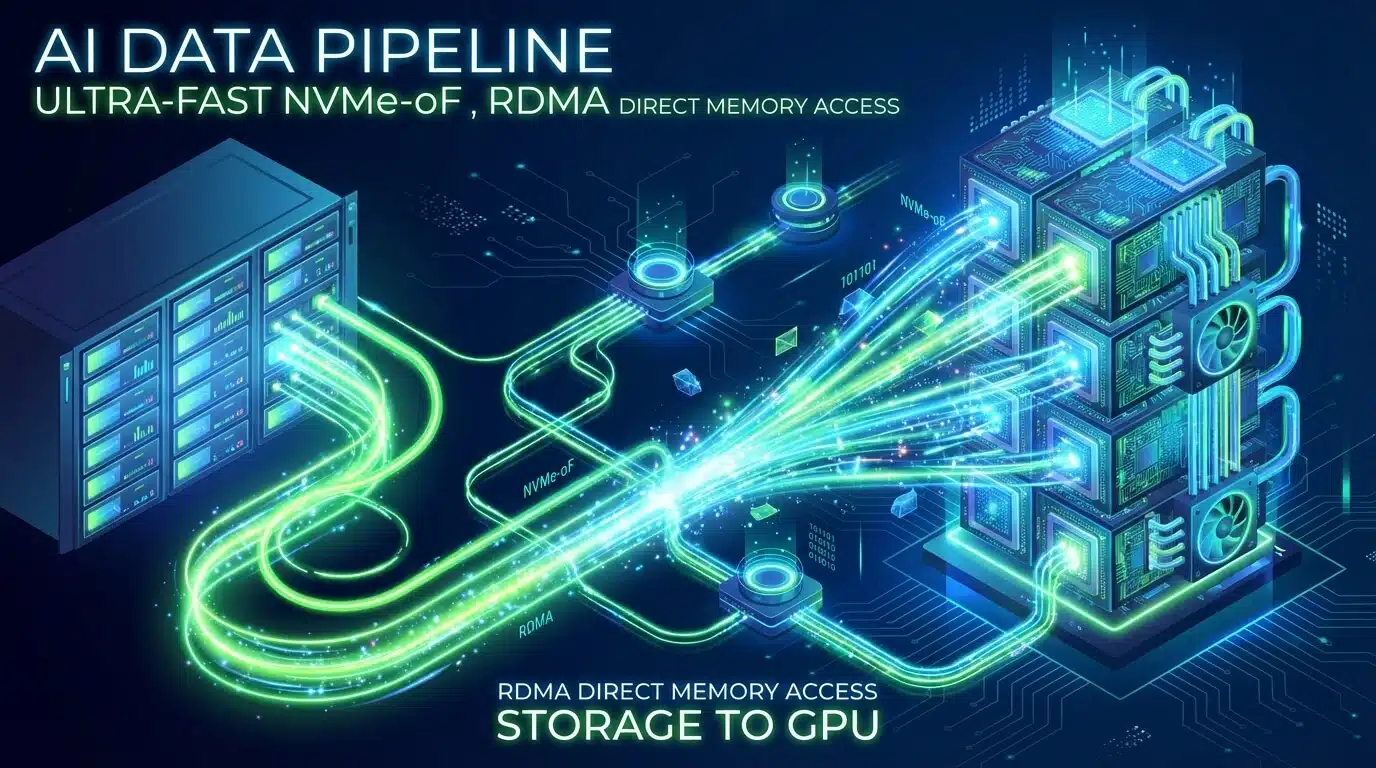

For Generative AI workloads involving large-scale training (LLMs) or high-throughput inference, traditional NAS solutions are insufficient due to I/O bottlenecks. The industry standard in 2026 relies on Parallel File Systems (like WekaFS or IBM Spectrum Scale) combined with NVMe-over-Fabrics (NVMe-oF) networking. This architecture lets multiple storage nodes serve data to GPU clusters simultaneously, and it uses RDMA to move data directly into memory without routing it through the host CPU, so that high-performance GPUs like the NVIDIA H100 are not left starved of data.

Key Decision Factors: AI Storage Solutions

When choosing a solution, prioritize sustained throughput (read and write GB/s) for streaming training data and writing checkpoints, and IOPS (Input/Output Operations Per Second) for workloads dominated by small, random reads. For clusters smaller than 8 GPUs, local NVMe RAID inside the server is often sufficient and cost-effective. However, for multi-node clusters (16+ GPUs), a centralized All-Flash Array with RDMA support over 400GbE or InfiniBand is effectively mandatory to maintain training efficiency and justify the high cost of compute hardware.
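The sizing guidance above can be written down as a small lookup. This is only a sketch of the rule of thumb as stated in this article; the function name `recommend_storage` is hypothetical, and the article gives no guidance for clusters of 8 to 15 GPUs, so the sketch flags that range instead of guessing.

```python
def recommend_storage(gpu_count: int) -> str:
    """Map a cluster size to the storage approach suggested in the text."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    if gpu_count < 8:
        # Small clusters: local NVMe RAID inside the server is often enough.
        return "local NVMe RAID"
    if gpu_count >= 16:
        # Multi-node clusters: centralized all-flash storage with RDMA.
        return "all-flash array with RDMA over 400GbE or InfiniBand"
    # The article states no rule for 8 to 15 GPUs; do not invent one.
    return "unspecified: benchmark both options"
```

For example, `recommend_storage(4)` returns the local NVMe option, while `recommend_storage(32)` returns the centralized all-flash option.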

In the era of trillion-parameter models, the spotlight often falls on the compute layer—specifically, the scarcity and power of GPUs like the NVIDIA H100 and the newer Blackwell architecture. However, seasoned AI architects know a darker truth: a GPU is only as fast as the data fed to it. As we move deeper into 2026, the primary bottleneck in Generative AI (GenAI) training and inference has shifted from compute availability to Input/Output (I/O) latency.


When an 8-GPU cluster sits idle waiting for training data to load, you aren’t just losing time; you are burning budget at an alarming rate. This article explores the critical storage architectures required to saturate modern GPU clusters, comparing parallel file systems and dissecting the NVMe-over-Fabrics revolution.

The Cost of Latency: Why Legacy Storage Fails GenAI

Traditional Network Attached Storage (NAS) architectures, which rely on standard TCP/IP and centralized metadata handling, crumble under the weight of GenAI workloads. Large Language Models (LLMs) and Diffusion models require massive read throughput during training, fast large writes during checkpointing, and low-latency random reads for shuffled data sampling and inference.

If your storage system cannot deliver data as fast as the GPUs consume it, you experience “GPU Starvation”: the accelerators sit idle while the data pipeline catches up. To prevent this, modern infrastructure must pivot towards solutions designed for high concurrency and massive throughput.

Technical Insight: An NVIDIA HGX H100 system can ingest data at speeds exceeding 400 GB/s. A standard enterprise NAS typically tops out at 2-5 GB/s. The gap is where performance dies.
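To put a number on that gap, here is a rough back-of-the-envelope model. It assumes the workload really does demand the full ingest rate and that no caching or compute overlap hides the shortfall, so it is an upper bound on idle time rather than a prediction. The function names and the hourly cluster cost parameter are hypothetical.

```python
def gpu_idle_fraction(demand_gb_s: float, supply_gb_s: float) -> float:
    """Fraction of time GPUs wait when storage supplies less than they demand.

    Simplifying assumption: the job is purely I/O-bound, so GPU busy time
    scales with supply / demand. Returns 0.0 when supply meets demand.
    """
    if demand_gb_s <= 0 or supply_gb_s <= 0:
        raise ValueError("rates must be positive")
    return max(0.0, 1.0 - supply_gb_s / demand_gb_s)


def wasted_cost_per_hour(idle_fraction: float, cluster_cost_per_hour: float) -> float:
    """Spend attributable to idle GPUs; the hourly cost is a user-supplied guess."""
    return idle_fraction * cluster_cost_per_hour


if __name__ == "__main__":
    # Figures quoted in the text: 400 GB/s ingest versus a 2-5 GB/s NAS.
    for nas_gb_s in (2.0, 5.0):
        idle = gpu_idle_fraction(400.0, nas_gb_s)
        print(f"NAS at {nas_gb_s} GB/s leaves GPUs idle about {idle:.0%} of the time")
```

Real training jobs rarely need the peak ingest rate continuously, so measured idle time is usually far lower; the point of the sketch is that the ratio of supply to demand, not raw capacity, drives the waste.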

Parallel File Systems: GPFS vs. Weka for Large-Scale AI Training

To handle petabytes of unstructured data (images, text, video), standard file systems are insufficient. This has led to the dominance of Parallel File Systems, which stripe data across many storage nodes (hundreds, in large deployments) so that clients can read from all of them simultaneously. Two heavyweights in this arena are IBM Spectrum Scale (formerly GPFS, since rebranded as IBM Storage Scale) and WekaIO (Weka).
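The idea of striping is easiest to see with a toy example. The sketch below uses plain round-robin placement with a fixed stripe size. It is an illustration of the general technique only, not the actual layout algorithm of GPFS or Weka, and the function names are hypothetical.

```python
def locate_stripe(offset: int, stripe_size: int, node_count: int) -> tuple[int, int]:
    """Return (node_index, offset_on_node) for a byte offset in a striped file."""
    if offset < 0 or stripe_size < 1 or node_count < 1:
        raise ValueError("invalid arguments")
    stripe_index = offset // stripe_size
    node = stripe_index % node_count
    # Each node stores every node_count-th stripe, packed back to back.
    local_stripe = stripe_index // node_count
    return node, local_stripe * stripe_size + offset % stripe_size


def nodes_touched(offset: int, length: int, stripe_size: int, node_count: int) -> set[int]:
    """Set of storage nodes that serve a read of `length` bytes at `offset`."""
    if length < 1:
        raise ValueError("length must be at least 1")
    first = offset // stripe_size
    last = (offset + length - 1) // stripe_size
    return {stripe % node_count for stripe in range(first, last + 1)}
```

With a 1 MiB stripe over four nodes, a 4 MiB read starting at offset 0 touches all four nodes, which is why a single large read can draw on the combined bandwidth of the cluster instead of one server.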

IBM Spectrum Scale (GPFS)

GPFS has been the gold standard for High-Performance Computing (HPC) for decades.

- Pros: Incredible stability, proven track record in massive supercomputing clusters, and distinct separation of data and metadata, which prevents bottlenecks during file lookups.

- Cons: Can be complex to deploy and tune. It often requires specific hardware configurations to maximize performance.

- Best For: Traditional HPC workloads, scientific simulation data, and hybrid cloud environments where legacy compatibility is key.

Weka (WekaFS)

Weka has emerged as an AI-native storage solution. It bypasses the traditional kernel I/O stack to communicate directly with the NVMe drives.

- Pros: Zero-copy architecture, exceptional handling of small file I/O (critical for vision datasets), and the ability to saturate network links fully using custom protocols over UDP.

- Cons: Licensing costs can be significant for smaller deployments.

- Best For: Dedicated GenAI training clusters, especially those using NVIDIA HGX systems where maximum GPU utilization is the only metric that matters.

| Feature | GPFS (Spectrum Scale) | Weka (WekaFS) | Standard NAS |
|---|---|---|---|
| Architecture | Distributed Metadata | Distributed/Sharded Metadata | Centralized Metadata |
| Protocol Support | POSIX, NFS, SMB, S3 | POSIX, NFS, SMB, S3, GPUDirect Storage | NFS, SMB |
| Small-File Performance | Good | Excellent | Poor |
| Setup Complexity | High | Moderate | Low |

NVMe-over-Fabrics (NVMe-oF): Reducing Latency in Data Pipelines

While the file system manages how data is organized, the transport protocol dictates how fast it moves. This is where NVMe-over-Fabrics (NVMe-oF) changes the game.

Unlike iSCSI or standard NFS, which wrap storage commands in SCSI or RPC layers on top of a heavy TCP/IP stack, NVMe-oF carries native NVMe commands across the network and so extends much of the efficiency of local NVMe SSDs to remote storage.

RoCE v2 and RDMA

The secret sauce of high-performance AI storage is Remote Direct Memory Access (RDMA). Using protocols like RoCE v2 (RDMA over Converged Ethernet), storage servers can write data directly into the memory of the GPU server, bypassing the host CPU on the data path.

- Lower Latency: Removing memory copies, context switches, and CPU interrupts from the data path drops latency from milliseconds to microseconds.

- CPU Offloading: Your CPUs (like the Intel Xeons or AMD EPYCs in our Xfusion MGX Servers) are free to manage orchestration rather than moving data packets.
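Why the move from milliseconds to microseconds matters becomes clear once per-request latency is multiplied by the number of requests. The sketch below is a deliberately simple model: it assumes every read costs the same latency, that a fixed number of requests overlap perfectly, and that bandwidth is never the limit. The workload size, queue depth, and both latency values are illustrative assumptions, not measurements.

```python
def total_read_time_s(num_reads: int, latency_s: float, in_flight: int = 1) -> float:
    """Estimate wall-clock time for a latency-bound batch of reads.

    Assumes uniform per-read latency and perfect overlap of `in_flight`
    outstanding requests; bandwidth limits are ignored.
    """
    if num_reads < 0 or latency_s < 0 or in_flight < 1:
        raise ValueError("invalid arguments")
    return num_reads * latency_s / in_flight


if __name__ == "__main__":
    reads = 1_000_000  # hypothetical: one million small-file reads
    depth = 32         # hypothetical: 32 requests in flight
    for label, latency in (("1 ms per read", 1e-3), ("100 us per read", 100e-6)):
        seconds = total_read_time_s(reads, latency, depth)
        print(f"{label}: roughly {seconds:.1f} s for the batch")
```

Under these assumptions a tenfold drop in latency gives a tenfold drop in batch time, which is the effect the RDMA data path is meant to deliver for small, random I/O.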

For environments building custom storage arrays, utilizing high-density drives like the 15.36TB Enterprise NVMe SSD over an NVMe-oF fabric helps keep your capacity tier close to the speed of your caching tier.

Scalable AI Storage Solutions for Petabyte-Scale AI Datasets

As models grow from billions to trillions of parameters, datasets are expanding into the petabyte range. Storing this data strictly on high-performance flash is expensive, but retrieving it from cold storage (tape/HDD) is too slow for active training.

The Tiering Strategy

A robust AI infrastructure strategy involves intelligent tiering:

- Hot Tier (NVMe): Holds the current epoch’s training data. This requires All-Flash Arrays like the Huawei OceanStor Dorado 6000, which offers sub-millisecond latency and active-active controller architecture for zero downtime.

- Warm Tier (QLC Flash/HDD): Holds the full dataset. Data is prefetched from here to the Hot Tier.

- Cold Tier (Object Storage): Archival data and older model checkpoints.
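The prefetch step between the warm and hot tiers can be sketched as a simple planner. This is a minimal illustration under stated assumptions: the `Shard` type, the `plan_prefetch` function, and the idea of tracking free hot-tier capacity in gigabytes are all hypothetical, and a production system would also handle eviction and failures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Shard:
    name: str
    size_gb: float


def plan_prefetch(upcoming: list[Shard], hot_resident: set[str], hot_free_gb: float) -> list[Shard]:
    """Choose shards to copy from the warm tier to the hot tier.

    Shards are taken in the order training will read them. Planning stops
    at the first shard that does not fit, so read order is preserved.
    """
    plan: list[Shard] = []
    for shard in upcoming:
        if shard.name in hot_resident:
            continue  # already on NVMe, nothing to copy
        if shard.size_gb > hot_free_gb:
            break
        plan.append(shard)
        hot_free_gb -= shard.size_gb
    return plan
```

Stopping at the first shard that does not fit, rather than skipping ahead to smaller ones, is a design choice: it keeps the hot tier filled with the data the trainer will ask for next.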

Hardware Considerations for 2026

- PCIe Gen 5.0 & 6.0: Ensure your storage backplanes support PCIe Gen 5.0, the host interface generation used by the H100/H200 GPUs, so that drives and NICs do not become the slow link, and plan for Gen 6.0 on newer platforms.

- Networking: Storage nodes should be connected via 400GbE or NDR InfiniBand (400Gb/s) to prevent the network from becoming the new bottleneck.
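Link speeds are quoted in gigabits per second while storage throughput is quoted in gigabytes per second, so a quick conversion helps when sizing the fabric. The sketch below shows the arithmetic; the `efficiency` parameter is a hypothetical allowance for protocol overhead that you would need to measure in your own environment.

```python
import math


def link_gb_per_s(link_gbit_s: float, efficiency: float = 1.0) -> float:
    """Convert a link rate in Gb/s to usable GB/s (8 bits per byte)."""
    if link_gbit_s <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid arguments")
    return link_gbit_s / 8.0 * efficiency


def links_needed(target_gb_s: float, link_gbit_s: float, efficiency: float = 1.0) -> int:
    """Minimum number of links required to carry a target throughput."""
    if target_gb_s <= 0:
        raise ValueError("target_gb_s must be positive")
    return math.ceil(target_gb_s / link_gb_per_s(link_gbit_s, efficiency))
```

A single 400 Gb/s link carries at most 50 GB/s, so `links_needed(400, 400)` returns 8, and assuming 90% usable bandwidth raises that to 9.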

Why Sourcing Locally in Dubai Matters

Deploying this level of infrastructure requires more than just buying parts; it requires supply chain reliability. For enterprises operating in the Middle East and Dubai, data sovereignty and rapid hardware replacement are critical.

At ITCTShop, located in the heart of Dubai’s tech hub, we specialize in high-availability supply chains for AI hardware. Whether you need immediate delivery of AI Storage Solutions to expand an existing cluster or are architecting a new data center from the ground up, our local presence ensures you bypass the long lead times typical of global distributors.

Conclusion: AI Storage Solutions

The battle for AI supremacy is won in the data pipeline. By implementing Parallel File Systems like Weka or GPFS, leveraging NVMe-oF for near-local latency, and selecting the right hardware foundation, organizations can unlock the full potential of their GPU investments. Don’t let your million-dollar compute cluster wait on a ten-cent disk read.

For consultation on architecting your AI storage layer, explore our full range of HGX Servers and storage arrays at ITCTShop.com.

“In 2026, we stopped asking ‘how much capacity do we need?’ and started asking ‘how fast can we feed the Tensor Cores?’ If your storage latency exceeds 2 milliseconds, your H100s are essentially taking a nap 30% of the time.” — Head of AI Infrastructure

“Many engineers overlook metadata performance. When training on millions of small image files, the bottleneck isn’t usually bandwidth; it’s the file system’s ability to handle metadata lookups. This is where parallel file systems drastically outperform standard enterprise storage.” — Senior Solutions Architect, HPC

“For most on-premise deployments in the Middle East, we recommend a hybrid approach: massive spinning disk (HDD) lakes for cold archival, but a dedicated, blistering fast NVMe-oF tier that holds only the active training set. It optimizes cost without sacrificing training speed.” — Lead Data Center Engineer

Last updated: December 2025