-

AI Bridge LTE TS2-08: The Ultimate 8/16-Channel Edge AI Analytics Powerhouse with LTE & GPS USD4,500

-

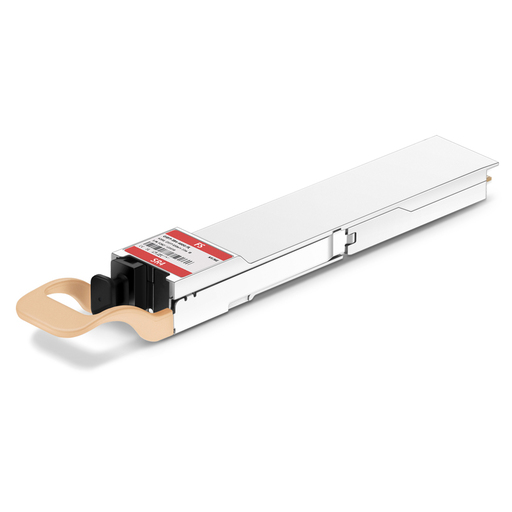

NVIDIA/Mellanox MMA4Z00-NS400 Compatible 400GBASE-SR4 OSFP Flat Top PAM4 850nm 50m DOM MPO-12/APC MMF InfiniBand NDR Optical Transceiver Module for ConnectX-7 HCA USD1,665

-

AI Bridge TE1-04 (4 Channel AI Device) USD1,700

-

NVIDIA RTX A5000

Rated 4.67 out of 5USD9,500 -

NVIDIA A100 80GB Tensor Core GPU USD15,000

-

NVIDIA RTX A5500 Professional Workstation Graphics Card: Ultimate Performance for Creative Professionals USD3,100

Products Mentioned in This Article

Linux vs Windows for AI: How Much Performance Do I Lose on WSL2?

Author: Content Editorial Team

Reviewer: Technical Infrastructure Team

Last Updated: February 28, 2026

Read Time: 4 minutes

References:

- Microsoft WSL2 architecture documentation (GPU-PV and WDDM)

- NVIDIA CUDA on WSL User Guide (driver stack and virtualization layer)

Quick Answer: WSL2 performance for AI

Running artificial intelligence tasks locally using Windows Subsystem for Linux 2 (WSL2) offers an incredibly convenient environment for developers, but it introduces measurable performance trade-offs compared to a native Linux installation. While WSL2 is highly capable for standard inference, prototyping, and everyday deep learning tasks, its lightweight virtual machine architecture creates specific bottlenecks. Users typically experience a slight VRAM overhead due to GPU paravirtualization and a minor compute translation penalty. For practitioners pushing the absolute limits of their hardware, understanding these hidden costs is essential to maintaining efficient workflows without abandoning the Windows ecosystem.

In most practical scenarios, the most severe performance degradation in WSL2 does not come from GPU virtualization, but rather from file I/O latency. Accessing training datasets located on a Windows NTFS drive across the OS boundary causes massive data loading delays. To achieve optimal performance, datasets must be stored entirely within the native WSL ext4 filesystem. Ultimately, native Linux remains the superior choice for users demanding maximum VRAM availability, multi-GPU stability, and the absolute highest throughput for multi-day training runs, whereas WSL2 excels in convenience for single-GPU inference and shorter fine-tuning operations.

Running AI locally is no longer a niche workflow. Between LLM inference, Stable Diffusion, LoRA fine-tuning, and custom training, many developers now expect their personal workstation to behave like a small research box. That brings an old question back to the front: should you run native Linux, or use Windows with WSL2, and what does WSL2 cost you in real performance?

WSL2 has matured a lot. For many everyday AI tasks it feels “close enough” to Linux. But it isn’t free: WSL2 is a lightweight virtual machine (VM) under the hood, and AI workloads are excellent at exposing small inefficiencies—especially when you’re pushing VRAM limits or streaming huge datasets.

The VRAM “Tax”: Why WSL2 Often Fits Slightly Less

For deep learning, VRAM is the hard ceiling. If the model (plus activations, KV cache, optimizer states, etc.) doesn’t fit, you’ll hit OOM or fall back to slower paths.

WSL2 uses GPU paravirtualization (GPU-PV) through Hyper-V/WDDM. In practice, many users observe that the same workload can report higher VRAM usage on WSL2 than on bare-metal Linux. The exact number varies by driver version, GPU, and framework, but it’s common to feel like you “lost” some headroom.

What that means in real life:

- On a GPU you’re already filling to the edge (e.g., 12GB/16GB cards, or 24GB cards with large context windows), even ~0.5–1.5GB less usable VRAM can be the difference between loading a model successfully and crashing with OOM.

- If you typically run with comfortable margins, you may never notice.
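To make the headroom arithmetic concrete, here is a minimal sketch. The function names, the 2.5 GiB allowance for activations and KV cache, and the 0.3 GiB and 1.3 GiB reserved figures are illustrative assumptions, not measurements; substitute numbers from your own system.

```python
GIB = 1024 ** 3


def weights_gib(n_params: float, bytes_per_param: int) -> float:
    """Approximate size of the model weights in GiB."""
    return n_params * bytes_per_param / GIB


def fits(required_gib: float, total_gib: float, reserved_gib: float) -> bool:
    """True if the workload fits in what is left after the reserved VRAM."""
    return required_gib <= total_gib - reserved_gib


# Hypothetical workload: 7B parameters in fp16 (2 bytes each) plus an
# assumed 2.5 GiB for activations and KV cache.
required = weights_gib(7e9, 2) + 2.5

# Hypothetical reserved VRAM on a 16 GiB card: 0.3 GiB on bare-metal Linux,
# 1.3 GiB on WSL2 (an assumed extra 1 GiB, inside the range quoted above).
print(f"required: {required:.2f} GiB")
print("fits on native Linux:", fits(required, 16.0, 0.3))
print("fits on WSL2:        ", fits(required, 16.0, 1.3))
```

With these assumed numbers the workload fits on native Linux but not on WSL2, which is the "edge of the card" situation described above.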

Compute Overhead: Sometimes Small, Sometimes Noticeable

On native Linux, CUDA workloads talk to Nvidia’s Linux driver stack directly. On WSL2, CUDA calls still run on the GPU, but they pass through a virtualization layer that maps Linux-side expectations onto the Windows driver model.

What you should expect

- Inference / prototyping: often near-native, especially if you’re not saturating I/O and not VRAM-bound.

- Long, heavy training or multi-day fine-tuning: overhead can become more visible. Depending on the workload, it’s not unusual to see a single-digit to low double-digit % gap, and in some scenarios it can be worse.

Instead of assuming a fixed penalty, it’s better to think like this:

- If you’re compute-bound (big matmuls, high GPU utilization, minimal CPU/I/O stalls), WSL2 may be fairly close.

- If you’re pipeline-bound (data loading, lots of small file reads, CPU transforms, frequent host↔device synchronization), WSL2 can lose more.
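One rough way to tell which case you are in is to time how long each step waits for data versus how long it spends computing. The sketch below assumes a generic iterable of batches and a `step_fn` callable; both names are hypothetical, and for GPU work `step_fn` would need to wait for the device to finish (for example with `torch.cuda.synchronize()` in PyTorch) before returning, otherwise the compute time is under-counted.

```python
import time


def profile_loop(batches, step_fn):
    """Split wall-clock time into 'waiting for data' and 'running the step'."""
    data_wait = 0.0
    compute = 0.0
    iterator = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            batch = next(iterator)  # time spent here is data loading
        except StopIteration:
            break
        t1 = time.perf_counter()
        step_fn(batch)              # time spent here is the training step
        t2 = time.perf_counter()
        data_wait += t1 - t0
        compute += t2 - t1
    total = data_wait + compute
    return {
        "data_wait_s": data_wait,
        "compute_s": compute,
        "data_wait_fraction": data_wait / total if total else 0.0,
    }
```

A high `data_wait_fraction` suggests a pipeline-bound run, where moving the dataset or restructuring the loader is likely to help more than anything GPU-related.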

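A simple way to model the impact on long runs:

$$T_{\text{WSL2}} = T_{\text{Linux}} \times (1 + \text{overhead})$$

So if a job takes 100 hours on Linux and you measure 20% overhead on WSL2:

$$T_{\text{WSL2}} = 100 \times (1 + 0.20) = 120 \text{ hours}$$

That’s 20 extra hours, nearly a full day lost, and worth caring about if you train often.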
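The overhead figure itself is best taken from your own step times rather than assumed. A minimal sketch, with hypothetical function names and made-up step times chosen to match the 100-hour, 20% example:

```python
def overhead_from_step_times(linux_step_s: float, wsl2_step_s: float) -> float:
    """Fractional slowdown of WSL2 relative to native Linux (0.20 means 20%)."""
    if linux_step_s <= 0:
        raise ValueError("linux_step_s must be positive")
    return wsl2_step_s / linux_step_s - 1.0


def projected_hours(linux_hours: float, overhead: float) -> float:
    """Estimated WSL2 run time for a job that takes linux_hours natively."""
    return linux_hours * (1.0 + overhead)


def extra_hours(linux_hours: float, overhead: float) -> float:
    """Additional hours spent on WSL2 compared with native Linux."""
    return linux_hours * overhead


# Hypothetical measurement: 0.50 s per step on Linux, 0.60 s per step on WSL2.
overhead = overhead_from_step_times(0.50, 0.60)
print(f"overhead: {overhead:.0%}")
print(f"projected WSL2 time: {projected_hours(100, overhead):.1f} h")
print(f"extra time: {extra_hours(100, overhead):.1f} h")
```

This treats the overhead as constant over the whole run, which is a simplification; measure over enough steps that warm-up and caching effects average out.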

The Silent Killer: File I/O Across the Windows ↔ Linux Boundary

Many “WSL2 is slow” stories are not about GPU at all—they’re about where the dataset lives.

WSL2 has its own Linux filesystem (ext4 inside a VHD). When you operate inside that filesystem, performance can be solid. The trap is putting datasets on the Windows NTFS drive and accessing them via:

`/mnt/c/...` (or `/mnt/d/...`)

Crossing that boundary is slower, especially for millions of small files (common in vision datasets) and metadata-heavy workloads. Your GPU ends up waiting for batches, and utilization drops.

Best practice: keep training data inside WSL’s Linux filesystem.

```bash
# Copy datasets into the WSL ext4 filesystem before training
cp -r /mnt/c/Users/Developer/AI_Datasets ~/native_datasets
```
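If you are unsure where a dataset directory actually lives, you can look up its filesystem type in `/proc/mounts`. The sketch below rests on an assumption worth verifying on your own machine: that WSL2 exposes Windows drives through a `9p` mount (sometimes labelled `drvfs`). The helper names and the sample mount table are hypothetical.

```python
import posixpath
from typing import Optional

# Assumption: Windows drives such as /mnt/c appear as 9p (or drvfs) mounts.
WINDOWS_BACKED_FSTYPES = {"9p", "drvfs"}

# Hypothetical, simplified excerpt of /proc/mounts inside WSL2.
SAMPLE_MOUNTS = """\
/dev/sdc / ext4 rw,relatime 0 0
drvfs /mnt/c 9p rw,noatime 0 0
"""


def fstype_for_path(path: str, mounts_text: str) -> Optional[str]:
    """Return the filesystem type of the longest mount point containing path."""
    path = posixpath.normpath(path)  # expects an absolute path
    best_mount, best_type = "", None
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue
        mount_point, fstype = fields[1], fields[2]
        prefix = mount_point.rstrip("/") + "/"
        inside = path == mount_point or path.startswith(prefix)
        if inside and len(mount_point) > len(best_mount):
            best_mount, best_type = mount_point, fstype
    return best_type


def is_windows_backed(path: str, mounts_text: Optional[str] = None) -> bool:
    """True if path sits on a mount type assumed to cross into Windows."""
    if mounts_text is None:
        with open("/proc/mounts", encoding="utf-8") as fh:
            mounts_text = fh.read()
    return fstype_for_path(path, mounts_text) in WINDOWS_BACKED_FSTYPES


print(is_windows_backed("/mnt/c/Users/Developer/AI_Datasets", SAMPLE_MOUNTS))
print(is_windows_backed("/home/developer/native_datasets", SAMPLE_MOUNTS))
```

Calling `is_windows_backed(path)` without the second argument reads the real mount table, so it can serve as a guard at the top of a training script.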

If storage space is tight, consider restructuring datasets into larger shard files (e.g., WebDataset/tar shards) to reduce small-file overhead.
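As a rough illustration of sharding, the sketch below packs a directory of small files into plain tar archives using only the Python standard library. It is not the WebDataset tooling itself and does not enforce WebDataset's sample-grouping conventions; the function name, shard naming scheme, and default shard size are assumptions.

```python
import tarfile
from pathlib import Path


def write_shards(src_dir, out_dir, files_per_shard=1000):
    """Pack every file under src_dir into numbered tar shards in out_dir.

    Keep out_dir outside src_dir so old shards are not re-packed.
    """
    src, out = Path(src_dir), Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    # Sorting keeps files with the same stem next to each other.
    files = sorted(p for p in src.rglob("*") if p.is_file())
    shard_paths = []
    for start in range(0, len(files), files_per_shard):
        shard_path = out / f"shard-{start // files_per_shard:06d}.tar"
        with tarfile.open(shard_path, "w") as tar:
            for f in files[start:start + files_per_shard]:
                # Store paths relative to src_dir inside the archive.
                tar.add(f, arcname=f.relative_to(src).as_posix())
        shard_paths.append(shard_path)
    return shard_paths
```

Reading a few large archives sequentially replaces many per-file open and stat calls, which is where metadata-heavy workloads tend to lose time.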

Stability and Feature Gaps That Matter in “Serious” Setups

WSL2 is excellent, but native Linux still wins for certain advanced or production-like needs:

- Multi-GPU training (NCCL quirks, topology, edge cases): often smoother on Linux.

- High-performance networking (RDMA/InfiniBand): generally a Linux-first story.

- Tighter control of kernel, drivers, and system services: easier on Linux.

- Headless/server-style operation: Linux is still the default.

For a single-GPU workstation doing local fine-tunes and inference, WSL2 is usually viable—just not always optimal.

How to Get the Best Performance on WSL2 (Quick Checklist)

- Keep code + data inside WSL (ext4)
  Avoid training from `/mnt/c/` whenever possible.

- Update WSL and GPU support
  - Run `wsl --update`.
  - Use current NVIDIA drivers that explicitly support CUDA on WSL.

- Give WSL2 enough resources
  Configure `.wslconfig` (CPU/RAM/swap) if you see memory pressure or paging.

- Measure instead of guessing
  - Track GPU utilization and memory: `nvidia-smi`
  - Benchmark your actual training loop (tokens/sec, images/sec, step time), not synthetic FLOPS.
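For the measurement step, `nvidia-smi` can emit machine-readable output that is easy to log next to your step times. The sketch below uses its `--query-gpu` and `--format=csv,noheader,nounits` options; the helper names and the sample line are hypothetical, and the parser assumes every field is numeric (some setups report values such as `[N/A]`, which this sketch does not handle).

```python
import subprocess

QUERY_FIELDS = "utilization.gpu,memory.used,memory.total"

# Hypothetical sample of the CSV output for a single GPU.
SAMPLE_OUTPUT = "87, 10240, 24576\n"


def parse_gpu_csv(text):
    """Parse csv,noheader,nounits output into one dict per GPU."""
    gpus = []
    for line in text.splitlines():
        if not line.strip():
            continue
        util, used, total = (field.strip() for field in line.split(","))
        gpus.append({
            "util_pct": int(util),
            "mem_used_mib": int(used),    # nvidia-smi reports memory in MiB
            "mem_total_mib": int(total),
        })
    return gpus


def query_gpus():
    """Run nvidia-smi once and return the parsed readings."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


print(parse_gpu_csv(SAMPLE_OUTPUT))
```

Running `query_gpus()` under the same workload on WSL2 and on native Linux gives a like-for-like view of memory use and utilization, rather than relying on impressions.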

Choosing Between Native Linux and WSL2 (Practical Rule)

WSL2 is a great choice if:

- you need Windows for work apps, Office, Adobe tools, enterprise VPNs, or gaming

- your AI work is mostly inference, experimentation, short fine-tunes

- you value convenience more than absolute throughput

Native Linux is still the best choice if:

- you train frequently, for long durations, and time-to-result matters

- you’re VRAM-limited and every GB of headroom counts

- you run multi-GPU training or want maximum system-level predictability


Expert Quotes

“In most scenarios involving local LLM inference or brief prototyping, the compute overhead of WSL2 is virtually unnoticeable. However, for multi-day training runs, a small percentage gap in processing speed can easily compound into significant lost time.” — Data Science Operations Team

“The VRAM tax introduced by GPU paravirtualization means you will typically lose a slight amount of usable memory. When working with large models that push your hardware limits to the edge, this missing capacity can trigger out-of-memory errors that simply would not occur on a bare-metal Linux environment.” — Hardware Testing Team

“We usually find that I/O bottlenecks across the Windows and Linux file system boundary cause much more severe performance drops than the actual GPU compute translation. It is highly recommended to always migrate your training datasets directly into the native WSL ext4 environment before initiating a run.” — Infrastructure Architecture Team

Last update at December 2025