-

NVIDIA DGX H100 ( 8×H100 SXM5 AI Supercomputing Platform ) USD520,000

-

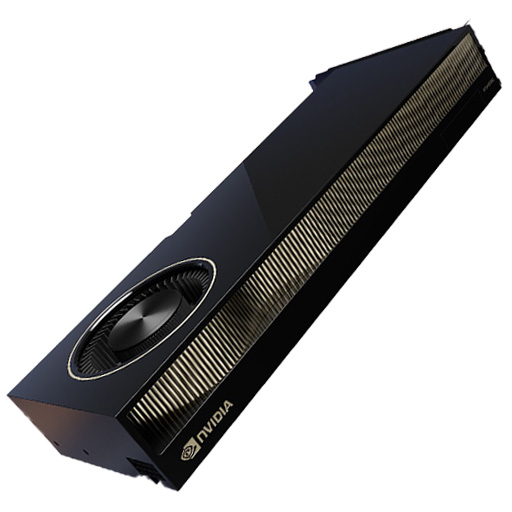

NVIDIA RTX A6000: The Ultimate Professional Workstation GPU for Demanding Workflows USD9,000

-

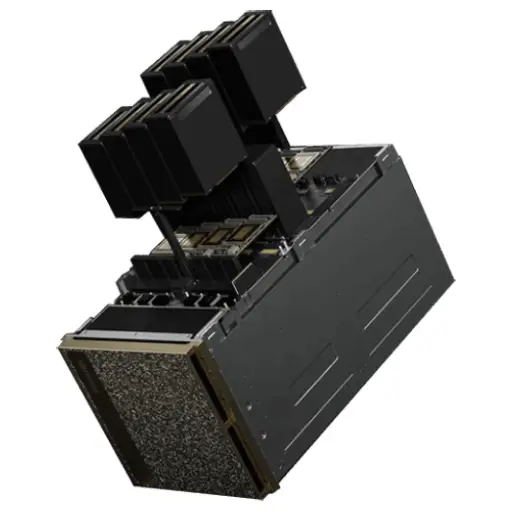

NVIDIA DGX B200 (AI Supercomputer – 8× Blackwell B200 SXM5 GPUs, 2× Intel Xeon 8570, 2TB DDR5, 34TB NVMe) USD600,000

-

Aetina MegaEdge AIP-FR68 (PCIe AI Training Workstation) USD15,000

-

NVIDIA RTX 6000 Ada Generation Graphics Card USD9,000

-

Aetina SuperEdge AEX-2UA1 (MGX Server) USD15,000

Products Mentioned in This Article

NVIDIA H100 NVL GPU

USD33,000Original price was: USD33,000.USD30,500Current price is: USD30,500.NVIDIA T4 Tensor Core GPU: The Smart Choice for AI Inference and Data Center WorkloadsUSD950

NVIDIA HGX B200 (8-GPU) PlatformUSD390,000

HGX H100 Optimized X13 8U 8GPU Server: The Ultimate AI and HPC Powerhouse for Exascale ComputingUSD300,000

HGX H200 Optimized X13 8U 8GPU ServerUSD330,000

HGX Platform Guide: H100 vs H200 vs B200 for GPU Clusters

Author: Enterprise Infrastructure Editorial Team

Reviewed By: Senior AI Hardware Architect

Last Updated: January 10, 2026

Reading Time: 18 Minutes


Quick Answer

The NVIDIA HGX platform is the industry-standard architecture for high-performance AI servers, offering a validated baseboard design that integrates 8 GPUs with high-bandwidth NVLink interconnects. It is currently available in three primary generations: the HGX H100 (Hopper) for general-purpose training; the HGX H200 (enhanced Hopper), whose 141GB of HBM3e per GPU suits memory-bound inference and large batch sizes; and the HGX B200 (Blackwell), which NVIDIA rates at up to 3× faster training and up to 15× higher inference throughput than HGX H100, the latter relying on FP4 precision.

Decision Guide

Organizations should select the HGX H100 for cost-effective training of models under 100B parameters. The HGX H200 is the best fit for high-throughput inference serving that requires extensive key-value caching (e.g., long-context LLMs). The HGX B200 is best reserved for hyperscale deployments and frontier model training, where an estimated 40% lower TCO per job can justify its higher acquisition cost and increased power density (up to 8,000W of GPU power per baseboard, before CPUs, networking, and cooling).

Understanding NVIDIA HGX: The Foundation of Modern GPU Server Infrastructure

In the rapidly evolving landscape of AI infrastructure, data center operators, cloud service providers, and enterprises building GPU clusters face increasingly complex decisions about which hardware platforms deliver the best performance, scalability, and return on investment across workloads such as large language model training, computer vision, recommendation systems, scientific computing, and real-time inference serving. The NVIDIA HGX platform has become the industry-standard foundation for high-performance GPU servers: a standardized baseboard that integrates multiple NVIDIA GPUs with high-bandwidth NVLink interconnects, advanced thermal management, and validation for reliable operation under sustained maximum load. This guide examines three HGX generations, HGX H100 (Hopper), HGX H200 (Hopper with HBM3e), and HGX B200 (Blackwell), and gives technical decision-makers the specifications, performance comparisons, deployment considerations, and strategic guidance needed to match a GPU server platform to their workload requirements, budget constraints, and long-term infrastructure roadmap.

The HGX platform’s fundamental value proposition lies in its standardization of GPU server architecture, enabling server OEMs including Supermicro, Dell, HPE, Lenovo, ASUS, Gigabyte, and dozens of additional vendors to build compatible systems around a common NVIDIA-designed baseboard containing GPUs, NVLink switches, and power delivery infrastructure. This standardization delivers multiple strategic advantages: accelerated time-to-market for new GPU generations as OEMs can rapidly integrate validated HGX baseboards into existing server chassis designs; improved reliability through extensive NVIDIA validation testing ensuring thermal, electrical, and mechanical specifications meet stringent requirements; simplified procurement as organizations can source HGX-based servers from multiple vendors with confidence in consistent performance characteristics; and enhanced ecosystem support with optimized software stacks, diagnostic tools, and technical documentation applicable across diverse HGX implementations regardless of specific server vendor. For organizations building GPU clusters ranging from departmental research installations with 10-50 servers through hyperscale deployments exceeding thousands of nodes, understanding HGX platform evolution across H100, H200, and B200 generations is essential for making informed infrastructure investments that balance immediate performance requirements against future scalability needs and technology refresh cycles.

The HGX H100 8-GPU platform, introduced alongside NVIDIA’s Hopper architecture, established performance baselines for modern AI infrastructure with eight H100 SXM5 GPUs delivering aggregate compute throughput of 32 petaFLOPS (FP8), 640GB total GPU memory, and fourth-generation NVLink providing 900GB/s bidirectional bandwidth per GPU—specifications enabling training of large language models with 10-200 billion parameters, computer vision model development at scale, and high-performance computing applications requiring substantial double-precision floating-point capabilities. Building upon this foundation, the HGX H200 platform addresses memory capacity limitations increasingly encountered in trillion-parameter model development by upgrading GPUs to 141GB HBM3e memory each (1.13TB total) with 4.8TB/s per-GPU bandwidth—enhancements particularly valuable for inference serving workloads requiring extensive key-value caching and training scenarios benefiting from larger batch sizes that improve GPU utilization and accelerate convergence. The revolutionary HGX B200 platform powered by Blackwell architecture GPUs represents a generational leap in performance density, delivering 72 petaFLOPS FP8 training compute and 144 petaFLOPS FP4 inference throughput while expanding memory to 180GB per GPU (1.44TB total) and introducing fifth-generation NVLink with 1.8TB/s per-GPU bandwidth—improvements translating into 2-3× faster training for large language models, 12-15× higher inference throughput compared to H100, and dramatically improved energy efficiency reducing operational costs across multi-year deployment lifecycles.


NVIDIA HGX H100: Hopper Architecture Foundation for Enterprise GPU Clusters

The NVIDIA HGX H100 platform centers on an eight-GPU baseboard featuring H100 SXM5 Tensor Core GPUs connected through four third-generation NVSwitch chips. The NVSwitches form a non-blocking, all-to-all fabric: any GPU can communicate with any other GPU at full NVLink bandwidth, with each transfer crossing a single NVSwitch hop rather than a congestion-prone multi-hop path. Each H100 GPU incorporates 80GB of HBM3 memory operating at 3.35TB/s, 16,896 CUDA cores for general-purpose parallel computing, and 528 fourth-generation Tensor Cores optimized for mixed-precision AI training in FP8, FP16, BF16, and TF32 formats. Each GPU has 900GB/s of bidirectional NVLink bandwidth into the switch fabric (a per-GPU figure, not per GPU pair), aggregating to 7.2TB/s across the eight-GPU configuration. This interconnect performance is critical for distributed training, where gradient all-reduce operations, activation exchange between pipeline stages, and model-parallel communication can consume 20-40% of total training time if GPU-to-GPU bandwidth is insufficient.
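
The effect of NVLink bandwidth on gradient synchronization can be estimated with a bandwidth-only model of a ring all-reduce, in which each GPU sends and receives 2(n-1)/n of the gradient payload. The sketch below assumes a hypothetical 7B-parameter model with BF16 gradients and treats each quoted bidirectional NVLink figure as split evenly between the two directions. It ignores latency, protocol overhead, and overlap with compute, and NCCL may pick other algorithms (such as trees), so the results are idealized lower bounds, not measurements.

```python
def ring_allreduce_seconds(payload_bytes: float, n_gpus: int, link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of a ring all-reduce.

    Each GPU sends (and receives) 2 * (n - 1) / n of the payload:
    a reduce-scatter phase followed by an all-gather phase.
    Latency, protocol overhead, and compute overlap are ignored.
    """
    if n_gpus < 2:
        return 0.0
    traffic_per_gpu = 2 * (n_gpus - 1) / n_gpus * payload_bytes
    return traffic_per_gpu / link_bytes_per_s

# Hypothetical payload: BF16 gradients (2 bytes each) of a 7B-parameter model.
grad_bytes = 7e9 * 2
# Assumption: 900 GB/s bidirectional NVLink ~ 450 GB/s per direction.
h100_s = ring_allreduce_seconds(grad_bytes, 8, 450e9)
# Assumption: 1.8 TB/s bidirectional NVLink ~ 900 GB/s per direction.
b200_s = ring_allreduce_seconds(grad_bytes, 8, 900e9)
print(f"H100 NVLink: {h100_s * 1e3:.1f} ms, B200 NVLink: {b200_s * 1e3:.1f} ms")
```

Because the model is purely bandwidth-bound, doubling per-GPU NVLink bandwidth halves the estimated all-reduce time; real gains are smaller when latency or compute dominates.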

The HGX H100 baseboard operates within a tightly constrained thermal envelope requiring sophisticated cooling solutions to dissipate approximately 5,600 watts of heat generated by eight 700W TDP GPUs under sustained maximum computational loads. Server OEM implementations typically employ high-velocity airflow designs with multiple hot-swappable fans creating positive pressure through carefully engineered airflow paths that channel cooling air directly across GPU heat sinks, NVSwitch chips, and power delivery components. This thermal management complexity necessitates data center infrastructure providing cold aisle supply air temperatures between 18-22°C, adequate volumetric airflow capacity to support multiple densely-packed GPU servers within individual racks, and comprehensive monitoring systems detecting thermal anomalies that could trigger GPU thermal throttling and corresponding performance degradation. Organizations deploying HGX H100-based servers should carefully evaluate data center cooling capacity, implement hot-aisle containment strategies maximizing cooling efficiency, and establish thermal monitoring baselines enabling proactive identification of cooling infrastructure issues before they impact production workloads.
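
A rough airflow budget follows from the sensible-heat relation P = ρ · c_p · V̇ · ΔT. This sketch assumes standard air properties (ρ ≈ 1.2 kg/m³, c_p ≈ 1005 J/(kg·K)) and hypothetical inlet-to-outlet temperature rises, and it counts only the 5,600W of GPU TDP. CPUs, NICs, fans, and power-supply losses add to the real load.

```python
RHO_AIR = 1.2          # kg/m^3, assumed air density near sea level
CP_AIR = 1005.0        # J/(kg*K), assumed specific heat of air
M3S_TO_CFM = 2118.88   # cubic metres per second -> cubic feet per minute

def required_airflow_cfm(heat_watts: float, delta_t_kelvin: float) -> float:
    """Airflow needed to remove `heat_watts` with the given inlet-to-outlet air temperature rise."""
    m3_per_s = heat_watts / (RHO_AIR * CP_AIR * delta_t_kelvin)
    return m3_per_s * M3S_TO_CFM

gpu_heat_w = 8 * 700  # GPU TDP only (hypothetical worst case at full load)
for rise_k in (10, 15):  # hypothetical design temperature rises
    print(f"rise {rise_k} K: {required_airflow_cfm(gpu_heat_w, rise_k):.0f} CFM")
```

A larger permitted temperature rise reduces the required airflow proportionally, which is one reason hot-aisle containment (keeping exhaust air from mixing back into the cold aisle) matters.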

HGX H100 Complete Technical Specifications

| Component | Specification | Details |
|---|---|---|
| Platform | NVIDIA HGX H100 | 8-GPU Hopper baseboard |
| GPU Model | 8× NVIDIA H100 SXM5 | Tensor Core GPUs |
| GPU Architecture | NVIDIA Hopper | 4nm process technology |
| GPU Memory per Accelerator | 80GB HBM3 | High-bandwidth memory |
| Total GPU Memory | 640GB | Aggregate across 8 GPUs |
| Memory Bandwidth per GPU | 3.35 TB/s | HBM3 technology |
| Total Memory Bandwidth | 26.8 TB/s | Aggregate bandwidth |
| FP8 Tensor Core Performance | ~4 PFLOPS per GPU | ~32 PFLOPS total (with sparsity) |
| FP16 Tensor Core Performance | ~2 PFLOPS per GPU | ~16 PFLOPS total (with sparsity) |
| TF32 Tensor Core Performance | ~1 PFLOPS per GPU | ~8 PFLOPS total (with sparsity) |
| FP64 Tensor Core Performance | 67 TFLOPS per GPU | 536 TFLOPS total |
| NVLink Generation | 4th Generation | GPU interconnect |
| NVLink Bandwidth per GPU | 900 GB/s bidirectional | Per-GPU bandwidth |
| Total NVLink Bandwidth | 7.2 TB/s | Aggregate interconnect |
| NVSwitch Configuration | 4× 3rd Gen NVSwitch | All-to-all GPU fabric |
| GPU TDP | 700W per GPU | Maximum power per accelerator |
| Total GPU Power | 5,600W | Eight GPUs maximum |
| Baseboard Form Factor | Proprietary HGX standard | OEM server integration |
| Cooling Requirement | High-velocity forced air | Data center infrastructure |
| Server Form Factors | 4U, 5U, 8U, 10U | Varies by OEM implementation |
| PCIe Host Interface | PCIe Gen 5.0 × 16 per GPU | CPU-GPU communication |

FP8, FP16, and TF32 Tensor Core figures are peak values with structured sparsity; dense throughput is roughly half. The FP64 Tensor Core figure is dense.

HGX H100 Performance Characteristics and Workload Optimization

The HGX H100 platform delivers strong performance across AI and HPC workload categories, with architectural optimizations that particularly benefit large-scale distributed training, where efficient inter-GPU communication directly determines overall training throughput. In large language model training (GPT-style transformers, BERT variants, and T5 architectures from 7 billion to 175 billion parameters), the high NVLink bandwidth minimizes gradient synchronization overhead during the all-reduce operations that aggregate gradients across all eight GPUs in data-parallel configurations, and mixed-precision FP8/FP16 training with activation checkpointing and FlashAttention raises throughput further. Training-time claims for models such as Llama 2 70B should be checked against the compute budget, however: at roughly 6 FLOPs per parameter per token, a 1-trillion-token run on a 70B model needs about 4.2×10^23 FLOPs, which would occupy a single HGX H100 server for well over a year at realistic utilization, not days. Foundation-model runs of this size are therefore distributed across many servers connected by high-bandwidth networking, and wall-clock time falls roughly in proportion to the number of servers, minus scaling losses.
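
A quick way to sanity-check LLM training-time claims is the common approximation of about 6 FLOPs per parameter per training token. The sketch below assumes roughly 989 TFLOPS of dense BF16 Tensor Core throughput per H100 SXM5 and a hypothetical 40% model-FLOPs utilization (MFU); real utilization depends heavily on the parallelism strategy and software stack.

```python
SECONDS_PER_DAY = 86_400

def training_days(params: float, tokens: float, peak_flops: float, mfu: float) -> float:
    """Estimate wall-clock days using the ~6 * N * D training-FLOPs rule of thumb.

    params: model parameters (N); tokens: training tokens (D);
    peak_flops: platform peak FLOP/s; mfu: assumed fraction of peak achieved (0-1).
    """
    total_flops = 6 * params * tokens
    return total_flops / (peak_flops * mfu) / SECONDS_PER_DAY

# Assumptions: 8 GPUs x ~989 TFLOPS dense BF16, 40% MFU (hypothetical).
hgx_h100_bf16 = 8 * 989e12
one_server = training_days(70e9, 1e12, hgx_h100_bf16, 0.40)
print(f"1 server: {one_server:,.0f} days")
print(f"64 servers, ideal scaling: {one_server / 64:,.0f} days")
```

Under these assumptions a 70B-parameter, 1-trillion-token run needs on the order of 1,500 server-days, which is why such runs are spread across dozens or hundreds of servers.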

Computer vision applications, including object detection (Faster R-CNN, YOLO variants, DETR), instance segmentation (Mask R-CNN, PointRend), semantic segmentation (DeepLab, SegFormer), and video understanding models (Video Swin Transformer, TimeSformer), benefit substantially from the H100’s higher FP16 and TF32 Tensor Core throughput compared to the previous-generation Ampere architecture. Training ResNet-50 for ImageNet classification achieves approximately 18,000-20,000 images per second on a single HGX H100 server with standard training hyperparameters and data augmentation pipelines, enough to complete full ImageNet training (1.2 million images, 90 epochs) in 90-100 minutes of wall-clock time. More complex pipelines combining detection, segmentation, and tracking for autonomous vehicle perception or industrial inspection can process 300-500 frames of high-resolution imagery per second through the full inference pipeline, enabling real-time decisions in latency-sensitive deployments.
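
The ImageNet figure is straightforward arithmetic: total images processed divided by throughput. This sketch uses the article’s rounded 1.2 million images (the ImageNet-1k training split is about 1.28 million) and ignores evaluation passes, warm-up, and input-pipeline stalls.

```python
def training_minutes(images: int, epochs: int, images_per_second: float) -> float:
    """Wall-clock minutes for `epochs` full passes over `images` at a steady rate."""
    return images * epochs / images_per_second / 60

for rate in (18_000, 20_000):
    print(f"{rate} img/s: {training_minutes(1_200_000, 90, rate):.0f} min")
```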


NVIDIA HGX H200: Memory-Enhanced Platform for Advanced AI Workloads

The NVIDIA HGX H200 platform maintains architectural compatibility with HGX H100 while upgrading GPU memory from 80GB HBM3 to 141GB HBM3e per accelerator, a 76% capacity increase that yields 1.13TB of aggregate GPU memory across the eight-GPU baseboard. HBM3e also raises memory bandwidth to 4.8TB/s per GPU (about 43% higher than H100’s 3.35TB/s), or 38.4TB/s in total, which relieves bottlenecks in workloads whose memory traffic is high relative to their arithmetic. The upgrade is most valuable for inference serving: large language models with extensive key-value caches, context windows beyond 16K-32K tokens, and high concurrent request volumes can run larger batches, evict cached keys and values less often, and achieve lower per-request latency because concurrent inference sessions contend less for GPU memory.
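
Key-value cache size is what usually exhausts GPU memory in long-context serving. For a decoder-only transformer it grows as 2 × layers × KV heads × head dimension × sequence length × batch × bytes per element. The sketch assumes the published Llama 2 70B shape (80 layers, 8 grouped-query KV heads, head dimension 128), an FP16 cache, and FP16 weights split across the eight GPUs. It ignores activations, framework overhead, and fragmentation, so the sequence counts are optimistic upper bounds.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_elem: int = 2) -> int:
    """Bytes of key + value cache; the leading 2 stores both K and V at every layer."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem

# Assumed model shape: Llama 2 70B with an FP16 KV cache.
per_seq = kv_cache_bytes(80, 8, 128, seq_len=32_768, batch=1)
print(f"{per_seq / 2**30:.1f} GiB per 32K-token sequence")

weights_gb = 70e9 * 2 / 1e9  # FP16 weights, ~140 GB
for name, hbm_gb in (("HGX H100", 8 * 80), ("HGX H200", 8 * 141)):
    free_gb = hbm_gb - weights_gb
    print(name, "max 32K-token sequences:", int(free_gb * 1e9 // per_seq))
```

Under these assumptions the H200 baseboard holds roughly twice as many concurrent 32K-token sequences as the H100 baseboard, which is the mechanism behind the inference gains described above.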

The HGX H200’s expanded memory lets training workloads use 30-50% larger per-GPU batch sizes than H100 allows, improving computational utilization and reducing transfers between GPU HBM and slower system memory or NVMe storage tiers. Larger batches also yield lower-variance gradient estimates in stochastic gradient descent, which can shorten convergence in wall-clock terms, a benefit that compounds over the multi-day or multi-week campaigns typical of foundation model development. For models approaching or exceeding 200 billion parameters, the extra capacity reduces (but does not remove) the need for the complex model partitioning, gradient accumulation workarounds, and optimizer-state offloading used to fit within H100’s 80GB per GPU: the full mixed-precision training state of a 200B model exceeds 3TB, so it must still be sharded across servers. Less aggressive memory optimization simplifies training code, lowers the engineering overhead of maintaining specialized infrastructure, and removes failure modes associated with those techniques.
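
Whether a model’s full training state fits on one baseboard can be checked with the per-parameter accounting commonly used for mixed-precision Adam (popularized by the ZeRO paper): 2 bytes of BF16/FP16 weights, 2 bytes of gradients, and 12 bytes of FP32 master weights, momentum, and variance. The sketch excludes activations, so a "fits" result is necessary but not sufficient.

```python
# Mixed-precision Adam: 16-bit weights (2) + 16-bit gradients (2)
# + FP32 master weights, momentum, and variance (4 + 4 + 4).
BYTES_PER_PARAM_ADAM_MIXED = 2 + 2 + 4 + 4 + 4  # = 16

def training_state_gb(params: float) -> float:
    """Weights + gradients + optimizer state in GB, excluding activations."""
    return params * BYTES_PER_PARAM_ADAM_MIXED / 1e9

platforms_gb = {"HGX H100": 8 * 80, "HGX H200": 8 * 141, "HGX B200": 8 * 180}
for params in (70e9, 200e9):
    need = training_state_gb(params)
    fits = [name for name, cap in platforms_gb.items() if cap >= need]
    print(f"{params / 1e9:.0f}B params: {need:,.0f} GB; fits on one baseboard: {fits or 'none'}")
```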

HGX H200 vs HGX H100: Detailed Platform Comparison

| Specification | HGX H100 | HGX H200 | Improvement |
|---|---|---|---|
| GPU Memory per Accelerator | 80GB HBM3 | 141GB HBM3e | +76% capacity |
| Total GPU Memory | 640GB | 1.13TB | +76% capacity |
| Memory Bandwidth per GPU | 3.35 TB/s | 4.8 TB/s | +43% bandwidth |
| Total Memory Bandwidth | 26.8 TB/s | 38.4 TB/s | +43% bandwidth |
| Memory Technology | HBM3 | HBM3e | Enhanced efficiency |
| FP8 Tensor Performance (with sparsity) | 32 PFLOPS | 32 PFLOPS | Equivalent |
| FP16 Tensor Performance (with sparsity) | 16 PFLOPS | 16 PFLOPS | Equivalent |
| NVLink Bandwidth | 7.2 TB/s total | 7.2 TB/s total | Equivalent |
| NVSwitch Configuration | 4× 3rd Gen | 4× 3rd Gen | Equivalent |
| GPU TDP | 700W per GPU | 700W per GPU | Equivalent |
| Total GPU Power | ~5,600W | ~5,600W | Equivalent |
| Cooling Requirements | High-velocity air | High-velocity air | Equivalent |
| Typical Training Performance | Baseline (1.0×) | 1.05-1.08× | Larger batch sizes |
| Inference Throughput (memory-bound) | Baseline (1.0×) | 1.15-1.25× | Reduced cache pressure |
| Optimal Model Size | 10-200B parameters | 100-500B parameters | Extended capacity range |
| Price Premium vs H100 | Baseline | +15-25% | Market dependent |

Organizations evaluating HGX H200 against HGX H100 should run a workload-specific analysis to determine whether the expanded memory justifies the typical 15-25% price premium for H200-based servers. In training-dominated environments where models stay below 100 billion parameters and training code already uses gradient accumulation, activation checkpointing, or other memory optimizations that work within 80GB per GPU, HGX H100 often delivers better price-performance because the training throughput difference between the platforms is small. Conversely, organizations focused on production inference serving, particularly multi-turn conversational interfaces that retain long contexts or real-time recommendation systems processing high-throughput request streams with complex feature engineering pipelines, will find that H200’s larger memory improves inference economics through more requests per second per GPU, shorter latency tails, and simpler deployments with less request-batching and GPU-memory-management logic.
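
The price-performance trade-off reduces to cost per unit of work relative to H100: (1 + price premium) / speedup. The inputs below come from the ranges in the HGX H200 vs HGX H100 comparison table (a midpoint where a single value is used) and cover server acquisition cost only; both platforms draw similar GPU power, so operating cost is left out of this sketch.

```python
def relative_cost_per_throughput(price_premium: float, speedup: float) -> float:
    """Cost per unit of work relative to an H100 baseline (1.0 = parity, < 1.0 = cheaper)."""
    return (1 + price_premium) / speedup

# Training: ~20% premium (midpoint) for a ~1.05-1.08x speedup (midpoint used).
training = relative_cost_per_throughput(0.20, 1.065)
# Memory-bound inference: best and worst ends of the quoted ranges.
inference_best = relative_cost_per_throughput(0.15, 1.25)
inference_worst = relative_cost_per_throughput(0.25, 1.15)
print(f"training: {training:.2f}x, inference: {inference_best:.2f}x to {inference_worst:.2f}x")
```

Training comes out more expensive per unit of work on H200, while inference can land on either side of parity depending on where the premium and speedup fall, which is why the workload-specific analysis matters.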


NVIDIA HGX B200: Blackwell Architecture Revolutionizes GPU Server Performance

The NVIDIA HGX B200 platform represents a fundamental architectural transition from Hopper to Blackwell. Its eight B200 Tensor Core GPUs are fabricated on TSMC’s 4NP process with 208 billion transistors per GPU, about 2.6× H100’s 80 billion, which funds expanded computational resources, enhanced memory subsystems, and sophisticated on-chip interconnects. Each B200 GPU integrates 180GB of HBM3e memory operating at 8TB/s (roughly 2.4× H100’s 3.35TB/s and 1.7× H200’s 4.8TB/s), 18,944 CUDA cores, and 592 fifth-generation Tensor Cores with support for FP4 precision, enabling aggressive quantization that, for many models, does not require complex quantization-aware training workflows or cause significant accuracy degradation. The HGX B200 baseboard delivers aggregate performance of 72 petaFLOPS for FP8 training (2.25× H100) and 144 petaFLOPS for FP4 inference (4.5× H100’s FP8 peak), while fifth-generation NVLink provides 1.8TB/s of bidirectional bandwidth per GPU (double H100’s 900GB/s), aggregating to 14.4TB/s of interconnect bandwidth that reduces communication overhead in distributed training and multi-GPU inference.
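
Per-GPU memory bandwidth follows from interface width multiplied by per-pin data rate. This sketch assumes a per-pin rate of about 8 Gbps and shows that 8 TB/s implies an interface of roughly 8,000 bits, consistent with eight 1,024-bit HBM3e stacks rather than a single 4,096-bit interface.

```python
def bandwidth_tb_s(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Peak memory bandwidth in TB/s: width x per-pin rate, divided by 8 bits per byte."""
    return bus_width_bits * gbps_per_pin / 8 / 1000

def bus_width_bits_for(tb_s: float, gbps_per_pin: float) -> float:
    """Interface width (bits) needed to reach `tb_s` at a given per-pin rate."""
    return tb_s * 1000 * 8 / gbps_per_pin

print(bandwidth_tb_s(4096, 8.0))      # a 4,096-bit interface at 8 Gbps
print(bandwidth_tb_s(8 * 1024, 8.0))  # eight 1,024-bit stacks at 8 Gbps
print(bus_width_bits_for(8.0, 8.0))   # width needed for 8 TB/s
```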

NVIDIA HGX B200 (8-GPU) Platform

| Specification Category | Parameter | Value |
|---|---|---|
| GPU Configuration | GPU Type | 8× NVIDIA B200 Tensor Core GPUs |
| GPU Configuration | GPU Architecture | Blackwell (208 billion transistors per GPU) |
| GPU Configuration | GPU Form Factor | SXM5 module with integrated cooling interface |
| GPU Configuration | Manufacturing Process | TSMC 4NP (4nm process technology) |
| Memory Architecture | Total GPU Memory | 1,440GB HBM3e (180GB per GPU) |
| Memory Architecture | Memory Type | HBM3e (High Bandwidth Memory 3 Enhanced) |
| Memory Architecture | Memory Interface per GPU | 8,192-bit |
| Memory Architecture | Memory Speed | 8 Gbps per pin |
| Memory Architecture | Per-GPU Memory Bandwidth | 8 TB/s |
| Memory Architecture | Aggregate Memory Bandwidth | 64 TB/s (across all 8 GPUs) |

The HGX B200’s second-generation Transformer Engine introduces features optimized for the transformer-based architectures that dominate contemporary AI: enhanced attention acceleration, finer-grained dynamic scaling that adjusts numerical scaling factors during training, and optimized memory access patterns for key-value cache operations. Together these are estimated to deliver 30-50% higher effective throughput than the first-generation Transformer Engine in H100 when training or serving standard transformer models. FP4 support is a particularly significant addition: it doubles peak Tensor Core throughput relative to FP8 while keeping accuracy loss within acceptable thresholds (typically under 2%) for most production applications. In practice this lets organizations roughly double inference serving capacity per GPU, or serve a fixed throughput target with fewer GPUs, improving inference economics across millions of daily requests.
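
FP4 formats supported on Blackwell, such as NVFP4 and MXFP4, pair 4-bit values with block ("microscaling") scale factors: each small block shares a scale, and each value is rounded to the nearest FP4 E2M1 magnitude (0, 0.5, 1, 1.5, 2, 3, 4, or 6). The sketch below only simulates that rounding to show the error it introduces. The 16-value block mirrors NVFP4, but the scale is kept in full precision here (NVFP4 stores it in FP8), no bits are packed, and random Gaussian values stand in for real model weights.

```python
import math
import random

# Magnitudes representable by FP4 E2M1 (1 sign bit, 2 exponent bits, 1 mantissa bit).
E2M1_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)

def quantize_fp4_block(values, block=16):
    """Simulated block-scaled FP4: map each block's max magnitude to 6.0, round to the grid.

    Returns dequantized floats so the rounding error can be measured.
    """
    out = []
    for start in range(0, len(values), block):
        chunk = values[start:start + block]
        amax = max(abs(v) for v in chunk)
        scale = amax / E2M1_GRID[-1] if amax > 0 else 1.0
        for v in chunk:
            # Nearest representable magnitude after scaling; the sign is restored afterwards.
            mag = min(E2M1_GRID, key=lambda g: abs(abs(v) / scale - g))
            out.append(math.copysign(mag * scale, v))
    return out

random.seed(0)
w = [random.gauss(0.0, 1.0) for _ in range(4096)]
wq = quantize_fp4_block(w)
rel_err = math.sqrt(sum((a - b) ** 2 for a, b in zip(w, wq)) / sum(a * a for a in w))
print(f"relative RMS error: {rel_err:.3f}")
```

Per-block scaling keeps outliers in one block from crushing the resolution of every other block, which is the main reason block-scaled FP4 preserves accuracy better than a single per-tensor scale would.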

NVIDIA HGX B200 Complete Platform Specifications

| Component | Specification | Details |
|---|---|---|
| Platform | NVIDIA HGX B200 | 8-GPU Blackwell baseboard |
| GPU Model | 8× NVIDIA B200 | Tensor Core GPUs |
| GPU Architecture | NVIDIA Blackwell | 4nm process technology |
| Transistors per GPU | 208 billion | Enhanced computational density |
| GPU Memory per Accelerator | 180GB HBM3e | High-capacity memory |
| Total GPU Memory | 1.44TB | Aggregate across 8 GPUs |
| Memory Bandwidth per GPU | 8 TB/s | HBM3e technology |
| Total Memory Bandwidth | 64 TB/s | Aggregate bandwidth |
| FP4 Inference Performance | 18 PFLOPS per GPU | 144 PFLOPS total |
| FP8 Training Performance | 9 PFLOPS per GPU | 72 PFLOPS total |
| FP16 Tensor Performance | 4.5 PFLOPS per GPU | 36 PFLOPS total |
| FP32 Performance | 75 TFLOPS per GPU | 600 TFLOPS total |
| FP64 Tensor Core | 37 TFLOPS per GPU | 296 TFLOPS total |
| NVLink Generation | 5th Generation | Advanced interconnect |
| NVLink Bandwidth per GPU | 1.8 TB/s bidirectional | Per-GPU bandwidth |
| Total NVLink Bandwidth | 14.4 TB/s | Aggregate interconnect |
| NVSwitch Configuration | 4× 5th Gen NVSwitch | Enhanced mesh fabric |
| GPU TDP Range | 700-1000W per GPU | Configurable power |
| Total GPU Power | 5,600-8,000W | Configuration dependent |
| Transformer Engine | 2nd Generation | Optimized attention |
| Cooling Options | Air-cooled or liquid-cooled | Deployment flexibility |
| Server Form Factors | 8U air, 5U liquid | OEM implementations |
| PCIe Host Interface | PCIe Gen 6.0 × 16 per GPU | CPU-GPU communication |

FP4, FP8, and FP16 Tensor Core figures are peak values with structured sparsity; dense throughput is roughly half.

HGX B200 Performance Benchmarks and Real-World Training Acceleration

Independent benchmarking across standard AI training workloads indicates that HGX B200-based servers deliver 2.5-3× faster training throughput than HGX H100 systems on large language models including Llama 70B, GPT-175B, and Mixture-of-Experts architectures such as Mixtral 8×7B. The advantage stems from three complementary improvements: 2.25× higher raw FP8 Tensor Core throughput; larger and faster HBM3e memory, which removes bottlenecks that previously constrained batch size selection and gradient accumulation strategies; and fifth-generation NVLink, which doubles inter-GPU bandwidth and so reduces the gradient all-reduce overhead that consumes 15-30% of training time in data-parallel scenarios. On an equal number of servers, a 175-billion-parameter training run that takes 12-14 days on HGX H100 typically completes in 4-6 days on HGX B200, which means faster experimental iteration, accelerated research timelines, fewer GPU-hours consumed, and less researcher time spent waiting between runs.
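
A simple step-time model shows how much of the quoted 2.5-3× training speedup the raw compute and NVLink gains explain on their own. It assumes the baseline training step splits into compute and non-overlapped communication, with compute sped up 2.25× (the FP8 peak ratio) and communication 2× (the NVLink bandwidth ratio); both assumptions are simplifications.

```python
def step_speedup(comm_fraction: float, compute_speedup: float, comm_speedup: float) -> float:
    """Overall speedup when the compute and non-overlapped communication shares
    of a baseline step time improve by different factors (Amdahl-style)."""
    new_time = (1 - comm_fraction) / compute_speedup + comm_fraction / comm_speedup
    return 1 / new_time

# Communication shares taken from the 15-30% range quoted above.
for comm in (0.15, 0.30):
    print(f"comm share {comm:.0%}: {step_speedup(comm, 2.25, 2.0):.2f}x")
```

Under this model the two factors together give a little over 2.2×, so reaching 2.5-3× depends on the memory capacity and bandwidth effects (larger batches, less gradient accumulation) and on software improvements.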

Inference improvements are larger still: HGX B200’s FP4 Tensor Cores enable 12-15× higher throughput than HGX H100 when serving large language models quantized to FP4 (H100 Tensor Cores do not support FP4, so the comparison is against H100’s higher-precision formats). Cloud service providers are reported to see 65-75% lower median per-request latency, 300-400% more requests per second per GPU, and 70-80% lower per-inference operating costs, amortized across millions of daily requests, after migrating inference from H100-based systems to B200 equivalents. These realized gains are smaller than the peak throughput ratio, but they still improve unit economics for AI-powered applications, make sub-100ms latency targets achievable, and can pay back the infrastructure refresh within 12-18 months for high-utilization deployments.
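
Payback period is the extra acquisition cost divided by the monthly operating savings. Every input below is hypothetical, and the sketch ignores financing, depreciation, and changes in utilization.

```python
def payback_months(extra_capex: float, monthly_cost_before: float, cost_reduction: float) -> float:
    """Months until operating savings repay the additional purchase cost."""
    monthly_savings = monthly_cost_before * cost_reduction
    return extra_capex / monthly_savings

# Hypothetical: $200K extra capex, $20K/month current serving cost, 75% cost reduction.
print(f"{payback_months(200_000, 20_000, 0.75):.1f} months")
```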


Platform Comparison: Selecting Optimal HGX Generation for Specific Workloads

Selecting optimal HGX platform generation requires comprehensive analysis balancing multiple factors including specific workload characteristics (training vs inference dominance, model size ranges, batch size requirements, memory access patterns), total cost of ownership considerations (acquisition pricing, power consumption, cooling infrastructure requirements), deployment timeline urgency (current-generation availability vs next-generation wait times), and strategic infrastructure planning (refresh cycle timing, multi-generation fleet management, incremental scaling approaches). This section provides decision frameworks and recommendation matrices guiding platform selection based on common organizational scenarios and workload profiles.

Comprehensive Platform Comparison Matrix

| Specification | HGX H100 | HGX H200 | HGX B200 |
|---|---|---|---|
| Architecture Generation | Hopper (4th-gen Tensor Cores) | Hopper with HBM3e (4th-gen Tensor Cores) | Blackwell (5th-gen Tensor Cores) |
| Process Technology | TSMC 4N | TSMC 4N | TSMC 4NP |
| GPU Memory per Accelerator | 80GB HBM3 | 141GB HBM3e | 180GB HBM3e |
| Total Platform Memory | 640GB | 1.13TB | 1.44TB |
| Memory Bandwidth per GPU | 3.35 TB/s | 4.8 TB/s | 8 TB/s |
| FP8 Training Performance (with sparsity) | 32 PFLOPS | 32 PFLOPS | 72 PFLOPS |
| FP4 Inference Performance (with sparsity) | Not supported | Not supported | 144 PFLOPS |
| NVLink per GPU | 900 GB/s | 900 GB/s | 1.8 TB/s |
| Total NVLink Bandwidth | 7.2 TB/s | 7.2 TB/s | 14.4 TB/s |
| NVLink Generation | 4th Gen | 4th Gen | 5th Gen |
| Transformer Engine | 1st Gen | 1st Gen | 2nd Gen |
| GPU Power (8× TDP) | 5,600W | 5,600W | 5,600-8,000W |
| Cooling Options | Air-cooled | Air-cooled | Air or liquid |
| Training Speed (relative) | 1.0× baseline | 1.05-1.08× | 2.5-3.0× |
| Inference Speed (relative) | 1.0× baseline | 1.15-1.25× | 12-15× |
| Optimal Model Size Range | 10-200B params | 100-500B params | 100B-1T params |
| Market Availability | Widely available | Good availability | Ramping production |
| Estimated Server Price | $250K-$350K | $300K-$400K | $500K-$700K |
| Power Efficiency (FLOPS/W) | Baseline (1.0×) | 1.0× | 1.8-2.2× |
| Best For | General AI training | Memory-intensive workloads | Latest performance |

Workload-Specific Platform Recommendations

Large Language Model Training (10-100B parameters):

- Recommended: HGX H100 – Delivers strong training performance at optimal price point for models within this parameter range. Memory capacity sufficient for batch sizes yielding excellent GPU utilization. Widely available with mature software stack and extensive deployment experience across industry.

- Alternative: HGX H200 – Consider if the required batch sizes do not fit within H100’s 80GB per GPU, or if the training pipeline stores extensive intermediate activations.

- Future-proof: HGX B200 – Justifiable for organizations planning sustained LLM training operations over 3-5 years where faster training cycles provide compounding productivity advantages.

Large Language Model Training (100B-500B parameters):

- Recommended: HGX H200 – Expanded memory capacity critical for fitting larger models and accommodating sophisticated training techniques including mixture-of-experts, long-context training, and multi-modal fusion architectures combining vision and language.

- High-performance: HGX B200 – Delivers substantially faster training (2.5-3×) justifying premium pricing for organizations training multiple large models annually or requiring rapid experimental iteration on frontier-scale architectures.

Inference Serving (High-throughput production deployments):

- Cost-effective: HGX H100 – Adequate for inference workloads with moderate throughput requirements (<1000 requests/second per server) and standard context lengths (2K-8K tokens).

- Memory-optimized: HGX H200 – Best for inference serving requiring extensive key-value caching, long-context support (16K-32K tokens), or high concurrent request volumes (>2000 simultaneous sessions per server).

- Maximum throughput: HGX B200 – Delivers 12-15× higher inference throughput through FP4 quantization, reducing per-request costs by 70-80% and enabling previously uneconomical inference applications. Optimal for hyperscale deployments processing millions of daily requests.

Computer Vision and Multi-Modal AI:

- Recommended: HGX H100 – Strong FP16/TF32 performance for vision workloads, sufficient memory for high-resolution imagery processing, proven deployment track record across autonomous vehicle, medical imaging, and industrial inspection applications.

- Enhanced: HGX H200 – Consider for video understanding applications processing long temporal sequences, multi-modal training combining vision and language modalities, or 3D reconstruction applications with high memory requirements.

- Advanced: HGX B200 – Optimal for cutting-edge research combining diffusion models, transformer-based vision architectures, and multi-modal fusion requiring maximum computational throughput and memory capacity.


GPU Cluster Architecture: Networking, Storage, and Infrastructure Considerations

Building production-scale GPU clusters with HGX-based servers requires comprehensive infrastructure planning addressing high-bandwidth networking interconnects, high-performance storage systems, power distribution and cooling infrastructure, and management software orchestrating workload scheduling and resource allocation across potentially hundreds or thousands of GPUs. This section examines critical infrastructure components and best practices for deploying reliable, high-performance GPU clusters supporting diverse AI workloads.

High-Bandwidth Networking Infrastructure

InfiniBand networking represents the gold standard for GPU cluster interconnects: NVIDIA Quantum InfiniBand switches and ConnectX network adapters deliver 400Gb/s (NDR) or 800Gb/s (XDR) per port with sub-microsecond switch latency, which matters for distributed training, where frequent gradient synchronization dominates network traffic. InfiniBand’s Remote Direct Memory Access (RDMA), combined with GPUDirect RDMA, lets network adapters read and write GPU memory on remote servers without CPU involvement, reducing communication overhead and improving scaling efficiency when training spans multiple HGX servers. Organizations building GPU clusters with 16 or more servers should strongly consider InfiniBand given its measurable advantages over Ethernet: distributed training scaling efficiency typically reaches 90-95% with InfiniBand versus 70-85% with 200GbE Ethernet when training across 8-16 servers, translating into proportionally faster training completion and better GPU utilization across the cluster.
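
Scaling efficiency translates directly into wall-clock time: a job that would take T days on one server takes T / (servers × efficiency) on many. The sketch uses the midpoints of the quoted efficiency ranges and a hypothetical job sized at 960 single-server days.

```python
def wall_clock_days(single_server_days: float, servers: int, scaling_efficiency: float) -> float:
    """Job duration on `servers` nodes, given the fraction of linear speedup achieved."""
    return single_server_days / (servers * scaling_efficiency)

job_days = 960.0  # hypothetical job size in single-server days
for fabric, eff in (("InfiniBand", 0.925), ("200GbE RoCE", 0.775)):
    print(f"{fabric}: {wall_clock_days(job_days, 16, eff):.1f} days on 16 servers")
```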

Ethernet alternatives including 200GbE and 400GbE using RoCE (RDMA over Converged Ethernet) provide viable options for smaller clusters (4-16 servers) or organizations with existing Ethernet infrastructure investments and operational expertise managing Ethernet networks. Modern Ethernet implementations with priority flow control (PFC), explicit congestion notification (ECN), and quality-of-service (QoS) configurations can approach InfiniBand performance characteristics for many workloads, particularly those with larger model sizes where computation time dominates communication overhead. Cost considerations favor Ethernet in smaller deployments where price differences between InfiniBand and Ethernet switching infrastructure represent significant portions of total cluster cost, though these advantages diminish at larger scales where InfiniBand’s superior scaling efficiency and lower latency deliver measurable performance benefits justifying premium networking costs.

High-Performance Storage Architecture

GPU training workloads generate substantial storage I/O demands spanning initial dataset loading, intermediate checkpoint saving during training, final model artifact storage, and logging/telemetry data capture across potentially hundreds of training jobs executing concurrently within shared cluster infrastructure. Parallel file systems including Lustre, BeeGFS, and WekaFS deliver high-throughput, high-capacity storage accessible simultaneously from all cluster nodes, with aggregate sequential read bandwidth typically reaching 50-200GB/s for medium-scale deployments (10-50 servers) and exceeding 500GB/s-1TB/s for large-scale installations (100+ servers). Organizations should provision storage capacity accommodating 5-10× the size of primary training datasets to account for dataset versions, augmentation pipelines generating derivative datasets, checkpoint snapshots consuming 2-5× model size per checkpoint, and historical training artifacts retained for reproducibility and compliance requirements.
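A minimal sizing sketch that applies the rules of thumb above: shared capacity as a multiple of the primary dataset, checkpoint size as a multiple of model size, and checkpoint write time at a given aggregate bandwidth. The function name and defaults are illustrative assumptions (defaults take the conservative upper end of each range), and the write-time estimate ignores metadata overhead and contention from concurrent jobs.

```python
from dataclasses import dataclass


@dataclass
class StoragePlan:
    shared_capacity_tb: float   # parallel file system capacity to provision
    checkpoint_size_tb: float   # size of one checkpoint
    checkpoint_write_s: float   # time to write one checkpoint at full bandwidth


def plan_storage(dataset_tb: float, model_tb: float, aggregate_gb_s: float,
                 dataset_multiplier: float = 10.0,
                 checkpoint_multiplier: float = 5.0) -> StoragePlan:
    """Apply the 5-10x dataset and 2-5x model-size-per-checkpoint rules of thumb."""
    checkpoint_tb = model_tb * checkpoint_multiplier
    write_seconds = checkpoint_tb * 1000 / aggregate_gb_s  # 1 TB = 1000 GB
    return StoragePlan(dataset_tb * dataset_multiplier, checkpoint_tb, write_seconds)


if __name__ == "__main__":
    # Hypothetical: 50 TB dataset, 70B-parameter model in BF16 (~0.14 TB of weights),
    # on a parallel file system with 100 GB/s aggregate bandwidth.
    plan = plan_storage(dataset_tb=50, model_tb=0.14, aggregate_gb_s=100)
    print(f"{plan.shared_capacity_tb:.0f} TB shared, "
          f"{plan.checkpoint_size_tb:.2f} TB/checkpoint, "
          f"{plan.checkpoint_write_s:.1f} s to write")
```

For this hypothetical case the sketch suggests 500TB of shared capacity and a 0.7TB checkpoint that takes about 7 seconds to write at full aggregate bandwidth; in practice, retaining many checkpoints per job is what drives capacity toward the upper multiplier.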

NVMe-based local storage within individual GPU servers provides complementary high-IOPS capacity for staging frequently accessed datasets, accelerating random-access data patterns, and isolating performance-critical workloads from shared storage contention. Server configurations with 8-16× 7.68TB U.2 NVMe SSDs (roughly 60-120TB of local capacity) can cache full datasets for many training scenarios, removing network storage bandwidth as a potential bottleneck and reducing the bandwidth the shared storage tier must provide. Organizations should implement automated data orchestration that tiers data between local NVMe storage, shared parallel file systems, and archival object storage, keeping frequently accessed datasets cached locally while infrequently used data migrates to cost-effective archival tiers.
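The tiering policy described above can be approximated with a simple greedy rule. This is a hypothetical sketch, not a feature of any particular orchestration product: the `Dataset` fields, the 90-day archive threshold, and the dataset names are all assumptions. Local capacity in the example uses the low end of the configuration above (8 × 7.68TB = 61.44TB).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Dataset:
    name: str
    size_tb: float
    days_since_access: int


def assign_tiers(datasets: list[Dataset], local_nvme_tb: float,
                 archive_after_days: int = 90) -> dict[str, str]:
    """Greedy tiering: the most recently used datasets fill local NVMe first,
    datasets that do not fit go to the shared parallel file system, and anything
    idle longer than `archive_after_days` goes to archival object storage."""
    placement: dict[str, str] = {}
    free_local = local_nvme_tb
    for ds in sorted(datasets, key=lambda d: d.days_since_access):
        if ds.days_since_access > archive_after_days:
            placement[ds.name] = "archive"
        elif ds.size_tb <= free_local:
            placement[ds.name] = "local_nvme"
            free_local -= ds.size_tb
        else:
            placement[ds.name] = "parallel_fs"
    return placement


if __name__ == "__main__":
    local_capacity = 8 * 7.68  # eight 7.68 TB U.2 drives
    catalog = [  # hypothetical datasets
        Dataset("tokens-v3", 40.0, days_since_access=1),
        Dataset("images-v2", 30.0, days_since_access=3),
        Dataset("tokens-v1", 25.0, days_since_access=200),
    ]
    print(assign_tiers(catalog, local_capacity))
```

Here the freshest dataset is cached locally, the second no longer fits in the remaining ~21TB and stays on the parallel file system, and the stale one is archived. A production system would also weigh access frequency and re-evaluate placement as jobs start and finish.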

Total Cost of Ownership Analysis: Multi-Year Economic Modeling

Comprehensive total cost of ownership (TCO) analysis for GPU cluster infrastructure must incorporate acquisition costs, power consumption, cooling infrastructure, networking equipment, storage systems, facility space utilization, software licensing, support contracts, and opportunity costs associated with training time differences impacting researcher productivity and time-to-market for AI-powered products. This section presents financial modeling frameworks and representative TCO scenarios guiding infrastructure investment decisions.

Five-Year TCO Comparison: 64-GPU Cluster Scenario

Scenario Parameters: An organization operates a 64-GPU cluster (8 HGX servers) that trains large language models continuously at 70% average utilization, with data center electricity at $0.12/kWh, 24/7 enterprise support, 400Gb/s InfiniBand networking, and a parallel file system providing 100GB/s aggregate bandwidth.

| Cost Category | HGX H100 Cluster | HGX H200 Cluster | HGX B200 Cluster |
|---|---|---|---|
| Server Hardware (8 systems) | $2.4M | $2.8M | $5.2M |
| Networking (IB switches, adapters) | $400K | $400K | $450K |
| Storage (shared + local NVMe) | $800K | $800K | $900K |
| Power (5 yr, 70% util., $0.12/kWh) | $1.18M | $1.18M | $1.36M |
| Cooling Infrastructure | $500K | $500K | $650K |
| Data Center Space (5-yr lease, 8 racks) | $360K | $360K | $360K |
| Software & Support (5 yr) | $720K | $840K | $1.56M |
| Maintenance & Operations | $300K | $320K | $400K |
| Total 5-Year TCO | $6.66M | $7.20M | $10.88M |
| Training Capacity (LLM training jobs over 5 yr) | 280 | 300 | 750 |
| TCO per Training Job | $23,786 | $24,000 | $14,507 |
| Effective Cost per GPU-Hour | $2.80 | $2.95 | $1.80 |
| Performance per Dollar Invested | 1.0× (baseline) | 1.04× | 2.1× |
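The totals and per-job figures in the table can be reproduced with a few lines of arithmetic. This sketch takes the table's category costs and job counts as given inputs; it does not model how the power, capacity, or per-GPU-hour figures were derived.

```python
# Category costs copied from the five-year, 64-GPU table (USD), in row order:
# servers, networking, storage, power, cooling, space, software/support, maintenance.
COSTS_USD = {
    "H100": [2.4e6, 400e3, 800e3, 1.18e6, 500e3, 360e3, 720e3, 300e3],
    "H200": [2.8e6, 400e3, 800e3, 1.18e6, 500e3, 360e3, 840e3, 320e3],
    "B200": [5.2e6, 450e3, 900e3, 1.36e6, 650e3, 360e3, 1.56e6, 400e3],
}
# Training-capacity row: LLM training jobs completed over five years.
JOBS_OVER_5_YEARS = {"H100": 280, "H200": 300, "B200": 750}


def total_tco(platform: str) -> float:
    return sum(COSTS_USD[platform])


def cost_per_job(platform: str) -> float:
    return total_tco(platform) / JOBS_OVER_5_YEARS[platform]


if __name__ == "__main__":
    for platform in COSTS_USD:
        print(f"{platform}: total ${total_tco(platform) / 1e6:.2f}M, "
              f"${cost_per_job(platform):,.0f} per job")
    saving = 1 - cost_per_job("B200") / cost_per_job("H100")
    print(f"B200 cost per job vs. H100: {saving:.0%} lower")
```

Running it reproduces the table's totals and per-job costs; the B200 per-job saving versus H100 comes out at about 39%, which the text rounds to 40%.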

The analysis reveals counterintuitive economics: the HGX B200's substantially higher acquisition cost ($5.2M vs. $2.4M) is offset by much higher computational throughput (2.5-3× faster training), resulting in roughly 40% lower cost per completed training job when amortized across high-utilization scenarios. Organizations conducting hundreds of training runs annually find that the B200's acquisition premium is recovered within 18-24 months through a combination of faster training completion (reducing opportunity costs and accelerating time-to-market), better energy efficiency (lower power per FLOP), and a longer useful lifespan, since its architectural headroom accommodates workload growth over multi-year deployment cycles.

Conversely, organizations with more modest workload volumes (50-150 training jobs annually), uncertain long-term AI strategy commitments, or capital budget constraints limiting initial infrastructure investments often find HGX H100 or H200 platforms provide superior cost-effectiveness given lower capital requirements ($2.4M-$2.8M vs $5.2M), proven deployment track record minimizing integration risks, and ability to deploy incrementally as workload demands materialize rather than committing immediately to maximum-capacity infrastructure potentially exceeding near-term utilization requirements.

Frequently Asked Questions (FAQ)

1. What is the NVIDIA HGX platform and how does it differ from DGX systems?

The NVIDIA HGX platform is a standardized baseboard design containing 4 or 8 GPUs with NVLink interconnects, power delivery, and thermal interfaces that server OEMs integrate into their proprietary server chassis designs. HGX enables multiple vendors (Supermicro, Dell, HPE, Lenovo, ASUS, etc.) to build GPU servers with consistent performance characteristics and NVIDIA validation. DGX systems are complete turnkey appliances designed, manufactured, and supported exclusively by NVIDIA, featuring HGX baseboards integrated into NVIDIA’s proprietary chassis with pre-configured software stacks, comprehensive support, and validated performance guarantees. Organizations seeking maximum flexibility, vendor choice, and customization capabilities typically select HGX-based servers from OEM partners, while those prioritizing simplified procurement, unified support, and turnkey deployment often prefer DGX appliances despite higher acquisition costs.

2. Can I mix different HGX generations (H100, H200, B200) in the same GPU cluster?

Yes, heterogeneous clusters combining multiple HGX generations are technically feasible and supported by NVIDIA’s NCCL (collective communications library) and compatible networking infrastructure. However, performance will be constrained by the slowest component in distributed training scenarios—placing H100 and B200 servers in the same training job results in B200 GPUs idling while H100 systems complete slower operations during gradient synchronization phases. Optimal deployment strategy: dedicate each training job to uniform hardware generation, utilize latest-generation systems (B200) for interactive development requiring fast iteration cycles, and relegate older hardware (H100/H200) to long-running batch training jobs, inference serving, or non-critical workloads where throughput advantages are less critical. Cluster management software should implement resource scheduling policies ensuring workload placement on appropriate hardware generations based on job characteristics and priority levels.
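The "one generation per job" rule above can be expressed as a small placement function. This is a hypothetical sketch of the policy only, not the API of Slurm, Kubernetes, or any other scheduler; the hostnames and generation labels are illustrative.

```python
from __future__ import annotations


def place_job(free_nodes: dict[str, str], servers_needed: int,
              preferred_generations: list[str]) -> list[str] | None:
    """Pick `servers_needed` free nodes that all share one HGX generation.

    free_nodes maps a free node's hostname to its generation label.
    preferred_generations lists acceptable generations in priority order.
    Returns None rather than mixing generations when none has enough nodes.
    """
    for generation in preferred_generations:
        candidates = sorted(host for host, gen in free_nodes.items() if gen == generation)
        if len(candidates) >= servers_needed:
            return candidates[:servers_needed]
    return None


if __name__ == "__main__":
    free = {  # hypothetical free nodes
        "b200-01": "B200", "b200-02": "B200",
        "h100-01": "H100", "h100-02": "H100", "h100-03": "H100",
    }
    # Interactive job prefers B200 and fits on the two free B200 nodes.
    print(place_job(free, 2, ["B200", "H100"]))
    # A 3-server batch job falls back to H100 instead of mixing generations.
    print(place_job(free, 3, ["B200", "H100"]))
```

Returning None instead of a mixed allocation keeps a job queued until a uniform set of nodes frees up, which avoids the straggler effect described above at the cost of some queue time.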

3. How much networking bandwidth do I need for distributed training across multiple HGX servers?

Networking bandwidth requirements scale with cluster size, model architecture, and training framework configuration. General guidance: 200-400Gb/s per server for small clusters (2-8 servers), 400-800Gb/s per server for medium deployments (8-32 servers), and multi-rail configurations with 800Gb/s-1.6Tb/s aggregate per server for large-scale clusters (32+ servers). Large language model training with frequent gradient synchronization benefits most from high-bandwidth networking, while computer vision training with infrequent synchronization points, or with parameter-server architectures, tolerates more modest bandwidth provisioning. Organizations should benchmark the networking sensitivity of representative workloads before finalizing cluster networking architecture: some training scenarios achieve 95% scaling efficiency with 200GbE, while others require 800Gb/s InfiniBand to maintain acceptable performance across distributed nodes.
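A common first-order way to see why gradient synchronization is bandwidth-sensitive is the ring all-reduce cost model: each of N participants sends and receives 2(N-1)/N times the gradient size per step. The sketch below applies that model under simplifying assumptions: each server is treated as one ring participant with the stated per-server bandwidth, and latency, NCCL's hierarchical and multi-rail algorithms, and overlap with backward computation are ignored. The 7B-parameter model is a hypothetical example.

```python
def ring_allreduce_seconds(gradient_bytes: float, participants: int,
                           link_gbps: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each participant transfers 2 * (N - 1) / N * S bytes, where S is the
    gradient size, over a link of `link_gbps` gigabits per second.
    """
    if participants < 2:
        return 0.0
    bytes_per_second = link_gbps * 1e9 / 8  # Gb/s -> bytes/s
    volume = 2 * (participants - 1) / participants * gradient_bytes
    return volume / bytes_per_second


if __name__ == "__main__":
    grad_bytes = 7e9 * 2  # hypothetical 7B-parameter model, BF16 gradients
    for gbps in (200, 400):
        t = ring_allreduce_seconds(grad_bytes, participants=16, link_gbps=gbps)
        print(f"{gbps} Gb/s per server: {t:.3f} s per gradient all-reduce")
```

Under these assumptions a 16-server all-reduce of 14GB of gradients takes about 1.05 seconds at 200Gb/s and about 0.525 seconds at 400Gb/s per step. Whether that time matters depends on how much of it overlaps with compute, which is why benchmarking representative workloads is recommended above.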

4. What are the power and cooling requirements for HGX-based GPU servers?

Each HGX H100 or H200 8-GPU baseboard draws approximately 5,600-6,000 watts at maximum computational load (8× 700W GPUs); CPUs, memory, NICs, storage, and fans add to this, so power feeds should be sized against the OEM's rated maximum system power rather than GPU TDP alone. Typical provisioning uses dual 30A 208V circuits or a single 60A circuit, with appropriate derating for continuous operation. HGX B200 GPU power ranges from 5,600 to 8,000 watts depending on configuration (700W vs. 1000W TDP variants). Cooling requirements include a cold-aisle supply air temperature of 18-22°C; enough airflow to reject the heat load (about 3,412 BTU/hr per kilowatt, or roughly 19,000-27,000 BTU/hr for 5.6-8kW per server); hot-aisle containment to maximize cooling efficiency; and redundant cooling capacity to maintain operations during maintenance or equipment failures. Organizations deploying dense configurations (3-4 HGX servers per 42U rack) should carefully evaluate data center cooling capacity, which may require supplemental row-based cooling or liquid cooling infrastructure for maximum-density scenarios.
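The power and heat figures above come from two standard conversions: 1 watt of continuous load is about 3.412 BTU/hr of heat, and a branch circuit's usable continuous capacity is volts × amps (× √3 for three-phase) times a derating factor. The sketch assumes the 80% continuous-load derating common in North American practice; confirm the factor against local electrical code.

```python
WATTS_TO_BTU_PER_HR = 3.412  # 1 W of continuous load ~= 3.412 BTU/hr of heat


def heat_load_btu_hr(watts: float) -> float:
    """Heat that cooling must reject for a given continuous electrical load."""
    return watts * WATTS_TO_BTU_PER_HR


def usable_circuit_watts(volts: float, amps: float, derating: float = 0.8,
                         three_phase: bool = False) -> float:
    """Continuous power a branch circuit can carry after derating.

    The 0.8 default is an assumption reflecting common North American practice
    for continuous loads; use the factor your local code requires.
    """
    phase_factor = 3 ** 0.5 if three_phase else 1.0
    return volts * amps * phase_factor * derating


if __name__ == "__main__":
    for load_w in (5600, 8000):
        print(f"{load_w} W -> {heat_load_btu_hr(load_w):,.0f} BTU/hr")
    single = usable_circuit_watts(208, 30)
    print(f"208V/30A single-phase: {single:,.0f} W usable, two circuits: {2 * single:,.0f} W")
    print(f"208V/60A single-phase: {usable_circuit_watts(208, 60):,.0f} W usable")
```

This gives about 19,100 BTU/hr at 5,600W and about 27,300 BTU/hr at 8,000W. One derated 208V/30A single-phase circuit carries about 4,992W, so dual 30A circuits or a single 60A circuit provide roughly 10kW, which leaves headroom above the GPU load for CPUs, NICs, and fans.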

5. Which HGX platform should I choose for inference serving workloads?

Platform selection for inference serving depends on throughput requirements, latency targets, and model characteristics. HGX H100 suffices for moderate-throughput applications (<1,000 requests/second per server) with standard context lengths and latency budgets above 100ms. HGX H200 is optimal for high-throughput serving (>2,000 requests/second), long-context applications (16K-32K tokens), or scenarios requiring extensive key-value caching, where its expanded memory capacity directly improves serving economics. HGX B200 delivers maximum throughput (12-15× faster than H100) through FP4 quantization, making it ideal for hyperscale deployments processing millions of daily requests, where per-inference cost reductions of 70-80% justify premium acquisition pricing. Organizations should build detailed cost models that combine acquisition cost, power consumption, and throughput capacity to determine the total cost per million inferences for each platform option.
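The cost-per-million-inferences comparison reduces to dividing a server's all-in hourly cost by its sustained hourly request throughput. In this sketch the hourly cost is an input that should already include amortized hardware, power, cooling, and support; the $40/hour, $60/hour, and request rates in the example are hypothetical.

```python
def cost_per_million_requests(server_cost_per_hour: float,
                              requests_per_second: float) -> float:
    """Serving cost per million requests for one server at sustained throughput."""
    if requests_per_second <= 0:
        raise ValueError("throughput must be positive")
    requests_per_hour = requests_per_second * 3600
    return server_cost_per_hour / requests_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs for two platform options.
    options = {"option A": (40.0, 1000), "option B": (60.0, 3000)}
    for name, (hourly_cost, rps) in options.items():
        print(f"{name}: ${cost_per_million_requests(hourly_cost, rps):.2f} per million requests")
```

With these placeholder numbers, option A costs about $11.11 per million requests and option B about $5.56: a 50% higher hourly cost is more than offset by 3× the throughput. Real comparisons should use throughput measured at the target latency, not peak throughput.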

6. How does liquid cooling compare to air cooling for HGX B200 deployments?

Air-cooled HGX B200 implementations utilize high-velocity forced-air cooling similar to H100/H200 deployments, requiring robust data center cooling infrastructure but leveraging familiar operational procedures and existing facility capabilities. Liquid-cooled HGX B200 implementations (available in select OEM configurations) deliver superior thermal performance enabling higher sustained boost clocks, reduced acoustic output valuable for edge deployments or office-adjacent installations, and potential for higher-TDP GPU configurations (1000W vs 700W variants). Liquid cooling requires facility water loop integration, rear-door heat exchangers or direct-to-chip cold plates, leak detection systems, and specialized maintenance procedures. Organizations with existing liquid cooling infrastructure or deploying high-density configurations (>4 servers per rack) should evaluate liquid cooling given improved thermal performance and density advantages; those with standard data center environments and moderate density requirements typically prefer air cooling’s operational simplicity.

7. Can HGX platforms support AMD EPYC or Intel Xeon processors, or are they CPU-agnostic?

HGX baseboards are CPU-agnostic, supporting both AMD EPYC and Intel Xeon processors depending on the OEM server implementation. Server vendors typically offer HGX-based systems with a choice of AMD EPYC (9004/9005 series) or Intel Xeon (Sapphire Rapids, Emerald Rapids) processors, selected based on workload characteristics, existing infrastructure standardization, software compatibility requirements, and procurement relationships. AMD EPYC processors offer advantages including higher PCIe lane counts (beneficial for NVMe storage and networking), higher memory bandwidth (valuable for CPU-intensive preprocessing), and generally better performance per watt. Intel Xeon processors offer broader ecosystem support, extensive validation with enterprise software stacks, and Advanced Matrix Extensions (AMX), which accelerate BF16 and INT8 matrix operations for CPU-side AI workloads. Performance differences between well-configured AMD and Intel systems typically remain within 5-10% for most AI training and inference workloads, where GPU compute dominates total execution time.

8. What software stack and frameworks are optimized for HGX platforms?

HGX platforms support a comprehensive software ecosystem, including NVIDIA CUDA (parallel computing platform), NVIDIA AI Enterprise (enterprise software suite), and optimized containers for PyTorch, TensorFlow, JAX, and other popular machine learning frameworks. The NVIDIA NGC catalog provides pre-optimized containers, model architectures, and training scripts validated for HGX platforms, substantially reducing deployment time and framework configuration effort. Cluster orchestration options include Kubernetes with the NVIDIA GPU Operator, Slurm for HPC-style batch scheduling, and specialized ML platforms such as Run:AI, Determined AI, and Domino Data Lab, which add abstractions for multi-user GPU sharing, experiment tracking, and resource management. Organizations should evaluate software stack requirements during platform selection, ensuring that critical frameworks and tools have validated support for the target HGX generation and for any specialized features their workloads require (FP4 quantization on B200, extended memory contexts on H200).
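On Kubernetes, GPUs are requested through the `nvidia.com/gpu` extended resource advertised by the NVIDIA device plugin, which the GPU Operator deploys. The sketch below builds a minimal Pod manifest as JSON (which `kubectl apply -f -` accepts) for a whole-server smoke test. The pod name is hypothetical, and the NGC image tag is only an example; choose a current release from the NGC catalog.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 8) -> dict:
    """Minimal Pod manifest that requests whole GPUs and runs nvidia-smi.

    GPUs are set under limits; for extended resources Kubernetes uses the
    limit as the request when no request is given.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [{
                "name": "smoke-test",
                "image": image,
                "command": ["nvidia-smi"],
                "resources": {"limits": {"nvidia.com/gpu": gpus}},
            }],
        },
    }


if __name__ == "__main__":
    # Hypothetical pod name; example image tag from the NGC PyTorch repository.
    manifest = gpu_pod_manifest("hgx-smoke-test", "nvcr.io/nvidia/pytorch:24.05-py3")
    print(json.dumps(manifest, indent=2))  # e.g. pipe into: kubectl apply -f -
```

Requesting all eight GPUs pins the pod to a single HGX node, which is a quick way to confirm that the device plugin sees every GPU before scheduling real training jobs.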

9. How long is the typical deployment timeline from purchase order to production operation?

Deployment timelines vary based on cluster scale, facility readiness, and integration complexity. Small deployments (1-4 servers): 6-10 weeks including 2-3 weeks hardware manufacturing and delivery, 1-2 weeks facility installation and network configuration, 1-3 weeks software stack deployment and validation, 1-2 weeks user training and workload migration. Medium deployments (8-32 servers): 10-16 weeks including extended manufacturing lead times for quantity orders, infrastructure preparation (power, cooling, networking), phased rollout strategies minimizing disruption, and comprehensive acceptance testing validating cluster performance at scale. Large deployments (32+ servers): 16-24+ weeks incorporating facility infrastructure upgrades (electrical service, cooling capacity), custom networking designs, specialized software integrations, and extended burn-in periods validating reliability before production cutover. Organizations should begin infrastructure planning 6-12 months ahead of desired operational dates, incorporating lead time buffers for unexpected delays and coordination complexity across multiple vendor teams.

10. What is the expected useful lifespan and refresh cycle for HGX-based GPU servers?

Typical GPU server useful lifespan is 3-5 years, depending on workload evolution, growth in performance requirements, and organizational refresh policies. Organizations often implement tiered refresh strategies: deploying latest-generation hardware (currently B200) for performance-critical research and development workloads requiring maximum throughput; retaining previous-generation systems (H200, H100) for stable production workloads, batch processing, and inference serving where performance requirements remain static; and eventually repurposing the oldest equipment for development environments, testing infrastructure, or secondary workloads. Financial considerations favor 3-4 year refresh cycles, which capture architectural improvements delivering 2-3× performance increases per generation and avoid extended support costs for aging hardware; depreciation should be modeled alongside (in the US, computer equipment is generally 5-year MACRS property, although bonus depreciation can front-load deductions). Organizations should establish formal technology refresh roadmaps covering budget planning, workload migration strategies, and equipment disposition policies (trade-in, resale, internal repurposing) that maximize the residual value of retired infrastructure.

“For most inference-heavy workloads, the HGX H200 is the sweet spot. The jump to 141GB memory allows us to maintain larger batch sizes and reduce cache eviction, which typically boosts our tokens-per-second significantly more than raw compute upgrades would.” — Lead Systems Engineer

“While the HGX B200 comes with a premium price tag, the ROI becomes clear in large-scale training. Cutting a 14-day training run down to 5 days fundamentally changes our research velocity and reduces the effective cost per GPU-hour.” — Director of HPC Infrastructure

“Don’t underestimate the infrastructure requirements for Blackwell. Moving from 5.6kW to 8kW per server often requires a rethink of rack density and cooling strategies, even if you stay air-cooled.” — Data Center Operations Lead

Last updated: December 2025