-

15.36TB SSD NVMe Palm Disk Unit (7") USD5,300

-

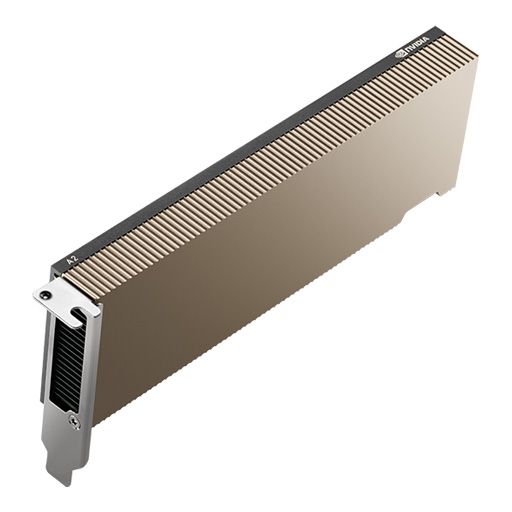

NVIDIA A2 Tensor Core GPU: Entry-Level AI Acceleration for Edge Computing USD1,900

-

NVIDIA A30 Tensor Core GPU: Versatile AI Inference and Mainstream Enterprise Computing

Rated 4.67 out of 5USD6,530 -

H3C UniServer R5300 G6: The Definitive 4U AI Server for Enterprise Workloads USD35,000

-

NVIDIA H200 Tensor Core GPU

USD35,000Original price was: USD35,000.USD31,000Current price is: USD31,000. -

NVIDIA A100 80GB Tensor Core GPU USD15,000

Products Mentioned in This Article

NVIDIA Quantum-2 QM9700USD21,000

NVIDIA H100 NVL GPU

USD33,000Original price was: USD33,000.USD30,500Current price is: USD30,500.NVIDIA HGX B200 (8-GPU) PlatformUSD390,000

HGX H100 Optimized X13 8U 8GPU Server: The Ultimate AI and HPC Powerhouse for Exascale ComputingUSD300,000

HGX H200 Optimized X13 8U 8GPU ServerUSD330,000

InfiniBand Switch Guide: NVIDIA Quantum-2 QM9700 vs QM9790

In the rapidly evolving landscape of artificial intelligence and high-performance computing, network infrastructure has become the backbone of breakthrough innovations. The demand for ultra-fast, low-latency networking solutions has never been more critical as organizations scale their AI workloads to unprecedented levels. NVIDIA’s Quantum-2 InfiniBand platform represents the pinnacle of data center networking technology, delivering 400Gb/s throughput that enables AI researchers and data scientists to tackle the world’s most complex computational challenges.

This comprehensive guide examines two flagship models in NVIDIA’s Quantum-2 lineup: the QM9700 and QM9790 InfiniBand switches. Both switches deliver groundbreaking performance with 64 ports of NDR 400Gb/s connectivity in a compact 1U chassis, yet they serve distinct purposes within modern AI infrastructure. Understanding the differences between these models is essential for architects designing next-generation AI clusters, HGX server deployments, and large-scale supercomputing environments.

NVIDIA Quantum-2 QM9700: The Managed InfiniBand Powerhouse

Overview and Key Features

The NVIDIA Quantum-2 QM9700 represents the managed variant of NVIDIA’s cutting-edge InfiniBand switching platform. As an internally managed switch, the QM9700 incorporates sophisticated on-board management capabilities that streamline deployment and operation in enterprise and research environments.

The QM9700 delivers industry-leading specifications that set new benchmarks for data center networking performance. With 64 ports of 400Gb/s InfiniBand connectivity packed into a single 1U chassis, this switch achieves an aggregated bidirectional throughput of 51.2 Tb/s with a remarkable packet processing capacity of 66.5 billion packets per second (BPPS).
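The headline throughput figures follow directly from the port count and the per-port speed. A minimal Python sketch of that arithmetic, where the function name is illustrative and not part of any NVIDIA tooling:

```python
# Sanity-check the QM9700 capacity figures from port count and port speed.
PORTS = 64              # NDR ports per switch (from the text)
PORT_SPEED_GBPS = 400   # per-port speed in Gb/s (from the text)


def switching_capacity_tbps(ports: int, speed_gbps: int) -> float:
    """Capacity in one direction, in Tb/s."""
    return ports * speed_gbps / 1000


unidirectional = switching_capacity_tbps(PORTS, PORT_SPEED_GBPS)
bidirectional = 2 * unidirectional  # every port sends and receives at once

print(f"{unidirectional} Tb/s one way, {bidirectional} Tb/s bidirectional")
```

The one-way value corresponds to the 25.6 Tbps switching capacity quoted in the specification table, and doubling it gives the 51.2 Tb/s bidirectional figure.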

Management Architecture

What distinguishes the QM9700 from its counterpart is its integrated management subsystem. The switch features an Intel Core i3 Coffee Lake CPU, 8GB DDR4 system memory, and 16GB M.2 SSD storage, creating a complete embedded computing platform dedicated to network management and control.

This integrated architecture enables the QM9700 to function as a self-contained management node within your infrastructure. The on-board subnet manager supports out-of-the-box deployment for clusters with up to 2,000 nodes, eliminating the need for external management infrastructure in small to medium-sized deployments.

MLNX-OS Software Platform

The QM9700 runs NVIDIA MLNX-OS, a sophisticated InfiniBand switch operating system designed specifically for high-performance data centers. MLNX-OS provides comprehensive management capabilities through multiple interfaces:

- Command-Line Interface (CLI) for direct administrative control

- Web-based User Interface (WebUI) for graphical management

- Simple Network Management Protocol (SNMP) for integration with enterprise monitoring systems

- JavaScript Object Notation (JSON) APIs for programmatic control and automation

This multi-interface approach ensures seamless integration with existing IT infrastructure and automation frameworks, from traditional enterprise monitoring tools to modern DevOps orchestration platforms.

Hardware Specifications

| Component | Specification |
| --- | --- |
| Switch ASIC | NVIDIA Quantum-2 IC |
| CPU | Intel Core i3 Coffee Lake |
| System Memory | 8GB DDR4 2666 MT/s SO-DIMM |
| Storage | 16GB M.2 SSD SATA |
| Port Configuration | 64 × 400Gb/s (32 OSFP connectors) |
| Switching Capacity | 25.6 Tbps |
| Aggregate Throughput | 51.2 Tb/s bidirectional |
| Packet Processing | 66.5 BPPS |
| Form Factor | 1U rack mount |
| Dimensions | 17.2″ × 1.7″ × 26″ (438mm × 43.6mm × 660mm) |
| Weight | 13.6 kg (1 PSU) / 14.8 kg (2 PSU) |

Power and Environmental Characteristics

The QM9700 demonstrates NVIDIA’s commitment to energy efficiency without compromising performance. Power consumption specifications include:

- Typical Power (with passive cables): 747W

- Maximum Power (with active cables): 1,720W

- Power Supply: Dual 1+1 redundant hot-swappable PSUs

- Input Voltage: 200-240VAC, 50/60Hz

- Efficiency: 80 PLUS Gold (or better) and ENERGY STAR certified

Environmental operating parameters ensure reliable operation across diverse data center conditions:

- Operating Temperature: 0°C to 35°C (forward airflow) / 0°C to 40°C (reverse airflow)

- Storage Temperature: -40°C to 70°C

- Humidity: 10% to 85% non-condensing (operating)

- Maximum Altitude: 3,050 meters

Management Ports and Connectivity

The QM9700 provides comprehensive connectivity options for management and monitoring:

- 1× USB 3.0 port for local management and firmware updates

- 1× USB port for I2C channel access

- 1× RJ45 Ethernet port for out-of-band management network

- 1× RJ45 UART port for serial console access

Quantum-2 QM9790 64×400Gb/s: The Externally Managed Solution

Design Philosophy and Target Use Cases

The NVIDIA Quantum-2 QM9790 takes a fundamentally different approach to network management. As an externally managed switch, the QM9790 eliminates the on-board management subsystem, relying instead on NVIDIA’s Unified Fabric Manager (UFM) platform for centralized control and orchestration.

This architectural decision makes the QM9790 ideal for large-scale deployments where centralized management, advanced analytics, and unified fabric visibility are paramount. By removing the embedded CPU and management subsystem, the QM9790 achieves lower power consumption and reduced complexity, making it particularly attractive for massive AI clusters and hyperscale data centers.

Core Technical Specifications

The QM9790 shares the same powerful NVIDIA Quantum-2 ASIC and port configuration as its managed counterpart, delivering identical raw performance characteristics:

| Component | Specification |
| --- | --- |
| Switch ASIC | NVIDIA Quantum-2 IC |
| CPU | None (externally managed) |
| System Memory | Not applicable |
| Storage | Not applicable |
| Port Configuration | 64 × 400Gb/s (32 OSFP connectors) |
| Switching Capacity | 25.6 Tbps |
| Aggregate Throughput | 51.2 Tb/s bidirectional |
| Packet Processing | 66.5 BPPS |
| Form Factor | 1U rack mount |
| Dimensions | 17.2″ × 1.7″ × 26″ (438mm × 43.6mm × 660mm) |
| Weight | 13.6 kg (1 PSU) / 14.8 kg (2 PSU) |

Power Efficiency Advantages

The elimination of management hardware translates directly into measurable power savings:

- Typical Power (with passive cables): 640W

- Maximum Power (with active cables): 1,610W

- Power Supply: Dual 1+1 redundant hot-swappable PSUs

- Input Voltage: 200-240VAC, 50/60Hz

The QM9790 demonstrates approximately 14% lower typical power consumption compared to the QM9700, which becomes significant when multiplied across hundreds or thousands of switches in hyperscale deployments.
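The percentage can be reproduced from the wattage figures quoted for the two switches. The sketch below is a plain calculation over those numbers; the dictionary layout and function name are illustrative choices.

```python
# Typical and maximum power figures quoted in this guide, in watts.
POWER_W = {
    "QM9700": {"typical": 747, "maximum": 1720},
    "QM9790": {"typical": 640, "maximum": 1610},
}


def percent_saving(baseline_w: float, reduced_w: float) -> float:
    """Relative reduction of reduced_w versus baseline_w, in percent."""
    return 100 * (baseline_w - reduced_w) / baseline_w


for mode in ("typical", "maximum"):
    saving = percent_saving(POWER_W["QM9700"][mode], POWER_W["QM9790"][mode])
    print(f"{mode}: {saving:.1f}% lower on the QM9790")
```

The typical-power saving works out to about 14.3%, while the saving at maximum power is smaller (about 6.4%), because active cables dominate the power draw in that case.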

NVIDIA Unified Fabric Manager Integration

The QM9790 is designed to work seamlessly with NVIDIA UFM, a comprehensive fabric management platform that provides:

- Centralized Provisioning: Deploy and configure switches across the entire fabric from a single management interface

- Real-Time Monitoring: Track performance metrics, congestion points, and network health across all switches simultaneously

- Predictive Analytics: Identify potential issues before they impact application performance

- Automated Troubleshooting: Rapidly diagnose and resolve network problems with intelligent root cause analysis

- Telemetry and Analytics: Collect detailed performance data for optimization and capacity planning

The UFM platform transforms network management from a switch-by-switch operation into a holistic, fabric-wide orchestration system. This approach proves invaluable in large AI training clusters where network performance directly impacts GPU utilization and training efficiency.

QM9700 vs QM9790: Detailed Comparison

Core Differences Table

| Feature | QM9700 | QM9790 |
| --- | --- | --- |
| Management Type | Internally managed with on-board subnet manager | Externally managed via NVIDIA UFM |
| Management CPU | Intel Core i3 Coffee Lake | None |
| System Memory | 8GB DDR4 | None |
| Operating System | MLNX-OS | Requires UFM |
| Management Interfaces | CLI, WebUI, SNMP, JSON | UFM platform only |
| Typical Power Consumption | 747W | 640W (14% lower) |
| Maximum Power Consumption | 1,720W | 1,610W |
| Ideal Deployment Size | Up to 2,000 nodes | Large-scale clusters (2,000+ nodes) |
| Setup Complexity | Simple out-of-the-box | Requires UFM infrastructure |
| Weight (2 PSU) | 14.8 kg | 14.8 kg |
| Port Configuration | 64 × 400Gb/s | 64 × 400Gb/s |
| Switching Performance | 25.6 Tbps | 25.6 Tbps |

Identical Performance Characteristics

Both switches share the same core networking capabilities:

- 64 ports of NDR InfiniBand at 400Gb/s per port

- 32 OSFP (Octal Small Form-factor Pluggable) physical connectors

- Port-split capability to support 128 ports of 200Gb/s

- 51.2 Tb/s aggregate bidirectional throughput

- 66.5 billion packets per second processing capacity

- Support for passive copper, active copper, and active optical cables

- 1U standard rack-mount form factor

- Compatible with Fat Tree, Dragonfly+, SlimFly, and multi-dimensional Torus topologies

Management Approach Comparison

QM9700 Management Model:

The QM9700’s integrated management approach offers significant advantages for organizations that value simplicity and self-contained operation. The on-board subnet manager can automatically discover and configure network topology, assign local identifiers (LIDs) to nodes, and establish optimal routing paths without external intervention.
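To make the discovery and LID assignment step concrete, here is a toy Python sketch. It is not how MLNX-OS or any real subnet manager is implemented: a real one exchanges management datagrams, assigns LIDs to ports, and programs forwarding tables. The fabric layout and node names below are hypothetical, and only the idea of sweeping the fabric and handing out unicast LIDs is shown.

```python
from collections import deque

# Hypothetical 2-leaf, 1-spine fabric; node names are made up for illustration.
FABRIC = {
    "leaf1": ["spine1", "hostA", "hostB"],
    "leaf2": ["spine1", "hostC"],
    "spine1": ["leaf1", "leaf2"],
    "hostA": ["leaf1"],
    "hostB": ["leaf1"],
    "hostC": ["leaf2"],
}

FIRST_UNICAST_LID = 0x0001  # unicast LID range defined by the InfiniBand spec
LAST_UNICAST_LID = 0xBFFF


def sweep_and_assign_lids(fabric, start):
    """Breadth-first discovery from `start`, giving each node the next free LID."""
    lids = {}
    next_lid = FIRST_UNICAST_LID
    queue = deque([start])
    seen = {start}
    while queue:
        node = queue.popleft()
        if next_lid > LAST_UNICAST_LID:
            raise RuntimeError("unicast LID space exhausted")
        lids[node] = next_lid
        next_lid += 1
        for neighbor in sorted(fabric[node]):  # sorted for a repeatable order
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return lids


lids = sweep_and_assign_lids(FABRIC, start="leaf1")
for node, lid in lids.items():
    print(f"{node}: LID {lid}")
```

Starting the sweep from the switch that hosts the subnet manager mirrors the self-contained model described above: no external controller is needed to number the fabric.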

This self-sufficiency makes the QM9700 ideal for:

- Research laboratories with limited IT support staff

- Proof-of-concept AI cluster deployments

- Edge computing installations requiring autonomous operation

- Organizations transitioning from traditional networking to InfiniBand

- Deployments where network management traffic must remain isolated

QM9790 Management Model:

The QM9790’s dependence on UFM reflects a philosophy optimized for large-scale operations. By centralizing management intelligence in the UFM platform, organizations gain:

- Unified visibility across potentially thousands of switches

- Consistent policy enforcement throughout the entire fabric

- Advanced telemetry collection for performance optimization

- Simplified firmware updates across all switches simultaneously

- Integration with data center automation platforms

This approach excels in:

- Hyperscale AI training facilities

- Multi-tenant cloud environments

- Production HPC clusters requiring enterprise-grade management

- Organizations with established data center operations teams

- Deployments requiring detailed compliance reporting and auditing

HGX Servers for Clusters: Integration with Quantum-2 InfiniBand

Understanding NVIDIA HGX Architecture

The NVIDIA HGX platform represents the gold standard for AI and high-performance computing workloads, integrating multiple high-performance GPUs with ultra-fast interconnects in a standardized, scalable architecture. HGX systems combine NVIDIA GPUs, NVLink interconnects, and high-speed networking to deliver unprecedented computational performance for AI training, inference, and scientific computing applications.

HGX Platform Generations

Modern HGX platforms are available in multiple configurations:

- HGX H100: Based on NVIDIA Hopper architecture GPUs with 8× H100 SXM5 GPUs

- HGX H200: Enhanced Hopper variant with increased memory bandwidth

- HGX B200: Next-generation Blackwell architecture GPUs

- HGX B300: Latest Blackwell Ultra GPUs delivering 30× AI factory output improvement

Each HGX baseboard can be configured with four or eight GPUs interconnected via high-bandwidth NVLink, with the entire system connecting to the data center network through high-speed InfiniBand or Ethernet adapters.

NVIDIA HGX B200 (8-GPU) Platform

| Specification Category | Parameter | Value |
| --- | --- | --- |
| GPU Configuration | GPU Type | 8x NVIDIA B200 Tensor Core GPUs |
| GPU Configuration | GPU Architecture | Blackwell (208 billion transistors per GPU) |
| GPU Configuration | GPU Form Factor | SXM5 module with integrated cooling interface |
| GPU Configuration | Manufacturing Process | TSMC 4NP (4nm process technology) |
| Memory Architecture | Total GPU Memory | 1,440GB HBM3e (180GB per GPU) |
| Memory Architecture | Memory Type | HBM3e (High Bandwidth Memory 3 Enhanced) |
| Memory Architecture | Memory Interface per GPU | 4,096-bit |
| Memory Architecture | Memory Speed | 8 Gbps per pin |
| Memory Architecture | Per-GPU Memory Bandwidth | 8 TB/s |
| Memory Architecture | Aggregate Memory Bandwidth | 64 TB/s (across all 8 GPUs) |

Quantum-2 Integration with HGX Clusters

The synergy between HGX servers and Quantum-2 InfiniBand switches creates the foundation for world-class AI infrastructure. This integration addresses the critical challenge in modern AI workloads: maintaining sufficient data throughput to keep GPUs fully utilized during distributed training and inference operations.

Key Integration Benefits:

Ultra-Low Latency Communication: Quantum-2 InfiniBand delivers end-to-end latency of roughly 1 to 2 microseconds for GPU-to-GPU communication across servers (only the per-switch forwarding delay is sub-microsecond), enabling efficient gradient synchronization during distributed AI training. This low latency helps GPUs spend their time computing rather than waiting for network communication.

Massive Bandwidth for Data Movement: With 400Gb/s per port, a single HGX server equipped with multiple InfiniBand adapters can achieve multi-terabit network connectivity. This bandwidth supports the enormous data movement requirements of large language models and computer vision workloads.

RDMA for Zero-Copy Transfers: InfiniBand’s Remote Direct Memory Access (RDMA) capabilities allow GPUs to directly access memory on remote servers without CPU involvement, dramatically reducing communication overhead and improving efficiency.

SHARP Technology for Collective Operations: The Scalable Hierarchical Aggregation and Reduction Protocol (SHARPv3) offloads collective communication operations (like AllReduce) to the network switches themselves, providing 32× higher AI acceleration compared to previous generations.

Network Topologies for HGX Clusters

Quantum-2 switches support multiple network topologies optimized for different cluster scales and communication patterns:

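Fat Tree Topology: Traditional hierarchical structure providing full bisection bandwidth. With 64-port QM9700/QM9790 switches, a non-blocking two-level Fat Tree reaches 2,048 endpoints at 400Gb/s (64 leaf switches with 32 downlinks each, plus 32 spine switches), and a third level extends this to tens of thousands of endpoints while keeping hop counts low.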

Dragonfly+ Topology: Advanced topology connecting over a million nodes at 400Gb/s in just three hops. Ideal for massive-scale AI training facilities and national supercomputing centers.

Rail-Optimized Topology: Specialized configuration aligning with HGX multi-rail networking, where each GPU or GPU pair connects to dedicated network rails for maximum parallelism.
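For the Fat Tree case, the reachable scale depends only on switch radix and the number of levels. The sketch below uses the standard capacity formula for a non-blocking fat tree in which every switch devotes half its ports to downlinks. It is a planning aid under that assumption, not a statement about any specific NVIDIA reference design.

```python
def fat_tree_endpoints(radix: int, levels: int) -> int:
    """Maximum endpoints of a non-blocking fat tree built from radix-port switches.

    Each non-top switch uses radix/2 ports down and radix/2 ports up, which
    gives 2 * (radix / 2) ** levels endpoints.
    """
    if radix < 2 or radix % 2 or levels < 1:
        raise ValueError("radix must be even and >= 2, levels must be >= 1")
    return 2 * (radix // 2) ** levels


for radix, label in ((64, "400Gb/s ports"), (128, "200Gb/s split ports")):
    for levels in (2, 3):
        count = fat_tree_endpoints(radix, levels)
        print(f"{label}, {levels} levels: {count:,} endpoints")
```

Note that an endpoint here is one adapter port, so an HGX server with eight adapters consumes eight endpoints.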

ConnectX-7 Adapter Integration

HGX servers typically deploy NVIDIA ConnectX-7 InfiniBand adapters that complement Quantum-2 switches:

- 400Gb/s throughput per adapter

- PCIe Gen5 host interface with up to 32 lanes

- 330-370 million messages per second RDMA message rate

- Hardware-accelerated encryption and compression

- GPUDirect RDMA for direct GPU-to-network data paths

Real-World HGX Cluster Configuration

A typical large-scale AI training cluster might include:

- 256 HGX H100 servers (2,048 GPUs total)

- 512 ConnectX-7 adapters (2 per HGX server for redundancy)

- 32 QM9790 switches in leaf layer

- 8 QM9790 switches in spine layer

- 2 UFM management servers for centralized control

- Two-level Fat Tree (leaf and spine) topology providing full fabric connectivity

This configuration delivers:

- 204.8 Tb/s total network bisection bandwidth

- Sub-2 microsecond average network latency

- Support for multi-tenant AI workloads with performance isolation

- Redundant paths for automatic failover and self-healing
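The component counts in this example can be cross-checked with a few lines of Python. The sketch assumes adapters are spread evenly across leaf switches and that all spine ports are used for leaf uplinks. Both are assumptions made for the calculation, not details given in the configuration list.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    servers: int = 256
    gpus_per_server: int = 8
    adapters_per_server: int = 2
    leaf_switches: int = 32
    spine_switches: int = 8
    ports_per_switch: int = 64
    port_speed_gbps: int = 400

    @property
    def gpus(self) -> int:
        return self.servers * self.gpus_per_server

    @property
    def adapters(self) -> int:
        return self.servers * self.adapters_per_server

    @property
    def downlinks_per_leaf(self) -> int:
        # Assumes adapters are spread evenly across the leaf layer.
        return self.adapters // self.leaf_switches

    @property
    def uplinks_per_leaf(self) -> int:
        # Assumes every spine port terminates a leaf uplink.
        return self.spine_switches * self.ports_per_switch // self.leaf_switches

    @property
    def injection_bandwidth_tbps(self) -> float:
        return self.adapters * self.port_speed_gbps / 1000


c = Cluster()
print(f"{c.gpus} GPUs, {c.adapters} adapters")
print(f"per leaf: {c.downlinks_per_leaf} downlinks, {c.uplinks_per_leaf} uplinks")
print(f"aggregate adapter bandwidth: {c.injection_bandwidth_tbps} Tb/s")
```

Under these assumptions each leaf has 16 downlinks and 16 uplinks, so the fabric is non-blocking, and the 204.8 Tb/s figure equals 512 adapters at 400Gb/s each.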

AI Infrastructure: Building Next-Generation Data Centers

The Role of InfiniBand in Modern AI Infrastructure

As artificial intelligence workloads evolve from research experiments to production-scale deployments, network infrastructure has emerged as a critical performance bottleneck. Modern AI applications—from large language model training to real-time inference serving—demand network architectures that can match the computational power of thousands of high-performance GPUs.

InfiniBand technology, and specifically NVIDIA’s Quantum-2 platform, addresses these demands through several key capabilities:

Zero Packet Loss Design

Unlike Ethernet-based networks that rely on packet retransmission to handle congestion, InfiniBand implements credit-based flow control ensuring zero packet loss under normal operation. This design eliminates retransmission delays that can significantly impact AI training workloads where thousands of GPUs must synchronize frequently.
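The idea behind credit-based flow control can be shown with a small simulation. This is a simplified model with a single link, a fixed number of receiver buffer slots, and a receiver that drains one packet every few ticks. The class and parameter names are invented for the example and do not correspond to any InfiniBand API.

```python
from collections import deque


class CreditLink:
    """Sender may transmit only while it holds credits for free receiver slots."""

    def __init__(self, receiver_slots: int):
        self.credits = receiver_slots
        self.rx_buffer = deque()

    def try_send(self, packet) -> bool:
        if self.credits == 0:
            return False          # sender waits; the packet is not dropped
        self.credits -= 1
        self.rx_buffer.append(packet)
        return True

    def drain_one(self):
        if not self.rx_buffer:
            return None
        packet = self.rx_buffer.popleft()
        self.credits += 1         # freed slot is advertised back as a credit
        return packet


def simulate(num_packets: int, receiver_slots: int, drain_every: int):
    link = CreditLink(receiver_slots)
    pending = deque(range(num_packets))
    delivered, stalls, peak = [], 0, 0
    tick = 0
    while pending or link.rx_buffer:
        tick += 1
        if pending:
            if link.try_send(pending[0]):
                pending.popleft()
            else:
                stalls += 1
        peak = max(peak, len(link.rx_buffer))
        if tick % drain_every == 0:
            packet = link.drain_one()
            if packet is not None:
                delivered.append(packet)
    return delivered, stalls, peak


delivered, stalls, peak = simulate(num_packets=10, receiver_slots=2, drain_every=3)
print(f"delivered {len(delivered)}/10 packets, {stalls} sender stalls, peak buffer {peak}")
```

The slow receiver causes the sender to stall, but the buffer never exceeds its two slots and every packet arrives in order. Congestion shows up as waiting rather than as loss and retransmission.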

In-Network Computing with SHARPv3

Traditional network switches simply forward packets from source to destination. Quantum-2’s SHARPv3 technology transforms switches into active participants in AI computations by performing data reduction operations within the network fabric itself.

How SHARP Accelerates AI Training:

During distributed AI training, GPUs must regularly synchronize their gradient updates through collective communication operations (AllReduce, Reduce-Scatter, AllGather). Traditionally, these operations consume significant time as data flows through multiple network hops.

SHARPv3 accelerates this process by:

- Performing aggregation operations (sum, min, max) directly in switch ASICs

- Reducing network traffic by up to 32×

- Eliminating CPU involvement in reduction operations

- Supporting multi-tenant operation allowing multiple AI jobs to use SHARP simultaneously

The result: AI training jobs complete faster, GPUs remain utilized longer, and data center efficiency improves dramatically.
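A toy model helps show why aggregating inside the switches reduces traffic toward the top of the tree. The sketch below is not the SHARP protocol itself; it only mimics the pattern of leaf switches summing the gradients of their attached hosts before forwarding a single partial result upward. Host counts and gradient values are made up.

```python
def reduce_vectors(vectors):
    """Element-wise sum of equal-length vectors."""
    return [sum(values) for values in zip(*vectors)]


def in_network_allreduce(host_gradients, hosts_per_leaf):
    # Each leaf switch sums the gradients of its attached hosts.
    leaf_partials = [
        reduce_vectors(host_gradients[i:i + hosts_per_leaf])
        for i in range(0, len(host_gradients), hosts_per_leaf)
    ]
    # The spine sums one partial per leaf; the result is then sent back down.
    total = reduce_vectors(leaf_partials)
    return total, len(leaf_partials)


gradients = [[h, 2 * h] for h in range(8)]      # 8 hypothetical hosts
total, vectors_at_spine = in_network_allreduce(gradients, hosts_per_leaf=4)
print(total, vectors_at_spine)
```

With eight hosts behind two leaf switches, the spine receives two vectors instead of eight, and the reduced result is identical to summing all host gradients directly.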

Adaptive Routing and Congestion Management

Quantum-2 switches implement sophisticated adaptive routing algorithms that dynamically select optimal paths through the network fabric based on real-time congestion information. This capability proves essential in large AI clusters where traffic patterns can change rapidly as different jobs start, stop, or enter communication-intensive phases.

Key Adaptive Routing Features:

- Sub-packet level routing decisions for maximum utilization

- Multiple path exploration to avoid congested links

- Quality of Service (QoS) guarantees for latency-sensitive workloads

- Enhanced Virtual Lane (VL) mapping for traffic isolation
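The benefit of congestion-aware path selection can be illustrated by comparing it with static, hash-based placement. The following sketch is a simplified placement model with no queue draining or timing. The flow IDs, packet counts, and function names are hypothetical, and the selection rule (pick the shallowest queue) is only one possible policy.

```python
def static_port(flow_id: int, ports: list) -> int:
    """Static routing: a flow always hashes to the same uplink."""
    return ports[flow_id % len(ports)]


def adaptive_port(ports: list, queue_depth: dict) -> int:
    """Adaptive routing: pick the uplink with the shallowest queue right now."""
    return min(ports, key=lambda p: (queue_depth[p], p))


def max_queue_after(flows, ports, adaptive: bool) -> int:
    depth = {p: 0 for p in ports}
    for flow_id, packets in flows:
        for _ in range(packets):
            if adaptive:
                port = adaptive_port(ports, depth)
            else:
                port = static_port(flow_id, ports)
            depth[port] += 1
    return max(depth.values())


UPLINKS = [0, 1, 2, 3]
# Three heavy flows whose IDs all hash to uplink 0, plus one light flow.
FLOWS = [(0, 40), (4, 40), (8, 40), (1, 8)]

print("static  :", max_queue_after(FLOWS, UPLINKS, adaptive=False))
print("adaptive:", max_queue_after(FLOWS, UPLINKS, adaptive=True))
```

In this example static placement piles 120 packets onto one uplink, while the adaptive rule spreads the same 128 packets evenly at 32 per uplink.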

Scaling to Hyperscale: Million-Node Connectivity

The Quantum-2 platform’s support for Dragonfly+ topology enables unprecedented scale. A single Dragonfly+ fabric can interconnect over one million nodes at full 400Gb/s bandwidth with just three network hops. This scalability makes Quantum-2 suitable for:

- National research laboratories building exascale supercomputers

- Cloud providers offering large-scale AI training services

- Enterprises deploying private AI infrastructure at hyperscale

- Research consortiums sharing computational resources

Building Multi-Tenant AI Cloud Infrastructure

Modern AI infrastructure must support multiple concurrent users and workloads while maintaining performance isolation and security. Quantum-2’s advanced virtualization and partitioning capabilities enable robust multi-tenant operation:

Virtual Fabrics: Partition physical network into isolated logical networks, each with dedicated bandwidth guarantees and independent routing domains.

Performance Isolation: Ensure one tenant’s communication patterns cannot impact another tenant’s network performance, critical for cloud AI service providers.

Secure Partitioning: Implement hardware-enforced isolation preventing unauthorized access between tenant domains.

Power Efficiency at Scale

While delivering unprecedented performance, Quantum-2 maintains industry-leading power efficiency. Considering total cost of ownership for large AI clusters:

QM9700 Power Profile:

- Typical: 747W per switch

- In a 1,000-switch deployment: 747 kW baseline power

- Annual energy cost (at $0.10/kWh): $654,000

QM9790 Power Profile:

- Typical: 640W per switch

- In a 1,000-switch deployment: 640 kW baseline power

- Annual energy cost (at $0.10/kWh): $560,000

- Annual savings: $94,000 compared to QM9700

At 1,000 switches the difference is roughly $94,000 per year, or close to $470,000 over a five-year service life, before counting the matching reduction in cooling load. Larger fabrics scale these savings proportionally.
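The annual cost figures above can be reproduced with a short calculation. The sketch assumes continuous operation at typical power for 8,760 hours per year and ignores cooling overhead; the function name is illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, assuming continuous operation


def annual_energy_cost_usd(watts_per_switch: float, switches: int,
                           usd_per_kwh: float) -> float:
    """Yearly electricity cost for a fleet of switches at constant power draw."""
    kilowatts = watts_per_switch * switches / 1000
    return kilowatts * HOURS_PER_YEAR * usd_per_kwh


qm9700 = annual_energy_cost_usd(747, switches=1000, usd_per_kwh=0.10)
qm9790 = annual_energy_cost_usd(640, switches=1000, usd_per_kwh=0.10)

print(f"QM9700: ${qm9700:,.0f} per year")
print(f"QM9790: ${qm9790:,.0f} per year")
print(f"Saving: ${qm9700 - qm9790:,.0f} per year")
```

Changing `usd_per_kwh` or the switch count lets you adapt the estimate to a specific facility.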

Integration with AI Software Frameworks

Quantum-2 InfiniBand works seamlessly with leading AI development frameworks:

NVIDIA HPC-X: Comprehensive MPI and SHMEM software suite leveraging InfiniBand In-Network Computing for optimal application performance.

PyTorch Distributed: Native support for RDMA and collective communication acceleration, enabling efficient multi-GPU training.

TensorFlow: Integration with NVIDIA’s optimized communication libraries for distributed training.

Horovod: Uber’s distributed deep learning framework with built-in InfiniBand optimization.

DeepSpeed: Microsoft’s deep learning optimization library leveraging InfiniBand bandwidth for pipeline and model parallelism.

Future-Proofing AI Infrastructure

Quantum-2’s architecture provides investment protection through:

Backwards Compatibility: Support for HDR (200Gb/s) and previous-generation InfiniBand speeds, enabling incremental upgrades.

Forward Migration Path: Integration with upcoming Quantum-X800 (800Gb/s) and future technologies.

Software-Defined Capabilities: Regular firmware updates add new features without hardware replacement.

Technical Deep Dive: Advanced Features

Port-Split Technology

One of Quantum-2’s most useful features is port-split capability. Each 400Gb/s port can be split into two 200Gb/s ports, which doubles the switch radix from 64 to 128 ports when operating at 200Gb/s. (Each of the 32 OSFP connectors carries two 400Gb/s ports, or four 200Gb/s ports when split.)

Use Cases for Port-Split:

- Top-of-Rack Consolidation: Connect 128 servers with 200Gb/s links using a single switch

- Gradual Migration: Support both 400Gb/s and 200Gb/s connections simultaneously during infrastructure transitions

- Cost Optimization: Reduce switch count in medium-density deployments
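When planning a mixed 400Gb/s and 200Gb/s deployment, it helps to see how many ports of each speed a single switch can offer. The sketch below assumes any 400Gb/s port can be split independently of the others. That is a simplifying assumption, and real hardware may impose per-connector constraints that this model ignores.

```python
TOTAL_NDR_PORTS = 64   # 400Gb/s ports per switch (32 OSFP connectors x 2)
SPLIT_FACTOR = 2       # one 400Gb/s port becomes two 200Gb/s ports


def port_mix(ndr_ports_kept: int) -> dict:
    """Ports available when some 400Gb/s ports are kept and the rest are split."""
    if not 0 <= ndr_ports_kept <= TOTAL_NDR_PORTS:
        raise ValueError("ndr_ports_kept must be between 0 and 64")
    split_ports = TOTAL_NDR_PORTS - ndr_ports_kept
    return {"400G": ndr_ports_kept, "200G": split_ports * SPLIT_FACTOR}


print(port_mix(64))  # no ports split
print(port_mix(0))   # every port split
print(port_mix(48))  # mixed: 48 uplinks at 400Gb/s, rest split for servers
```

The fully split case gives the 128-port radix described above. The mixed case shows one way to keep 400Gb/s uplinks while attaching servers at 200Gb/s.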

Congestion Control Mechanisms

Quantum-2 implements multiple congestion control technologies:

End-to-End Credit-Based Flow Control: Prevents buffer overflow by managing transmission rates based on receiver readiness.

Explicit Congestion Notification (ECN): Marks packets experiencing congestion, allowing endpoints to adjust transmission rates proactively.

Data Center Quantized Congestion Notification (DCQCN): Advanced algorithm balancing throughput and latency under varying load conditions.

Adaptive Routing: Dynamically redirects traffic away from congested paths in real-time.

Quality of Service (QoS) Implementation

Quantum-2 supports sophisticated QoS policies enabling:

- Traffic prioritization based on application requirements

- Bandwidth reservation guaranteeing minimum throughput for critical workloads

- Latency bounds for real-time applications

- Fair queuing preventing starvation under contention

Telemetry and Network Analytics

Both QM9700 and QM9790 provide comprehensive telemetry capabilities:

Real-Time Metrics:

- Per-port bandwidth utilization

- Packet loss and error statistics

- Buffer occupancy levels

- Congestion indicators

- Temperature and power consumption

Historical Analytics:

- Traffic pattern analysis

- Congestion hotspot identification

- Predictive maintenance indicators

- Capacity planning data

Security Features

Modern AI infrastructure demands robust security:

Hardware Root of Trust: Cryptographic verification of firmware and boot process integrity.

Secure Boot: Ensures only authenticated code executes on switch processors.

Encryption Support: Hardware-accelerated encryption for data in flight (when using ConnectX adapters).

Partition Enforcement: Hardware isolation between virtual fabrics and tenant domains.

Deployment Best Practices

Choosing Between QM9700 and QM9790

Select QM9700 When:

- Deploying clusters with up to 2,000 nodes

- Requiring autonomous operation without external management infrastructure

- Building proof-of-concept or research clusters

- Limited IT operations staff available

- Need for direct switch management via CLI or WebUI

- Budget constraints limit external management infrastructure investment

Select QM9790 When:

- Building large-scale production AI infrastructure (2,000+ nodes)

- Have existing UFM deployment or infrastructure

- Require centralized fabric-wide management and analytics

- Power efficiency is critical (14% lower power consumption)

- Multi-tenant cloud environment requiring advanced orchestration

- Compliance requires centralized audit logging and policy enforcement
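The selection criteria above can be condensed into a small helper for first-pass planning. This is only a sketch of this guide's rules of thumb. The parameter names are invented, the 2,000-node threshold is the one quoted for the on-board subnet manager, and a real decision would weigh budget and staffing as well.

```python
def recommend_switch(nodes: int, has_ufm: bool = False,
                     needs_fabric_wide_analytics: bool = False) -> str:
    """First-pass model choice based on the rules of thumb in this guide."""
    if nodes < 1:
        raise ValueError("nodes must be positive")
    if nodes > 2000 or has_ufm or needs_fabric_wide_analytics:
        return "QM9790"   # externally managed, relies on UFM
    return "QM9700"       # on-board subnet manager, standalone operation


print(recommend_switch(nodes=256))
print(recommend_switch(nodes=256, has_ufm=True))
print(recommend_switch(nodes=5000))
```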

Network Topology Selection

Fat Tree for General-Purpose AI Clusters:

Best for clusters where any GPU may need to communicate with any other GPU at full bandwidth. Provides predictable performance and simple troubleshooting.

Dragonfly+ for Hyperscale Deployments:

Optimal for massive clusters (10,000+ GPUs) where cost per port and total cable count become significant factors. Requires careful traffic engineering.

Rail-Optimized for Maximum Performance:

Align network rails with GPU topology for optimal performance. Typically deploy 4-8 InfiniBand rails per HGX server, each connected to independent switch domains.

Cable Selection Guidelines

Passive Copper (DAC):

- Most cost-effective option

- Limited reach (typically up to 3 meters for 400Gb/s)

- Lowest power consumption

- Ideal for top-of-rack connections

Active Copper (ACC):

- Extended reach up to 7 meters

- Moderate power consumption

- Good for mid-rack and adjacent-rack connections

Active Optical Cables (AOC):

- Longest reach (up to 100+ meters)

- Lightweight and flexible

- Higher power consumption

- Required for spine-leaf connections in large fabrics
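These reach limits translate into a simple lookup by link length. The sketch below uses the approximate distances listed above (3 meters for passive copper, 7 meters for active copper) as thresholds. Actual limits depend on the specific cable part, so treat the numbers as planning defaults.

```python
DAC_MAX_M = 3   # passive copper reach quoted above for 400Gb/s
ACC_MAX_M = 7   # active copper reach quoted above


def suggest_cable(length_m: float) -> str:
    """Pick the lowest-cost cable type whose reach covers the link length."""
    if length_m <= 0:
        raise ValueError("length_m must be positive")
    if length_m <= DAC_MAX_M:
        return "DAC"
    if length_m <= ACC_MAX_M:
        return "ACC"
    return "AOC"


for length in (1.5, 5, 30):
    print(f"{length} m -> {suggest_cable(length)}")
```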

Power and Cooling Planning

Power Requirements:

- Budget 750W per QM9700 or 650W per QM9790 for typical operation

- Plan for 1,720W per QM9700 or 1,610W per QM9790 maximum power with active cables

- Deploy dual power supplies for redundancy

- Connect each PSU to independent power distribution units (PDUs)

Cooling Considerations:

- Quantum-2 switches support both front-to-rear and rear-to-front airflow

- Match airflow direction to data center hot aisle / cold aisle configuration

- Maintain ambient temperature below 35°C (forward airflow) or 40°C (reverse airflow)

- Ensure unobstructed airflow with proper rack spacing

Initial Configuration Steps

For QM9700:

- Connect management port to out-of-band management network

- Access switch CLI via SSH or local console

- Configure hostname and management IP address

- Enable subnet manager functionality

- Configure switch settings via MLNX-OS CLI

- Verify fabric initialization and node discovery

- Implement QoS policies and routing preferences

For QM9790:

- Deploy NVIDIA UFM management servers

- Connect QM9790 switches to UFM-managed network

- Discover switches in UFM console

- Apply fabric-wide configuration policies

- Enable telemetry collection

- Configure subnet managers on UFM servers

- Monitor fabric bring-up in UFM dashboard

Performance Benchmarking and Optimization

Network Performance Metrics

Key performance indicators for Quantum-2 deployments:

Bandwidth Metrics:

- Unidirectional bandwidth: Up to 400 Gb/s per port

- Bidirectional bandwidth: Up to 800 Gb/s aggregate per port

- Fabric bisection bandwidth: Topology dependent

- Effective bandwidth under load: Typically 95-98% of theoretical maximum

Latency Metrics:

- Switch forwarding latency: Sub-200 nanoseconds

- End-to-end latency (two-hop): ~1.5 microseconds

- MPI latency (small messages): ~1.2 microseconds

Packet Processing:

- Maximum packet rate: 66.5 billion packets per second

- Small packet performance: Full line rate at all packet sizes

- Burst absorption: Deep packet buffers minimize packet loss during microbursts

AI Training Performance Impact

Real-world performance improvements with Quantum-2 InfiniBand:

Large Language Model Training:

- GPT-3-scale models: Up to 2.6× faster training compared to previous-generation networking

- Gradient synchronization time: Reduced by 32× with SHARPv3 acceleration

- GPU utilization: Sustained 95%+ utilization during distributed training

Computer Vision Models:

- ImageNet training: Near-linear scaling up to 2,048 GPUs

- Real-time inference serving: Sub-millisecond latency for distributed inference

- Data loading bandwidth: Saturated network capacity with parallel data pipelines

Optimization Techniques

Maximize SHARPv3 Utilization:

- Enable SHARP for all collective communication operations

- Use MPI libraries with SHARP integration (HPC-X)

- Configure appropriate aggregation group sizes

- Monitor SHARP efficiency metrics in UFM

Adaptive Routing Tuning:

- Enable adaptive routing for general-purpose workloads

- Consider static routing for highly predictable traffic patterns

- Adjust routing aggressiveness based on congestion sensitivity

- Monitor routing efficiency through fabric telemetry

Quality of Service Configuration:

- Assign high priority to latency-sensitive control traffic

- Reserve bandwidth for storage and management traffic

- Implement fair queuing for multi-tenant environments

- Use separate virtual lanes for different traffic classes

Comparison with Alternative Networking Technologies

InfiniBand vs. Ethernet for AI Workloads

| Characteristic | Quantum-2 InfiniBand | High-Speed Ethernet |
| --- | --- | --- |
| Maximum Speed | 400 Gb/s per port | 400 Gb/s per port |
| Typical Latency | 1-2 microseconds | 3-5 microseconds |
| Packet Loss | Zero (credit-based flow control) | Occasional (TCP retransmissions) |
| RDMA Support | Native | RoCE (complexity overhead) |
| In-Network Computing | SHARPv3 acceleration | Limited or none |
| Ecosystem | HPC/AI focused | General purpose |
| Management Complexity | Moderate (specialized) | Lower (familiar protocols) |
| Collective Operations | Hardware accelerated | Software based |
| Multi-tenant Isolation | Native virtual fabrics | VLAN/SDN based |

When InfiniBand Excels:

- Large-scale distributed AI training requiring frequent gradient synchronization

- HPC simulations with collective communication patterns

- Maximum performance and efficiency paramount

- Dedicated AI/HPC infrastructure

When Ethernet May Suffice:

- Smaller AI deployments (< 128 GPUs)

- Inference-focused workloads with limited inter-node communication

- Mixed-use data centers with diverse workload types

- Existing investment in Ethernet infrastructure

Quantum-2 vs. Previous InfiniBand Generations

HDR (200Gb/s) to NDR (400Gb/s) Improvements:

- 2× bandwidth per port

- 32× higher SHARP acceleration performance

- Lower latency through improved ASIC design

- Better power efficiency per gigabit transferred

Migration Path:

Quantum-2 switches maintain backward compatibility with HDR equipment, enabling gradual migration strategies where new NDR equipment interoperates with existing HDR infrastructure during transition periods.

Frequently Asked Questions (FAQ)

What is the main difference between QM9700 and QM9790 switches?

The primary difference lies in their management architecture. The QM9700 is an internally managed switch featuring an on-board Intel Core i3 CPU, 8GB RAM, and integrated subnet manager running NVIDIA MLNX-OS. This enables autonomous operation and direct management via CLI, WebUI, SNMP, or JSON APIs, making it ideal for clusters up to 2,000 nodes.

The QM9790 is externally managed and relies on NVIDIA Unified Fabric Manager (UFM) for configuration and control. By eliminating on-board management hardware, it consumes approximately 14% less power (640W vs 747W typical) and is optimized for large-scale deployments requiring centralized fabric management. Both switches deliver identical 64-port 400Gb/s performance.

How many servers can I connect to a single Quantum-2 switch?

A single QM9700 or QM9790 switch provides 64 ports of 400Gb/s connectivity through 32 OSFP connectors. In direct-attach configurations, you can connect up to 64 servers with single 400Gb/s connections each. Using port-split technology, the switch can support 128 servers with 200Gb/s connections each.

For HGX server deployments, where each server typically requires multiple network connections (often 2-8 ports per server for redundancy and bandwidth), a single switch might connect 8-32 HGX servers depending on the specific connectivity requirements of your cluster design.
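The port arithmetic above can be sketched in a few lines. This is a minimal illustration that uses only the figures quoted in this answer (64 ports at 400Gb/s, or 128 ports at 200Gb/s when split); the function name `servers_per_switch` is hypothetical, and the number of ports per server is a design input you supply.

```python
def servers_per_switch(ports_per_server: int, split: bool = False) -> int:
    """Return how many servers a single Quantum-2 switch can attach.

    Port counts come from the text: 64 ports at 400Gb/s, or 128 ports
    at 200Gb/s when every port is split. Everything else is an assumption.
    """
    if ports_per_server < 1:
        raise ValueError("ports_per_server must be at least 1")
    total_ports = 128 if split else 64
    # Integer division: a server that cannot get all its ports is not counted.
    return total_ports // ports_per_server


if __name__ == "__main__":
    for n in (1, 2, 4, 8):
        print(f"{n} port(s) per server: {servers_per_switch(n)} servers")
```

With 2 to 8 ports per server this yields 32 down to 8 servers, which matches the 8-32 range given for HGX deployments. Real designs also reserve ports for uplinks, so treat the result as an upper bound.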

What is SHARPv3 and how does it improve AI training performance?

SHARPv3 (Scalable Hierarchical Aggregation and Reduction Protocol version 3) is NVIDIA’s In-Network Computing technology that offloads collective communication operations from GPUs and CPUs to the network switches themselves. During distributed AI training, GPUs must frequently synchronize gradient updates through operations like AllReduce.

Traditionally, these operations consume significant time as data traverses multiple network hops and requires CPU processing. SHARPv3 performs aggregation operations (sum, min, max) directly within switch ASICs as data flows through the network. This approach reduces network traffic by up to 32×, eliminates CPU overhead, and dramatically accelerates training workloads. The third generation also introduces multi-tenant support, allowing multiple AI jobs to utilize SHARP acceleration simultaneously.
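To make the idea of in-network aggregation concrete, here is a toy model, not the SHARP protocol itself. It assumes a simple tree in which each switch sums the gradient vectors of up to `radix` children and forwards one partial result upward; the names `tree_allreduce` and `radix` are illustrative, and the broadcast of the result back to the hosts is omitted.

```python
from typing import List, Tuple

Vector = List[float]


def elementwise_sum(vectors: List[Vector]) -> Vector:
    """Sum equally sized vectors position by position."""
    return [sum(values) for values in zip(*vectors)]


def tree_allreduce(host_grads: List[Vector], radix: int) -> Tuple[Vector, int]:
    """Reduce gradients up a switch tree, summing at every switch.

    Returns the reduced vector and the number of vectors that arrive at
    the root switch. Toy model only: real SHARP behaviour is more involved.
    """
    if radix < 2:
        raise ValueError("radix must be at least 2")
    level = host_grads
    # Each pass models one tier of switches combining up to `radix` inputs.
    while len(level) > radix:
        level = [
            elementwise_sum(level[i:i + radix])
            for i in range(0, len(level), radix)
        ]
    return elementwise_sum(level), len(level)


if __name__ == "__main__":
    grads = [[float(i), 1.0] for i in range(8)]  # 8 hosts, 2-element gradients
    total, arrivals = tree_allreduce(grads, radix=4)
    print(total, arrivals)
```

In this example the root receives 2 partial sums instead of 8 raw vectors, which is the traffic-reduction effect described above in miniature.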

Can I mix QM9700 and QM9790 switches in the same fabric?

Yes, QM9700 and QM9790 switches can coexist within the same InfiniBand fabric since they share identical switching ASICs and support the same protocols. However, management becomes more complex in mixed deployments.

In a mixed environment, you would typically use UFM to manage the entire fabric, including both switch types. The QM9700’s on-board subnet manager would be disabled in favor of UFM’s centralized subnet management. This configuration works well but forfeits some of the QM9700’s autonomous management capabilities. For new deployments, selecting a single switch model (based on scale and management requirements) is generally recommended for operational simplicity.

What network topologies are supported by Quantum-2 switches?

Quantum-2 switches support all major network topologies used in HPC and AI infrastructure:

Fat Tree: Traditional hierarchical topology providing full bisection bandwidth. The QM9700/QM9790’s 64-port configuration enables efficient two-level Fat Tree designs for clusters with thousands of nodes.

Dragonfly+: Advanced topology offering exceptional scalability, connecting over one million nodes at 400Gb/s in just three hops. Optimal for hyperscale deployments.

SlimFly: Cost-optimized topology reducing cable count while maintaining high performance. Suitable for medium to large clusters.

Multi-dimensional Torus: Grid-like topology common in scientific computing. Supports various dimensionalities (2D, 3D, 4D) based on application requirements.

Rail-Optimized: Specialized configuration aligning with multi-rail HGX server designs, where each switch manages a dedicated communication rail.

The choice of topology depends on cluster size, budget constraints, application communication patterns, and performance requirements.
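As a rough sizing aid for the Fat Tree case, the sketch below computes the capacity of a two-level design. It assumes a non-blocking layout in which every leaf switch uses half its ports for hosts and half for uplinks, with one link from each leaf to each spine; the function name `two_level_fat_tree` is hypothetical.

```python
def two_level_fat_tree(radix: int) -> dict:
    """Size a non-blocking two-level fat tree built from radix-port switches.

    Assumption: each leaf splits its ports evenly between hosts and uplinks,
    and connects once to every spine switch.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number of at least 2")
    down_ports = radix // 2          # host-facing ports per leaf
    return {
        "leaf_switches": radix,      # each spine port feeds one leaf
        "spine_switches": down_ports,
        "hosts": radix * down_ports,
    }


if __name__ == "__main__":
    print(two_level_fat_tree(64))
```

For 64-port switches this gives 64 leaves, 32 spines, and 2,048 host ports, in line with the "thousands of nodes" figure for two-level designs.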

What cable types are compatible with Quantum-2 switches?

Quantum-2 switches utilize 32 OSFP (Octal Small Form-factor Pluggable) connectors, supporting multiple cable types:

Passive Direct Attach Copper (DAC): Most cost-effective option with zero power consumption. Available in lengths up to 3 meters for 400Gb/s operation. Ideal for top-of-rack connections and short distances.

Active Copper Cables (ACC): Extended reach up to 7 meters with moderate power consumption. Suitable for mid-rack and adjacent-rack connections.

Active Optical Cables (AOC): Longest reach spanning tens to hundreds of meters. Lightweight and flexible. Required for spine-leaf connections in large fabrics. Higher power consumption compared to copper alternatives.

Optical Transceivers + Fiber: Separate transceiver modules with standard fiber optic cables for maximum flexibility in cable routing and distance requirements.

All cable types support the full 400Gb/s bandwidth per port. Cable selection should balance cost, distance requirements, and power consumption constraints.
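The distance guidance above can be expressed as a simple lookup. This sketch uses only the reach figures quoted in this answer (3 meters for passive DAC, 7 meters for ACC); the function name `suggest_cable` is hypothetical, and actual reach should be confirmed against the specific cable part you plan to order.

```python
def suggest_cable(distance_m: float) -> str:
    """Suggest the cheapest cable class that covers a given link length.

    Thresholds are the reach figures stated in the text, not vendor limits
    verified for any particular part number.
    """
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    if distance_m <= 3:
        return "passive DAC"
    if distance_m <= 7:
        return "active copper (ACC)"
    return "optical (AOC or transceiver + fiber)"


if __name__ == "__main__":
    for d in (1.5, 5, 30):
        print(f"{d} m: {suggest_cable(d)}")
```

Cost and power budgets may still favor a different choice within the supported range, so this is a starting point rather than a rule.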

How does Quantum-2 InfiniBand integrate with NVIDIA HGX servers?

HGX servers integrate with Quantum-2 switches through NVIDIA ConnectX-7 InfiniBand network adapters. Each HGX server typically deploys multiple ConnectX-7 adapters (commonly 2-8 adapters per server) providing redundancy and maximizing aggregate bandwidth.

The integration leverages several key technologies:

GPUDirect RDMA: Enables direct memory transfers between GPUs on different servers without CPU involvement, dramatically reducing communication latency and improving efficiency.

RDMA over InfiniBand: Zero-copy data transfers eliminating memory copying overhead and reducing CPU utilization.

Multi-rail Support: HGX servers can utilize multiple independent InfiniBand connections simultaneously, each connecting to separate switch domains for maximum parallelism and fault tolerance.

SHARPv3 Acceleration: Offloads collective operations from GPUs to network fabric, freeing compute resources for actual AI workload processing.

This tight integration ensures that HGX’s powerful GPU computing resources remain fully utilized, with network communication rarely becoming a bottleneck even in the most demanding distributed AI training scenarios.
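The multi-rail idea can be illustrated with a small wiring map. This is a sketch under stated assumptions: adapter number r on every server connects to the same leaf switch for rail r, each leaf has 64 ports available, and the names `rail_wiring` and `leaf-<r>` are made up for the example.

```python
from typing import Dict, Tuple


def rail_wiring(num_servers: int, rails: int) -> Dict[Tuple[int, int], str]:
    """Map (server, adapter) pairs to leaf switches, one leaf per rail.

    Assumption: adapter r of every server lands on the leaf for rail r,
    and a leaf offers 64 ports (uplinks are ignored in this sketch).
    """
    if num_servers < 1 or rails < 1:
        raise ValueError("num_servers and rails must be at least 1")
    if num_servers > 64:
        raise ValueError("one 64-port leaf per rail cannot hold more than 64 servers")
    return {
        (server, rail): f"leaf-{rail}"
        for server in range(num_servers)
        for rail in range(rails)
    }


if __name__ == "__main__":
    plan = rail_wiring(num_servers=4, rails=8)
    print(plan[(2, 5)])  # adapter 5 of server 2 lands on the rail-5 leaf
```

Keeping each rail on its own switch means traffic between same-numbered adapters stays within one leaf, which is the property rail-optimized designs aim for.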

What is the expected lifespan and warranty of Quantum-2 switches?

NVIDIA Quantum-2 switches typically come with a 1-year standard warranty from the manufacturer. Extended warranty and support options are available through NVIDIA and authorized partners.

In terms of operational lifespan, InfiniBand switches typically serve reliably for 5-7 years in data center environments when properly maintained. However, technology refresh cycles in AI infrastructure often drive replacement sooner (3-5 years) as organizations upgrade to newer generations offering higher bandwidth and improved features.

Factors influencing lifespan include:

- Operating environment: Temperature, humidity, power quality

- Utilization levels: 24/7 operation at high bandwidth versus intermittent use

- Maintenance practices: Firmware updates, cleaning, monitoring

- Technology evolution: Newer GPU generations may benefit from upgraded networking

For mission-critical deployments, consider extended warranties, advanced hardware replacement services, and proactive monitoring through UFM to maximize uptime and longevity.

How much power do Quantum-2 switches consume?

Power consumption varies based on switch model and cable types:

QM9700 Power Consumption:

- Typical operation with passive copper cables: 747W

- Maximum power with active cables: 1,720W

- Power supplies: Dual 1+1 redundant (200-240VAC)

QM9790 Power Consumption:

- Typical operation with passive copper cables: 640W

- Maximum power with active cables: 1,610W

- Power supplies: Dual 1+1 redundant (200-240VAC)

The QM9790’s lower power consumption results from eliminating on-board management hardware (CPU, memory, storage). Both switches feature 80 GOLD+ and ENERGY STAR certification.

For deployment planning, budget for maximum power consumption per switch and account for cooling, since the data center must remove the heat the switches generate. In large deployments, the 14% difference in typical power between models can represent significant operational cost savings over the infrastructure’s lifetime.
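The planning arithmetic can be sketched as follows. The wattage figures are the ones listed above; the function names are hypothetical, and the annual estimate assumes continuous operation at typical draw for 8,760 hours per year, with cooling overhead ignored.

```python
TYPICAL_W = {"QM9700": 747, "QM9790": 640}   # typical draw, passive copper
MAX_W = {"QM9700": 1720, "QM9790": 1610}     # maximum draw, active cables

HOURS_PER_YEAR = 8760  # assumption: 24/7 operation


def fleet_budget_kw(model: str, count: int) -> float:
    """Worst-case power to provision for `count` switches, in kW."""
    return MAX_W[model] * count / 1000


def typical_saving_pct() -> float:
    """Typical-power reduction of the QM9790 relative to the QM9700."""
    delta = TYPICAL_W["QM9700"] - TYPICAL_W["QM9790"]
    return delta / TYPICAL_W["QM9700"] * 100


def annual_saving_kwh(count: int) -> float:
    """Energy saved per year by `count` QM9790s versus QM9700s at typical draw."""
    delta = TYPICAL_W["QM9700"] - TYPICAL_W["QM9790"]
    return delta * count * HOURS_PER_YEAR / 1000


if __name__ == "__main__":
    print(f"{typical_saving_pct():.1f}% lower typical draw")
    print(f"{fleet_budget_kw('QM9700', 10):.1f} kW to provision for 10 QM9700s")
    print(f"{annual_saving_kwh(100):,.0f} kWh saved per year across 100 switches")
```

Multiply the annual figure by your local electricity rate to turn it into a cost estimate.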

What software is required to manage Quantum-2 switches?

For QM9700:

The switch runs NVIDIA MLNX-OS, a comprehensive InfiniBand switch operating system providing:

- Command-Line Interface (CLI) for administrative tasks

- Web-based User Interface (WebUI) for graphical management

- SNMP support for integration with enterprise monitoring systems

- JSON APIs for programmatic control and automation

- Integrated subnet manager for automatic fabric configuration

No additional software is required for basic operation, though integration with monitoring tools and automation frameworks enhances management capabilities.

For QM9790:

The switch requires NVIDIA Unified Fabric Manager (UFM) for all management functions. UFM is a comprehensive platform providing:

- Centralized switch discovery and configuration

- Fabric-wide topology visualization

- Real-time performance monitoring and telemetry

- Predictive analytics and troubleshooting

- Automated firmware updates

- Multi-fabric management from a single console

UFM runs on dedicated management servers (physical or virtual) and includes both GUI and API interfaces for administration.

Both switches also benefit from NVIDIA HPC-X software suite for optimizing application performance over InfiniBand networks.

Conclusion: Selecting the Right Switch for Your Infrastructure

The choice between NVIDIA Quantum-2 QM9700 and QM9790 switches ultimately depends on your specific infrastructure requirements, scale, and operational philosophy. Both switches deliver industry-leading 400Gb/s InfiniBand performance with identical core specifications, but their management approaches serve different deployment scenarios.

Choose the QM9700 if you’re building a research cluster, proof-of-concept deployment, or medium-scale AI infrastructure (up to 2,000 nodes) where autonomous operation and direct switch management offer operational simplicity. The on-board management capabilities reduce deployment complexity and eliminate dependencies on external management infrastructure.

Select the QM9790 for large-scale production AI clusters, hyperscale data centers, or environments requiring centralized fabric management and advanced analytics. The power savings (14% lower typical consumption) compound significantly across hundreds of switches, while UFM integration provides enterprise-grade orchestration capabilities essential for multi-tenant cloud environments and massive AI training facilities.

Regardless of which model you choose, NVIDIA’s Quantum-2 platform represents the pinnacle of data center networking technology, delivering the bandwidth, latency, and advanced features required to unleash the full potential of modern AI infrastructure. The integration with HGX server platforms, SHARPv3 In-Network Computing acceleration, and support for hyperscale topologies ensures that your network infrastructure won’t become a bottleneck as AI workloads continue to grow in complexity and scale.

For organizations building the AI infrastructure of tomorrow, Quantum-2 InfiniBand switches provide the foundation for breakthrough performance, operational efficiency, and long-term scalability. To learn more about deploying these switches in your environment, visit the official NVIDIA Quantum-2 documentation or consult with authorized Dell Technologies partners for implementation guidance.

Last updated: December 2025