-

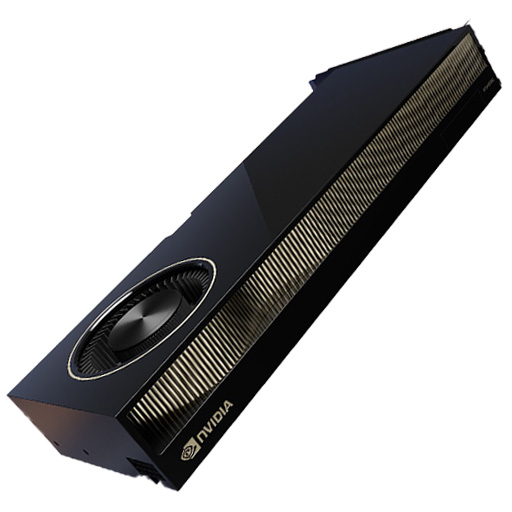

NVIDIA RTX 6000 Ada Generation Graphics Card USD9,000

-

Huawei OceanStor Dorado 6000 V6 – All-Flash NVMe Storage System for Mission-Critical Enterprise Applications USD16,000

-

HGX H200 Optimized X13 8U 8GPU Server

Rated 4.67 out of 5USD330,000 -

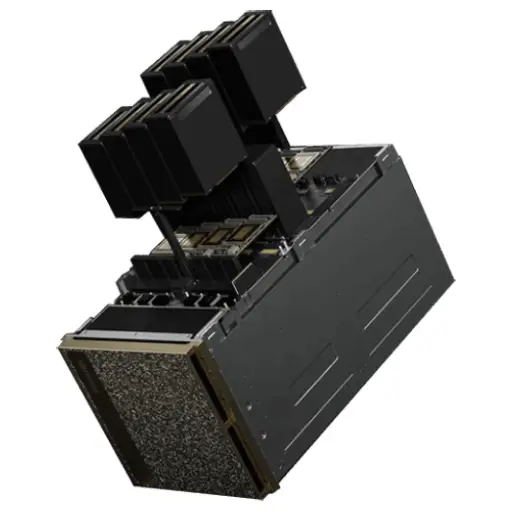

NVIDIA DGX B200 (AI Supercomputer – 8× Blackwell B200 SXM5 GPUs, 2× Intel Xeon 8570, 2TB DDR5, 34TB NVMe) USD600,000

-

Aetina MegaEdge AIP-FR68 (PCIe AI Workstation) USD15,000

-

AI Bridge LTE TS2-08: The Ultimate 8/16-Channel Edge AI Analytics Powerhouse with LTE & GPS USD4,500

Ethernet Switches for AI Data Centers: H3C S9827 vs S9855 Series

Author: ITCT Tech Editorial Unit

Reviewed By: Network Infrastructure Technical Lead

Last Updated: 2026-01-14

Reading Time: 20 minutes


Quick Answer

The H3C S9827 and S9855 series are high-performance Ethernet switches purpose-built for AI data centers. They use RoCEv2 to deliver lossless, low-latency networking comparable to InfiniBand. The S9827 is the flagship hyperscale solution, offering 800G interfaces and 102.4 Tbps of switching capacity (64 x 800G counted in both directions) for the latest GPU clusters (such as NVIDIA H100/B100). The S9855 is a cost-optimized alternative with 400G connectivity and 16 Tbps of switching capacity on most models (the 32 x 400G S9855-32D works out to 25.6 Tbps by the same count). That makes it well suited to enterprise-grade AI clusters and mid-scale machine learning environments.

Key Decision Factors

Choose the S9827 Series if you are building a large-scale AI training facility requiring 800G throughput to eliminate bottlenecks in massive distributed training jobs. Choose the S9855 Series for standard enterprise AI deployments where 400G connectivity offers the best balance of price and performance, or for aggregation layers in tiered networks. Both series support critical AI features like PFC, ECN, and EVPN-VXLAN, but the S9827 requires higher power and cooling capacity. For smaller edge inference or management networks, the H3C S5570S-28S serves as a compatible access layer option.

The rapid expansion of artificial intelligence and machine learning workloads has fundamentally transformed data center networking requirements. Modern AI data centers demand ultra-high bandwidth, minimal latency, and lossless network architectures to support intensive computational tasks involving GPU clusters and distributed training systems. Selecting the right Ethernet switch infrastructure becomes critical for organizations building or upgrading AI-ready data centers.

Ethernet Switches

H3C, a leading provider of enterprise networking solutions, offers advanced switch series specifically engineered for AI data center environments. Among their flagship products, the H3C S9827 and H3C S9855 series stand out as purpose-built solutions designed to meet the demanding requirements of artificial intelligence workloads. These switches incorporate cutting-edge technologies like RoCEv2, support for 400G/800G interfaces, and comprehensive data center features that enable seamless deployment in high-performance computing environments.

This comprehensive guide examines the technical specifications, performance characteristics, and deployment considerations for H3C’s premier data center switches, helping network architects and IT decision-makers select the optimal infrastructure for AI-driven applications.

H3C S9827 Ethernet Switches

Overview and Architecture

The H3C S9827 series is H3C’s next generation of high-density intelligent switches for premium data centers and artificial intelligence computing environments. Built on advanced silicon photonics technology, the series delivers high forwarding capacity while maintaining the energy efficiency that large-scale deployments require. The S9827 is designed as a building block for CLOS (leaf-spine) fabrics, and its non-blocking switching helps eliminate bottlenecks in AI training workflows.

At the heart of the S9827 series is support for up to 64 x 800G ports or 128 x 400G ports. That gives a total switching capacity of 102.4 Tbps, or 51.2 Tbps in each direction. This bandwidth lets the switch serve as backbone infrastructure for GPU clusters running distributed deep learning models, where inter-node communication generates enormous east-west traffic volumes. The switch accepts Linear Pluggable Optics (LPO) transceivers in every port and also allows LPO and DSP-based transceivers to be mixed, which adds deployment flexibility. Because LPO modules omit the on-module DSP, they draw less power than traditional optical modules.

H3C S9827 Series High-Density Intelligent Data Center Switches

Technical Specifications

| Feature | H3C S9827 Series Specifications |
|---|---|
| Port Density | 64 x 800G or 128 x 400G |
| Switching Capacity | Up to 102.4 Tbps |
| Forwarding Rate | Line-rate, non-blocking |
| Supported Protocols | MP-BGP EVPN, VXLAN, RoCEv2 |
| Port Splitting | 800G to 2x400G, 800G to 4x200G |
| Optical Module Support | LPO, DSP, intermixing supported |
| Redundancy | 2+1 or 2+2 power module redundancy |
| Management Interfaces | Serial console, USB, dual out-of-band |
| Airflow Options | Modular; front-to-back or back-to-front |

Key Features for AI Workloads

High-Density Port Configuration: The S9827 series provides industry-leading port density that directly addresses the connectivity requirements of modern AI servers equipped with multiple high-speed network adapters. Each 800G port can be split into multiple lower-speed interfaces, enabling flexible network topology designs such as leaf-spine architectures commonly deployed in AI data centers. This flexibility allows network designers to optimize the trade-off between port count and per-port bandwidth based on specific workload characteristics.

RoCEv2 and Lossless Networking: RDMA over Converged Ethernet version 2 (RoCEv2) is a critical technology for AI networking, enabling GPU-to-GPU communication with minimal CPU involvement. The S9827 series implements comprehensive RoCEv2 support through Priority-based Flow Control (PFC), Explicit Congestion Notification (ECN), and Enhanced Transmission Selection (ETS). Together, these mechanisms create a lossless Ethernet fabric that prevents packet drops during congestion. Without them, drops would force RDMA retransmissions that sharply reduce throughput. They can also stall or fail jobs in distributed machine learning frameworks such as PyTorch and TensorFlow.

VXLAN and EVPN Integration: The switch supports MP-BGP EVPN as the control plane for VXLAN overlay networks, enabling large-scale virtual networks without manual configuration overhead. This capability is essential in multi-tenant AI cloud environments, where workload isolation and network segmentation must be maintained without sacrificing performance. EVPN distributes MAC and IP reachability through BGP, which reduces flooding (for example through ARP suppression). It also lets VXLAN Tunnel Endpoints (VTEPs) discover each other automatically instead of requiring a manually configured full mesh of tunnels.
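EVPN only distributes reachability. The data plane is VXLAN, which wraps each tenant frame in an outer Ethernet/IPv4/UDP header plus an 8-byte VXLAN header. That header carries a 24-bit VXLAN Network Identifier (VNI), as defined in RFC 7348. The minimal sketch below builds the header and totals the 50-byte IPv4 encapsulation overhead, assuming no outer VLAN tag. That overhead is why underlay links are usually configured with a larger MTU than the servers use. The VNI value in the example is arbitrary.

```python
import struct

VXLAN_UDP_PORT = 4789  # IANA-assigned VXLAN destination port (RFC 7348)

# Outer headers added by VXLAN over IPv4 (assumption: no outer 802.1Q tag)
OUTER_ETHERNET = 14
OUTER_IPV4 = 20
OUTER_UDP = 8
VXLAN_HEADER = 8


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte RFC 7348 VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    flags = 0x08  # I flag set: the VNI field is valid
    # Layout: flags (8 bits) | reserved (24) | VNI (24) | reserved (8)
    return struct.pack("!B3xI", flags, vni << 8)


def encapsulation_overhead() -> int:
    """Bytes VXLAN adds to every tenant frame on the underlay."""
    return OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER


print(vxlan_header(10010).hex())  # 0800000000271a00
print(encapsulation_overhead())   # 50
print(2 ** 24)                    # 16777216 possible VNIs
```

The 24-bit VNI is what lets an EVPN-VXLAN fabric offer roughly 16 million isolated segments, compared with about 4,000 usable VLAN IDs.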

Advanced Management and Visibility

The S9827 series incorporates visibility features essential for managing complex AI networking environments. In-band Network Telemetry (INT) collects timestamps, device IDs, port statistics, and buffer utilization as packets traverse the network, rather than relying on periodic polling. This continuous data stream enables proactive congestion management and rapid fault identification in AI training clusters, where even brief network disruptions can extend job completion times.

Network administrators can use multiple traffic monitoring tools to analyze traffic patterns, identify hotspots, and tune load balancing. These include sFlow, NetStream, and flexible mirroring options (SPAN/RSPAN/ERSPAN). The switch also supports Precision Time Protocol (PTP) for precise clock synchronization across devices. Synchronized clocks keep telemetry timestamps from different switches comparable when engineers correlate events across a distributed training cluster.

SDN Integration: Built on a next-generation ASIC architecture, the S9827 provides enhanced Software-Defined Networking capabilities through flexible OpenFlow tables and precise ACL matching. The switch integrates with the H3C SeerEngine-DC Controller via standard protocols including OVSDB, NETCONF, and SNMP, enabling automated network deployment and configuration management. This centralized control simplifies the orchestration of complex network policies in dynamic AI environments, where resource allocation and network paths must adapt to changing workload demands.

H3C S9855 with RoCEv2

Design Philosophy and Target Applications

The H3C S9855 series addresses a different segment of the AI data center market: organizations seeking a balance between high performance and deployment flexibility. The S9827 targets hyperscale environments with its 800G capabilities. The S9855 series instead delivers 400G, 200G, and 100G connectivity options suited to mid-scale AI deployments, enterprise machine learning platforms, and distributed high-performance computing environments. The series carries the same RoCEv2-based RDMA traffic that modern AI frameworks rely on, while providing cost-optimized infrastructure for organizations scaling their AI capabilities.

H3C S9855 Series High-Density RoCEv2 Ethernet Switch

Technical Specifications

| Feature | H3C S9855 Series Specifications |
|---|---|
| Port Options | S9855-24B8D: 24 x 200G + 8 x 400G; S9855-48CD8D: 48 x 100G + 8 x 400G; S9855-40B: 40 x 200G; S9855-32D: 32 x 400G |
| Switching Capacity | 16 Tbps (S9855-24B8D, S9855-48CD8D, S9855-40B); 25.6 Tbps by port count, both directions (S9855-32D) |
| Forwarding Performance | High-density non-blocking |
| RoCEv2 Support | Full implementation with PFC, ECN, ETS |
| Power Supply | Redundant pluggable 1600W AC modules |
| Fan Configuration | Modular with airflow direction options |
| Layer Support | Layer 2/3/4 with comprehensive feature set |
| Use Cases | Core, aggregation, high-density server access |
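The capacity figures in the specification tables can be cross-checked from port counts. The sketch below assumes capacity is counted in both directions, the convention under which 64 x 800G equals 102.4 Tbps. It uses only the port layouts listed in this article.

```python
# Port layouts as listed in this article: (port count, speed in Gbps)
MODELS = {
    "S9827 (64 x 800G)": [(64, 800)],
    "S9855-24B8D": [(24, 200), (8, 400)],
    "S9855-48CD8D": [(48, 100), (8, 400)],
    "S9855-40B": [(40, 200)],
    "S9855-32D": [(32, 400)],
}


def switching_capacity_tbps(ports: list[tuple[int, int]]) -> float:
    """Sum port speeds, double for full duplex, and convert Gbps to Tbps."""
    one_way_gbps = sum(count * speed for count, speed in ports)
    return 2 * one_way_gbps / 1000


for name, ports in MODELS.items():
    print(f"{name}: {switching_capacity_tbps(ports):.1f} Tbps")
```

Under that convention, the S9855-24B8D, S9855-48CD8D, and S9855-40B each come to 16.0 Tbps. The 32 x 400G S9855-32D comes to 25.6 Tbps, so the 16 Tbps figure describes three of the four models.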

RoCEv2 Implementation Excellence

The S9855 series distinguishes itself through comprehensive RoCEv2 networking capabilities designed for RDMA applications in AI and high-performance computing. When buffers fill, Priority-based Flow Control pauses the upstream sender on the lossless priority that carries AI training traffic. This prevents the packet loss that would otherwise trigger costly retransmissions in the RDMA transport or, worse, cause training job failures. RoCEv2 runs over UDP, so TCP’s recovery mechanisms do not apply. Explicit Congestion Notification provides early warning of congestion buildup, allowing endpoints to reduce transmission rates before buffers overflow.

Enhanced Transmission Selection creates multiple traffic classes with guaranteed bandwidth allocations, enabling network administrators to separate AI training traffic from management and storage flows. This isolation keeps background operations from crowding out time-sensitive AI workloads. Combined, these technologies create a lossless Ethernet network that can approach InfiniBand performance while retaining the cost advantages and operational familiarity of standard Ethernet infrastructure.

Deployment Versatility

Multiple Form Factors: The S9855 series offers four distinct models, each optimized for specific deployment scenarios within AI data centers:

- S9855-24B8D: Combines 24 ports of 200G with 8 ports of 400G, ideal for leaf switches in spine-leaf architectures supporting mixed GPU server generations

- S9855-48CD8D: Delivers 48 ports of 100G plus 8 uplink ports of 400G, perfect for high-density server aggregation layers

- S9855-40B: Provides 40 ports of 200G for uniform high-speed connectivity in homogeneous server environments

- S9855-32D: Offers 32 ports of pure 400G for maximum per-port bandwidth in GPU-dense clusters

This variety allows organizations to select the precise configuration matching their infrastructure requirements without overprovisioning or compromising performance. The ability to deploy different S9855 models within the same data center provides architectural flexibility as AI workload requirements evolve.

Data Center Feature Completeness

Beyond its RoCEv2 capabilities, the S9855 series implements a full suite of modern data center switching features. Multichassis Link Aggregation (M-LAG) provides device-level redundancy. Two physical switches appear as a single device to dual-homed servers at the link-aggregation layer while keeping separate control planes. Operation therefore continues during maintenance windows or component failures. Because each M-LAG member runs its own software, administrators can upgrade the member devices one at a time, minimizing disruption to production AI workloads.

The switch supports comprehensive VXLAN capabilities for network virtualization, essential in cloud-based AI platforms serving multiple tenants or projects with isolation requirements. Quality of Service (QoS) mechanisms provide fine-grained traffic prioritization, ensuring that interactive model inference workloads receive consistent low latency even when sharing infrastructure with batch training jobs. Advanced security features including 802.1X authentication, ACLs, and port security protect sensitive AI models and training data from unauthorized access.

H3C S5570S-28S

Enterprise-Grade Access Layer Solution

While the S9827 and S9855 series target core and aggregation layers in large AI data centers, the H3C S5570S-28S serves as an enterprise-grade access layer switch suitable for smaller AI deployments, research laboratories, and departmental machine learning clusters. This Layer 3 managed switch delivers intelligent features typically found only in higher-tier equipment, making it an economical choice for organizations beginning their AI journey or deploying edge inference systems.

Technical Overview

| Specification | H3C S5570S-28S Details |
|---|---|
| Port Configuration | 24 x 10/100/1000BASE-T + 4 x 1G/10G SFP+ |
| Switching Capacity | 128 Gbps |
| Packet Forwarding Rate | 96 Mpps |
| Flash Memory | 512 MB |
| SDRAM | 1 GB |
| Power Supply | Dual AC/DC support, redundant option |
| Layer 3 Features | Static routing, RIP, OSPF |
| Management | Web UI, CLI, SNMP v1/v2c/v3 |
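The forwarding rate in the table is consistent with wire-speed forwarding of minimum-size frames. A 64-byte frame occupies 84 bytes on the wire once the preamble, start-of-frame delimiter, and inter-packet gap are counted. The sketch below uses that fact to derive the line-rate packet rate and the bidirectional switching capacity from the port mix alone.

```python
# Per-frame wire overhead: 7-byte preamble + 1-byte SFD + 12-byte inter-packet gap
WIRE_OVERHEAD_BYTES = 20
MIN_FRAME_BYTES = 64


def line_rate_pps(link_bps: float, frame_bytes: int = MIN_FRAME_BYTES) -> float:
    """Maximum frames per second on one link at the given frame size."""
    return link_bps / ((frame_bytes + WIRE_OVERHEAD_BYTES) * 8)


ports = [(24, 1e9), (4, 10e9)]  # S5570S-28S: 24 x 1G + 4 x 10G
total_mpps = sum(n * line_rate_pps(rate) for n, rate in ports) / 1e6
capacity_gbps = 2 * sum(n * rate for n, rate in ports) / 1e9  # both directions
print(f"{total_mpps:.2f} Mpps, {capacity_gbps:.0f} Gbps")  # 95.24 Mpps, 128 Gbps
```

The result, about 95.24 Mpps, fits within the 96 Mpps rating. The 128 Gbps switching capacity is the 64 Gbps of port bandwidth counted in both directions.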

Use Cases in AI Environments

The S5570S-28S excels in several AI-adjacent deployment scenarios. In research laboratories, it provides sufficient bandwidth to connect workstations, small GPU servers, and storage systems, while its Layer 3 routing capabilities segment traffic between different research projects. Its 10G SFP+ uplinks connect toward the higher-tier S9855 or S9827 network, giving researchers access to centralized GPU clusters. Submitting jobs and retrieving results needs far less bandwidth than the GPU-to-GPU traffic that stays inside the cluster fabric.

For edge AI inference deployments, the S5570S-28S delivers reliable connectivity for edge servers running trained models in production. The switch’s QoS capabilities ensure that real-time inference requests receive priority over background telemetry and logging traffic. Advanced features like VLAN support and access control lists enable secure segmentation between public-facing inference endpoints and internal management networks.

Enterprise Integration Features

The S5570S-28S supports comprehensive enterprise networking protocols, including LACP for link aggregation, stacking for simplified management of multiple units, and extensive VLAN capabilities for network segmentation. PoE support (in the S5570S-28S-HPWR-EI variant) enables power delivery to IP cameras and IoT sensors used in AI vision applications, consolidating power and data connectivity.

Security features include 802.1X port-based network access control, RADIUS and TACACS+ authentication support, and advanced ACLs for traffic filtering. These capabilities protect AI infrastructure from unauthorized access while maintaining the flexibility needed for legitimate research and production activities. The switch’s intelligent traffic management includes sFlow monitoring and port mirroring, providing visibility into network behavior essential for troubleshooting application performance issues.

All AI Network Solutions

Comprehensive Infrastructure Considerations

Building a complete AI network solution extends far beyond selecting individual switch models. Organizations must consider the entire infrastructure stack, including optical transceivers, cabling infrastructure, network management platforms, and integration with existing data center systems. The H3C portfolio provides end-to-end solutions addressing each of these considerations while maintaining interoperability with multi-vendor environments.

Optical Transceiver Ecosystem

H3C offers a comprehensive range of optical transceivers optimized for different distance and performance requirements:

Short-Range Options:

- QSFPDD-400G-SR8-MM850: 100m reach over OM4 multimode fiber, ideal for intra-rack connections

- QSFP56-200G-SR4-MM850: Cost-effective 200G connectivity for leaf-spine uplinks

- QSFP-100G-SR4-MM850: Legacy 100G support for mixed-generation deployments

Medium-Range Options:

- QSFPDD-400G-FR4-WDM1300: 2km single-mode reach for inter-building connections

- QSFP-100G-PSM4-SM1310: 500m parallel single-mode for campus distribution

Long-Range Options:

- QSFPDD-400G-LR8-WDM1300: 10km reach for data center interconnect (DCI)

- QSFP-40G-ER4-WDM1300: 40km legacy connectivity for metro networks

The availability of Direct Attach Copper (DAC) and Active Optical Cables (AOC) in various lengths provides cost-effective solutions for top-of-rack and adjacent-rack connections, reducing both capital expenses and power consumption compared to traditional optical transceivers.

Network Topology Design Patterns

Leaf-Spine Architecture: Modern AI data centers predominantly deploy leaf-spine topologies, where S9827 or S9855 switches serve as spine switches providing non-blocking connectivity between leaf switches. Each GPU server connects to leaf switches using high-speed interfaces (100G, 200G, or 400G depending on server generation). Leaf-to-spine connections typically use the maximum available bandwidth (400G or 800G). Traffic between GPU nodes on different leaves therefore always crosses exactly three switches (leaf, spine, leaf). The result is the consistent latency that collective communication operations in distributed training depend on.
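The "non-blocking" claim can be put in numbers with the textbook sizing rule for a two-tier Clos built from identical switches. With k ports per switch, such a fabric offers at most k²/2 server-facing ports at 1:1. The sketch assumes the S9827’s 128 x 400G breakout, equal port speeds, and one link per leaf-spine pair. Real designs reserve ports for storage, management, and border links, so treat the results as upper bounds. The three-tier value uses the classic fat-tree formula (k³/4) for comparison.

```python
def two_tier_fabric(radix: int) -> dict[str, int]:
    """Size a non-blocking (1:1) two-tier leaf-spine fabric of identical switches.

    Assumes every port runs at the same speed and each leaf has exactly one
    link to every spine: half of a leaf's ports face servers, half face spines.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number >= 2")
    spines = radix // 2           # one uplink from each leaf to each spine
    leaves = radix                # each spine port terminates one leaf uplink
    server_ports = leaves * (radix // 2)
    return {"leaves": leaves, "spines": spines, "server_ports": server_ports}


def three_tier_fat_tree_hosts(radix: int) -> int:
    """Host ports in a classic three-tier fat-tree of radix-port switches."""
    return radix ** 3 // 4


# S9827 with every port broken out to 400G: 128 ports per switch
print(two_tier_fabric(128))            # {'leaves': 128, 'spines': 64, 'server_ports': 8192}
print(three_tier_fat_tree_hosts(128))  # 524288
```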

Fat-Tree Variations: Organizations requiring massive scale deploy multi-tier fat-tree architectures, where additional S9827 spine layers provide more bisection bandwidth. This design accommodates tens of thousands of GPU nodes while maintaining non-blocking performance for common all-reduce communication patterns. The H3C switches support ECMP (Equal-Cost Multi-Path) routing, which spreads flows across all equal-cost paths to prevent hotspots and maximize throughput. ECMP hashes each flow to a single path, however, so a few very large RDMA flows can still land on the same link. Hash settings and load balancing therefore deserve attention in AI fabrics.
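Hash-based ECMP can be illustrated with a short sketch. Each flow’s 5-tuple is hashed to pick one of the equal-cost uplinks, so all packets of a flow follow one path and stay in order. SHA-256 stands in for the switch ASIC’s hardware hash; this is an assumption, not the algorithm any particular switch uses. The flows are hypothetical RoCEv2 flows to UDP destination port 4791, with differing UDP source ports, since RoCEv2 NICs vary the source port to give ECMP more entropy.

```python
import hashlib
from collections import Counter


def ecmp_next_hop(src_ip: str, dst_ip: str, proto: int,
                  src_port: int, dst_port: int, n_paths: int) -> int:
    """Pick one of n_paths equal-cost uplinks by hashing the flow 5-tuple.

    SHA-256 is a stand-in for a switch's hardware hash function.
    """
    key = f"{src_ip}|{dst_ip}|{proto}|{src_port}|{dst_port}".encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % n_paths


# Hypothetical RoCEv2 flows (UDP, destination port 4791) between two servers;
# the source port differs per flow, standing in for different queue pairs.
flows = [("10.0.1.1", "10.0.2.1", 17, 49152 + i, 4791) for i in range(16)]
load = Counter(ecmp_next_hop(*flow, n_paths=8) for flow in flows)
print(sorted(load.items()))  # (path index, number of flows) pairs
```

With only 16 flows spread over 8 paths, the counts are almost never perfectly even. That unevenness is the collision effect that matters when a handful of large flows dominate training traffic.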

Storage Network Integration: AI workloads generate enormous data flows between GPU clusters and storage systems. Organizations typically deploy separate storage networks using 100G or 200G connections from S9855 switches to storage arrays, preventing training data transfers from competing with GPU-to-GPU communication. The switches’ comprehensive QoS capabilities enable traffic prioritization when converged networks combine compute and storage traffic on shared infrastructure.

Management and Orchestration Platforms

The H3C SeerEngine-DC Controller provides centralized management for H3C data center switches, enabling automated provisioning, configuration management, and policy enforcement across large-scale deployments. The controller’s integration with standard orchestration platforms allows AI job schedulers to dynamically configure network resources matching computational resource allocation, ensuring optimal performance for each training job.

Network telemetry integration with AI operations platforms enables closed-loop optimization, where machine learning algorithms analyze network performance data and automatically adjust traffic engineering policies. This approach reduces manual configuration errors and adapts network behavior to changing workload characteristics without human intervention.

Best Practices for AI Network Deployment

Cabling Infrastructure: Organizations should invest in high-quality structured cabling systems that support current and future bandwidth requirements. Single-mode fiber infrastructure provides upgrade flexibility. Sites can run 100G transceivers today and migrate to 400G or 800G by replacing only the optical modules. That holds provided the installed fiber count and connector type (duplex LC or parallel MPO) match what the new optics require. Proper cable management prevents damage to delicate fiber connectors and simplifies troubleshooting when failures occur.

Power and Cooling: High-density switches like the S9827 generate substantial heat requiring adequate cooling infrastructure. Organizations should verify that rack power distribution and cooling capacity match switch requirements, considering redundancy needs. The S9827’s support for multiple airflow directions enables optimization based on hot-aisle/cold-aisle data center designs.

Monitoring and Alerting: Comprehensive monitoring systems should track switch performance metrics including port utilization, buffer congestion events, error counters, and temperature readings. Integration with incident management platforms ensures prompt response to network issues before they impact AI training jobs. Historical performance data enables capacity planning and identification of gradual degradation indicating impending component failures.
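Switches expose most of these metrics as cumulative counters, so an alerting pipeline has to turn two samples into a rate. The minimal sketch below assumes 64-bit counters and uses a hypothetical pause-frame counter and alert threshold. The collector itself (SNMP, streaming telemetry, or otherwise) is out of scope.

```python
from dataclasses import dataclass

COUNTER_MODULUS = 2 ** 64  # assumption: 64-bit cumulative counters


@dataclass
class Sample:
    timestamp_s: float  # when the counter was read
    value: int          # cumulative counter value at that time


def counter_rate(prev: Sample, curr: Sample, modulus: int = COUNTER_MODULUS) -> float:
    """Events per second between two samples, tolerating one counter wrap.

    A counter reset (for example after a reboot) looks the same as a wrap
    here, so production code should detect device restarts separately.
    """
    elapsed = curr.timestamp_s - prev.timestamp_s
    if elapsed <= 0:
        raise ValueError("samples must be in increasing time order")
    return ((curr.value - prev.value) % modulus) / elapsed


# Hypothetical per-port PFC pause-frame counter polled 30 s apart
PAUSE_FRAMES_PER_S_LIMIT = 50.0  # hypothetical alert threshold
rate = counter_rate(Sample(0.0, 1_000), Sample(30.0, 4_000))
if rate > PAUSE_FRAMES_PER_S_LIMIT:
    print(f"ALERT: {rate:.1f} pause frames/s exceeds {PAUSE_FRAMES_PER_S_LIMIT}")
```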

Security Hardening: AI networks contain valuable intellectual property in the form of proprietary models and training data. Organizations should implement defense-in-depth security including management network isolation, role-based access controls, encrypted management protocols (SSH, HTTPS), and regular security audits. Network segmentation prevents lateral movement by potential attackers, limiting the blast radius of any security compromise.

Comparative Analysis: S9827 vs S9855

Performance and Capacity

The fundamental distinction between the S9827 and S9855 series lies in their target bandwidth capabilities and switching capacity. The S9827’s support for 800G interfaces and 102.4 Tbps aggregate capacity positions it as the premier choice for hyperscale AI facilities operating the latest generation of GPU clusters, where individual servers may require 400G or 800G network connections for optimal performance. Organizations deploying NVIDIA A100, H100, or B100 GPU systems in large clusters will find the S9827’s capabilities essential for eliminating network bottlenecks.

Conversely, the S9855’s 400G maximum interface speed and 16 Tbps switching capacity (25.6 Tbps by port count on the 32 x 400G S9855-32D) target mid-scale deployments, where GPU counts range from dozens to hundreds rather than thousands. This series handles previous-generation GPUs (NVIDIA V100, A100) effectively while providing headroom for future expansion. The cost differential between 400G and 800G infrastructure makes the S9855 the economically rational choice for organizations whose current AI workloads do not yet justify hyperscale network investment.

Deployment Scenarios

S9827 Ideal Use Cases:

- Hyperscale AI training facilities with 1000+ GPU clusters

- Cloud service providers offering AI/ML platforms at scale

- Research institutions operating national AI computing resources

- Organizations running foundation model training requiring massive parallelism

- Data centers with 800G-capable GPU servers and storage systems

S9855 Ideal Use Cases:

- Enterprise AI centers with 50-500 GPU nodes

- Departmental machine learning clusters in large organizations

- Research laboratories and university AI computing facilities

- Organizations upgrading from 100G/200G to 400G infrastructure

- Multi-tenant AI platforms requiring cost-optimized infrastructure

Total Cost of Ownership

Beyond initial hardware acquisition costs, organizations must consider the total cost of ownership including power consumption, cooling requirements, maintenance, and operational complexity. The S9827’s advanced technology delivers superior performance per watt compared to earlier generations, but its absolute power consumption and cooling requirements exceed the S9855 due to higher port densities and switching capacities.

The S9855’s 400G maximum port speed aligns with the current price sweet spot for optical transceivers, since 400G modules have reached volume production and lower costs. In contrast, 800G modules remained relatively expensive in 2024, though prices continue to decline as deployment volumes increase. Organizations must balance immediate cost considerations against future-proofing benefits when selecting between these series.

Cost Optimization Strategies:

- Deploy S9827 switches only where 800G bandwidth is demonstrably required

- Use S9855 switches for aggregation layers where 400G provides sufficient capacity

- Leverage port breakout capabilities to maximize port count per switch investment

- Plan phased upgrades allowing infrastructure expansion matching GPU deployment schedules

- Consider leasing or consumption-based pricing models for rapidly evolving AI infrastructure

RoCEv2 Technology Deep Dive

Understanding RDMA over Converged Ethernet

Remote Direct Memory Access (RDMA) technology enables direct memory-to-memory data transfers between systems without CPU involvement, dramatically reducing latency and eliminating CPU overhead during network communication. Traditional TCP/IP networking requires the CPU to process every packet, copying data between application buffers and network buffers multiple times, introducing microseconds of latency and consuming valuable CPU cycles better spent on AI model training computations.

RoCEv2 brings RDMA capabilities to standard Ethernet networks, enabling organizations to achieve InfiniBand-like performance while leveraging familiar Ethernet infrastructure, operational procedures, and cost economics. This convergence proves particularly valuable in AI data centers where GPU-to-GPU communication patterns generate enormous network traffic during collective operations like all-reduce, all-gather, and broadcast that dominate distributed training workloads.

Technical Implementation

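RoCEv2 carries the InfiniBand transport protocol inside UDP/IP packets (UDP destination port 4791), which makes RDMA traffic routable across Layer 3 networks. Because that transport handles packet loss poorly, RoCEv2 deployments rely on three foundational technologies that turn Ethernet into a lossless fabric: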

Priority-based Flow Control (PFC): PFC provides per-priority-level flow control, allowing switches to send pause frames for specific traffic classes when buffers approach capacity. This selective pausing prevents AI training traffic from being dropped during congestion events while allowing best-effort traffic to continue flowing. Proper PFC configuration requires careful tuning to prevent head-of-line blocking and ensure fairness across flows.
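The pause frames that PFC sends have a small fixed format defined in IEEE 802.1Qbb. Each is a MAC Control frame (EtherType 0x8808) with opcode 0x0101, a class-enable vector, and eight pause timers measured in quanta of 512 bit times. The sketch builds that frame (without the FCS) and converts a timer into wall-clock time. Pausing priority 3 in the example follows a common choice for RoCE traffic and is an assumption, as is the source MAC.

```python
import struct

PFC_DEST_MAC = bytes.fromhex("0180c2000001")  # reserved MAC Control multicast address
MAC_CONTROL_ETHERTYPE = 0x8808
PFC_OPCODE = 0x0101                           # 0x0001 is classic 802.3x PAUSE
QUANTUM_BITS = 512                            # one pause quantum = 512 bit times


def pfc_payload(pause_quanta: dict[int, int]) -> bytes:
    """MAC Control payload of a PFC frame: opcode, enable vector, 8 timers.

    pause_quanta maps priority (0-7) to pause time in quanta (0-65535).
    A time of 0 on an enabled priority tells the sender to resume (XON).
    """
    enable_vector = 0
    times = [0] * 8
    for prio, quanta in pause_quanta.items():
        if not (0 <= prio <= 7 and 0 <= quanta <= 0xFFFF):
            raise ValueError("priority must be 0-7 and quanta 0-65535")
        enable_vector |= 1 << prio
        times[prio] = quanta
    return struct.pack("!HH8H", PFC_OPCODE, enable_vector, *times)


def pfc_frame(src_mac: bytes, pause_quanta: dict[int, int]) -> bytes:
    """Full Ethernet frame without FCS, padded to the 60-byte minimum."""
    header = PFC_DEST_MAC + src_mac + struct.pack("!H", MAC_CONTROL_ETHERTYPE)
    return (header + pfc_payload(pause_quanta)).ljust(60, b"\x00")


def pause_duration_us(quanta: int, link_gbps: float) -> float:
    """Wall-clock pause length: quanta x 512 bit times at the link rate."""
    return quanta * QUANTUM_BITS / (link_gbps * 1e3)


payload = pfc_payload({3: 0xFFFF})  # pause priority 3 for the maximum time
print(len(payload), payload.hex())
print(f"{pause_duration_us(0xFFFF, 400):.2f} us at 400G")  # 83.88 us at 400G
```

Even the maximum timer lasts under 84 µs at 400 Gbps, which is why switches keep refreshing pause frames while congestion persists and send a zero timer (XON) to resume early.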

Explicit Congestion Notification (ECN): Rather than waiting for buffer overflow and packet loss, switches mark packets passing through congested queues. In RoCEv2, the receiving NIC answers marked packets with Congestion Notification Packets (CNPs), and the sender lowers its rate proactively. This is how DCQCN, the congestion control scheme commonly used with RoCEv2, operates. This early congestion detection enables smoother traffic management and avoids the aggressive rate reduction triggered by actual packet loss. ECN is particularly effective in AI workloads, where bursty collective communication patterns can cause transient congestion.
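Switches decide which packets to mark using a queue-depth threshold curve. The sketch models the WRED-style profile that DCQCN-based RoCEv2 deployments commonly configure. Below Kmin nothing is marked, the marking probability then ramps linearly to Pmax at Kmax, and above Kmax every packet is marked. The threshold values are hypothetical; real profiles come from the switch’s ECN/WRED configuration and NIC vendor guidance.

```python
def ecn_mark_probability(queue_kb: float, kmin_kb: float, kmax_kb: float,
                         pmax: float) -> float:
    """Probability that a packet is ECN-marked at the given queue depth."""
    if not 0 <= kmin_kb < kmax_kb or not 0 < pmax <= 1:
        raise ValueError("need 0 <= Kmin < Kmax and 0 < Pmax <= 1")
    if queue_kb <= kmin_kb:
        return 0.0                      # queue short enough: never mark
    if queue_kb >= kmax_kb:
        return 1.0                      # queue too deep: mark every packet
    # Linear ramp from 0 at Kmin to Pmax at Kmax
    return pmax * (queue_kb - kmin_kb) / (kmax_kb - kmin_kb)


KMIN_KB, KMAX_KB, PMAX = 100, 400, 0.2  # hypothetical thresholds
for depth_kb in (50, 250, 500):
    print(depth_kb, ecn_mark_probability(depth_kb, KMIN_KB, KMAX_KB, PMAX))
```

Lower thresholds make senders back off earlier and keep queues (and latency) short, at the cost of reacting to bursts that would have drained on their own.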

Enhanced Transmission Selection (ETS): ETS divides link capacity among multiple priority classes. Each traffic type is guaranteed a configured share, and classes can borrow bandwidth that others leave unused. In AI environments, administrators typically configure ETS to guarantee bandwidth for training traffic. They also cap the share that management and storage traffic can claim under contention, so those flows cannot crowd out time-sensitive GPU communication.
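ETS behavior under contention can be approximated as weighted max-min sharing. Each class is guaranteed its weight’s share of the link, and bandwidth an under-loaded class leaves idle is redistributed to the others in proportion to their weights. This is a simplified model for reasoning about weights, not the switch’s actual scheduler. The class names, weights, and demands in the example are hypothetical.

```python
def ets_allocate(link_gbps: float, weights: dict[str, float],
                 demand_gbps: dict[str, float]) -> dict[str, float]:
    """Weighted max-min sharing of one link among traffic classes."""
    alloc = {tc: 0.0 for tc in weights}
    active = {tc for tc in weights if demand_gbps.get(tc, 0.0) > 0}
    remaining = link_gbps
    while active and remaining > 1e-9:
        total_weight = sum(weights[tc] for tc in active)
        # Split what is left among still-hungry classes by weight
        share = {tc: remaining * weights[tc] / total_weight for tc in active}
        remaining = 0.0
        for tc in list(active):
            need = demand_gbps[tc] - alloc[tc]
            if need <= share[tc]:
                alloc[tc] += need                # demand satisfied
                remaining += share[tc] - need    # hand back the unused part
                active.remove(tc)
            else:
                alloc[tc] += share[tc]           # still wants more
    return alloc


alloc = ets_allocate(
    400,
    weights={"roce": 60, "storage": 30, "mgmt": 10},
    demand_gbps={"roce": 350, "storage": 50, "mgmt": 5},
)
print(alloc)
```

Here RoCE traffic ends up with 345 Gbps, more than its 240 Gbps guarantee, because storage and management use only 55 Gbps of their combined 160 Gbps share.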

Performance Benefits for AI Workloads

The performance advantages of RoCEv2 in AI contexts manifest across multiple dimensions:

Latency Reduction: RDMA eliminates CPU involvement in data transfers, reducing end-to-end latency from tens of microseconds (TCP/IP) to single-digit microseconds. This latency reduction becomes critical in distributed training where synchronization operations occur thousands of times per second. Every microsecond saved in network communication translates to increased training throughput and reduced time-to-model convergence.

CPU Efficiency: By offloading network processing to the NIC hardware, RoCEv2 frees CPU cores for productive computation rather than packet processing. In GPU-accelerated systems, this efficiency gain may appear modest since GPUs handle most computation. However, the CPU must still coordinate GPU operations, prepare data, and manage the overall training pipeline. Reducing CPU network overhead ensures these critical tasks proceed without delay.

Throughput Maximization: Lossless networking means transmitted packets reach their destination without loss-driven retransmission, enabling sustained line-rate performance even under heavy load. Traditional TCP networks lose throughput during congestion. Packet loss makes TCP cut its congestion window sharply and back off exponentially on repeated timeouts. A properly tuned RoCEv2 fabric avoids these loss-driven slowdowns and maintains consistently high throughput under load.

Scalability: The combination of low latency and high throughput enables scaling distributed training to thousands of GPUs while maintaining efficiency. Training jobs that would be communication-bound on traditional Ethernet networks achieve near-linear scaling on properly configured RoCEv2 infrastructure, allowing organizations to train larger models faster by adding more compute resources.
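The scaling argument can be made concrete with the standard alpha-beta cost model for a ring all-reduce. Ring is one of the algorithms NCCL can use; it also uses trees and others, so this is a simplification. A ring runs 2(N-1) steps, each paying a fixed latency, and each GPU sends 2(N-1)/N times the gradient size in total. The gradient size, GPU count, and per-step latency below are hypothetical.

```python
def ring_allreduce_seconds(message_bytes: float, n_gpus: int,
                           link_gbps: float, per_step_latency_us: float) -> float:
    """Alpha-beta estimate: T = 2(N-1)*alpha + 2(N-1)/N * S/B.

    The reduce-scatter and all-gather phases take N-1 steps each; every step
    moves message_bytes / N per GPU over its link.
    """
    if n_gpus < 2:
        return 0.0
    steps = 2 * (n_gpus - 1)
    bytes_per_gpu = steps / n_gpus * message_bytes
    bandwidth_term = bytes_per_gpu * 8 / (link_gbps * 1e9)
    latency_term = steps * per_step_latency_us * 1e-6
    return bandwidth_term + latency_term


GRADIENT_BYTES = 1e9  # hypothetical 1 GB of gradients per step
for gbps in (100, 400):
    t = ring_allreduce_seconds(GRADIENT_BYTES, n_gpus=64, link_gbps=gbps,
                               per_step_latency_us=5)
    print(f"{gbps}G: {t * 1e3:.1f} ms")
```

With these assumptions, one all-reduce takes roughly 158 ms at 100G versus 40 ms at 400G. As N grows, the bandwidth term approaches 2S/B and stops depending on N, while the latency term keeps growing, so both link speed and per-hop latency matter for scaling.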

Deployment Best Practices

Successfully deploying RoCEv2 networks requires attention to configuration details that differ from traditional Ethernet setups:

Network Topology: RoCEv2 performs best on non-blocking (1:1) fabrics, where every path provides sufficient bandwidth for expected traffic patterns. Where full non-blocking capacity is not affordable, keep leaf-spine oversubscription low (2:1 or better) to prevent the persistent congestion that would trigger constant flow control.
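Oversubscription on a leaf is server-facing bandwidth divided by spine-facing bandwidth. The sketch computes it exactly with fractions for two S9855 port layouts from this article. It assumes every 400G port on the S9855-48CD8D serves as a spine uplink. For the S9855-32D, it assumes a hypothetical even split of 16 ports down and 16 up.

```python
from fractions import Fraction


def oversubscription(downlinks: list[tuple[int, int]],
                     uplinks: list[tuple[int, int]]) -> Fraction:
    """Server-facing / spine-facing bandwidth on one leaf (1 = non-blocking).

    Each list holds (port count, speed in Gbps) pairs.
    """
    down = sum(n * gbps for n, gbps in downlinks)
    up = sum(n * gbps for n, gbps in uplinks)
    return Fraction(down, up)


ratio = oversubscription([(48, 100)], [(8, 400)])  # S9855-48CD8D as a leaf
print(f"{ratio.numerator}:{ratio.denominator}")      # 3:2, i.e. 1.5:1
print(oversubscription([(16, 400)], [(16, 400)]))   # S9855-32D split 16/16 -> 1
```

The S9855-48CD8D lands at 1.5:1, inside the 2:1 guideline, while the evenly split S9855-32D is non-blocking.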

Buffer Sizing: Appropriate buffer allocation prevents packet loss during microbursts while avoiding excessive buffering that increases latency. The H3C S9827 and S9855 switches provide configurable buffer profiles optimized for AI workloads with different packet size distributions and burst characteristics.
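Buffer sizing for lossless classes includes PFC headroom. After a switch sends XOFF, data already on the wire, or about to be, keeps arriving. A rough estimate adds the cable round trip at line rate, two maximum-size frames, and the upstream port’s reaction time. The sketch assumes about 5 ns/m propagation in fiber and a hypothetical 1 µs reaction time. It is a back-of-envelope approximation, not H3C’s buffer-profile calculation.

```python
FIBER_DELAY_NS_PER_M = 5.0  # approx. propagation delay in optical fiber


def pfc_headroom_bytes(link_gbps: float, cable_m: float, mtu_bytes: int,
                       response_ns: float = 0.0) -> int:
    """Rough per-priority PFC headroom: bytes that can still arrive after XOFF.

    Counts the cable round trip (pause frame out, in-flight data back), one
    max-size frame the upstream port may already be sending, one the local
    port may be sending ahead of the pause frame, and the upstream reaction
    time. Vendor formulas add further terms, so treat this as a lower bound.
    """
    bytes_per_ns = link_gbps / 8  # 1 Gbps carries 1 bit per nanosecond
    round_trip_bytes = 2 * cable_m * FIBER_DELAY_NS_PER_M * bytes_per_ns
    reaction_bytes = response_ns * bytes_per_ns
    return int(round_trip_bytes + 2 * mtu_bytes + reaction_bytes)


# Hypothetical link: 400G, 100 m of fiber, 9216-byte jumbo MTU, 1 us reaction
print(pfc_headroom_bytes(400, 100, 9216, response_ns=1000))  # 118432
```

At 400G, every 100 m of cable adds about 50 KB of headroom per lossless priority per port. Long links and many lossless classes therefore consume shared buffer quickly.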

QoS Configuration: Proper Quality of Service configuration ensures RoCEv2 traffic receives appropriate priority treatment without starving other traffic types. Organizations typically configure three to five priority classes separating AI training traffic, storage traffic, management traffic, and best-effort traffic.
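A class plan like this can be written down and sanity-checked before it is pushed to NICs and switches. The sketch assumes four data classes plus a separate queue for RoCEv2 congestion notification packets. Every DSCP value, priority, and weight is a hypothetical example. DSCP 26 on priority 3 for RoCE and DSCP 48 on priority 6 for CNPs follow conventions often seen in RoCE deployment guides. The function checks that classes do not share markings and that ETS weights total 100%, then returns the priorities that need PFC.

```python
# Hypothetical plan: name -> (DSCP, 802.1p priority, lossless?, ETS weight %)
CLASS_PLAN = {
    "roce":        (26, 3, True, 60),
    "storage":     (18, 2, False, 20),
    "management":  (16, 1, False, 10),
    "best_effort": (0, 0, False, 10),
}
# Congestion notification packets usually get their own strict-priority queue
CNP_DSCP, CNP_PRIORITY = 48, 6


def pfc_priorities(plan: dict[str, tuple[int, int, bool, int]]) -> list[int]:
    """Sanity-check a class plan and return the priorities that need PFC."""
    dscps = [dscp for dscp, _, _, _ in plan.values()] + [CNP_DSCP]
    prios = [prio for _, prio, _, _ in plan.values()] + [CNP_PRIORITY]
    if len(set(dscps)) != len(dscps) or len(set(prios)) != len(prios):
        raise ValueError("each class needs its own DSCP value and priority")
    if sum(weight for _, _, _, weight in plan.values()) != 100:
        raise ValueError("ETS weights of the weighted classes must add up to 100")
    return sorted(prio for _, prio, lossless, _ in plan.values() if lossless)


print(pfc_priorities(CLASS_PLAN))  # [3]
```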

End-to-End Design: RoCEv2 requires support across the entire network path including server NICs, switches, and receiving endpoints. Mixed environments where some paths support RoCEv2 while others do not can create performance anomalies and troubleshooting challenges. Organizations should deploy RoCEv2 systematically across entire failure domains.

Integration with AI Frameworks

PyTorch and TensorFlow Optimization

Modern AI frameworks support RDMA communication backends, enabling distributed training to use RoCEv2 without application code modifications. PyTorch’s Distributed Data Parallel (DDP) delegates GPU communication to the NCCL (NVIDIA Collective Communications Library) backend. NCCL detects RDMA-capable (InfiniBand or RoCE) network adapters and uses them when available. TensorFlow’s multi-worker distribution strategies can also use NCCL for collective communication and benefit from RoCEv2 in the same way.

Organizations deploying H3C S9827 or S9855 switches should ensure their AI framework configurations specify appropriate network backends. For PyTorch, this means selecting NCCL as the distributed communication backend. Users of either framework should verify that NCCL detects and uses the RDMA interfaces rather than falling back to TCP/IP sockets. Proper configuration can improve training throughput by 30-50% over TCP/IP networking for communication-intensive models. The actual gain depends on how much of each training step is spent communicating.
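A minimal PyTorch sketch follows, assuming the job is launched with torchrun (which supplies the rank and rendezvous variables) on servers with RoCE-capable NICs. The environment variables are real NCCL settings. Every value shown (HCA names, GID index, traffic class, interface name) is a placeholder to replace with what `ibv_devices` and your NIC tooling report. The traffic class should match the DSCP of the switch’s lossless class. With `NCCL_DEBUG=INFO`, the NCCL log shows whether it selected the `NET/IB` transport (RDMA, including RoCE) or fell back to `NET/Socket` (TCP).

```python
import os

# Placeholder values: adapt to your servers and switch QoS plan.
NCCL_ROCE_ENV = {
    "NCCL_IB_HCA": "mlx5_0,mlx5_1",  # RDMA NICs NCCL may use (hypothetical names)
    "NCCL_IB_GID_INDEX": "3",         # GID entry for RoCEv2 over IPv4 on many setups
    "NCCL_IB_TC": "106",              # IP traffic class: DSCP 26 << 2, plus ECT(0) (0b10)
    "NCCL_SOCKET_IFNAME": "eth0",     # interface for bootstrap traffic (hypothetical)
    "NCCL_DEBUG": "INFO",             # log shows NET/IB (RDMA) vs NET/Socket (TCP)
}


def init_distributed() -> None:
    """Initialize torch.distributed with the NCCL backend (run under torchrun)."""
    # Imported here so the settings above can be inspected without PyTorch.
    import torch.distributed as dist

    for key, value in NCCL_ROCE_ENV.items():
        os.environ.setdefault(key, value)  # keep values the launcher already set
    dist.init_process_group(backend="nccl")  # env:// rendezvous from torchrun


if __name__ == "__main__" and "RANK" in os.environ:
    init_distributed()
```

Exporting the same variables in the launch script instead of setting them in Python has the same effect and keeps them visible to every process.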

Container Orchestration Considerations

Kubernetes-based AI platforms require additional configuration to expose RDMA devices to containerized training jobs. The RDMA CNI (Container Network Interface) plugin, typically deployed alongside an RDMA device plugin, makes RDMA devices available inside containers while maintaining network isolation between jobs. Organizations should deploy these plugins and configure resource limits so that each training job receives dedicated RDMA resources without oversubscription.
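To illustrate the resource-limit approach, the sketch below builds a training Pod manifest as a Python dict and prints it as JSON, a format `kubectl apply -f` accepts. It assumes a Multus secondary network attachment named `rdma-net` and an RDMA device-plugin resource called `rdma/hca_shared_devices_a`. Both names depend on how your cluster's RDMA plugins are configured and are placeholders here, as are the Pod and image names.

```python
import json


def training_pod(name: str, image: str, gpus: int = 8, rdma_devices: int = 1,
                 network: str = "rdma-net",
                 rdma_resource: str = "rdma/hca_shared_devices_a") -> dict:
    """Build a Pod manifest requesting GPUs plus dedicated RDMA resources (names are assumptions)."""
    limits = {"nvidia.com/gpu": gpus, rdma_resource: rdma_devices}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": name,
            # Multus attaches the secondary RDMA network named by this annotation.
            "annotations": {"k8s.v1.cni.cncf.io/networks": network},
        },
        "spec": {
            "restartPolicy": "Never",
            "containers": [{
                "name": "trainer",
                "image": image,
                # Extended resources must have requests equal to limits.
                "resources": {"limits": limits, "requests": dict(limits)},
                # RDMA memory registration pins pages, which requires IPC_LOCK.
                "securityContext": {"capabilities": {"add": ["IPC_LOCK"]}},
            }],
        },
    }


if __name__ == "__main__":
    pod = training_pod("llm-train-0", "registry.example.com/trainer:latest")
    print(json.dumps(pod, indent=2))
```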

Network operators must coordinate with Kubernetes cluster administrators to keep network configuration consistent across compute nodes. The H3C switches' support for standards-based EVPN control planes allows integration with container networking solutions. When combined with an orchestration controller such as H3C's SeerEngine-DC, VXLAN overlays can be provisioned automatically as new pods are scheduled, without manual switch configuration.

Future-Proofing Considerations

Technology Evolution Roadmap

The networking industry continues advancing at a rapid pace, with 1.6 Tbps Ethernet standardization underway and early 3.2 Tbps development efforts beginning. Organizations investing in data center network infrastructure today should consider migration paths to future technologies. The H3C S9827’s 800G capabilities provide a forward-looking platform with expected relevance through 2028-2030, while the S9855’s 400G capabilities align with current mainstream deployment timelines through 2026-2027.

Backward Compatibility: Both switch series support extensive port breakout capabilities, allowing organizations to split high-speed ports into multiple lower-speed connections. This flexibility enables gradual server upgrades where new GPU systems with 800G NICs coexist with older systems using 100G or 200G connectivity. The switches’ ability to intermix different optical transceiver types prevents costly forklift upgrades, allowing organizations to maximize return on infrastructure investments.
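The arithmetic behind port breakout is simple lane division. The sketch below assumes an 800G port built from eight 100G electrical lanes, which is typical of current 800G designs, and lists the even splits. It also counts how many physical ports a group of servers would consume. Which breakout modes a given S9827 or S9855 port actually supports must be checked against H3C's documentation.

```python
from __future__ import annotations


def breakout_options(port_gbps: int = 800, lanes: int = 8) -> list[tuple[int, int]]:
    """Return (sub_ports, speed_gbps) pairs for even lane splits of one port."""
    lane_gbps = port_gbps // lanes
    options = []
    for sub_ports in range(1, lanes + 1):
        if lanes % sub_ports == 0:
            options.append((sub_ports, lane_gbps * (lanes // sub_ports)))
    return options


def ports_needed(servers: int, nic_gbps: int, port_gbps: int = 800, lanes: int = 8) -> int:
    """Physical switch ports needed to attach `servers` NICs of `nic_gbps` via breakout."""
    per_port = {speed: n for n, speed in breakout_options(port_gbps, lanes)}
    if nic_gbps not in per_port:
        raise ValueError(f"{nic_gbps}G is not an even breakout of a {port_gbps}G port")
    return -(-servers // per_port[nic_gbps])  # ceiling division


if __name__ == "__main__":
    print(breakout_options())      # [(1, 800), (2, 400), (4, 200), (8, 100)]
    print(ports_needed(10, 200))   # 3 ports, each split 4x200G
```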

Software Feature Updates: H3C provides regular software updates adding new features, security patches, and performance optimizations to deployed switches. Organizations should establish maintenance windows for non-disruptive software upgrades, taking advantage of M-LAG redundancy to minimize downtime. Subscription to H3C’s support services ensures access to critical updates and technical assistance when deployment challenges arise.
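A common way to exploit M-LAG redundancy during upgrades is to never touch both members of a pair in the same maintenance wave. The sketch below groups switches into two waves under that rule. The switch names and pair list are hypothetical, and the actual upgrade and health-check procedure should follow H3C's release notes.

```python
from __future__ import annotations


def upgrade_waves(pairs: list[tuple[str, str]],
                  standalone: list[str] | None = None) -> list[list[str]]:
    """Split M-LAG pairs into two waves so each pair keeps one member forwarding.

    Wave 1 upgrades the first member of every pair, plus any standalone switches
    (which have no peer and therefore carry some downtime risk). Wave 2 upgrades
    the second members, and should start only after wave 1 is verified healthy.
    """
    seen: set[str] = set()
    for a, b in pairs:
        if a == b or a in seen or b in seen:
            raise ValueError(f"invalid or overlapping M-LAG pair: {(a, b)}")
        seen.update((a, b))
    wave1 = [a for a, _ in pairs] + list(standalone or [])
    wave2 = [b for _, b in pairs]
    return [wave1, wave2]


if __name__ == "__main__":
    print(upgrade_waves([("leaf1a", "leaf1b"), ("leaf2a", "leaf2b")], ["oob-sw1"]))
```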

Sustainability and Energy Efficiency

Modern data center operators face increasing pressure to reduce power consumption and carbon footprint. The H3C switch series incorporates multiple energy-efficient technologies:

LPO Optical Modules: Linear Pluggable Optics eliminate the power-hungry DSP (Digital Signal Processor) components in traditional optical transceivers, reducing per-port power consumption by roughly 40-50% according to vendor estimates. The S9827's full support for LPO modules enables significant power savings in large deployments with hundreds of optical connections.

Intelligent Cooling: The switches’ support for variable-speed fan control adjusts cooling intensity based on actual temperature measurements rather than running fans at maximum speed constantly. This dynamic cooling approach reduces overall power consumption while maintaining safe operating temperatures.

Energy-Efficient Ethernet: Support for the IEEE 802.3az Energy Efficient Ethernet standard enables automatic link power reduction during idle periods, which is particularly relevant for management and control plane connections with variable traffic loads.

Organizations should quantify power and cooling costs when evaluating switch alternatives, as operational expenses over a typical 5-year lifecycle often exceed initial capital investment. The H3C switches’ efficiency features deliver measurable cost savings while supporting corporate sustainability initiatives.
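Once per-module figures are known, quantifying power cost is straightforward arithmetic. The sketch below compares DSP-based and LPO optics over a five-year life. Every input (module wattage, port count, electricity price, PUE) is a placeholder assumption to be replaced with datasheet values and local tariffs. None of them is an H3C or vendor figure.

```python
HOURS_PER_YEAR = 24 * 365


def optics_energy_cost(ports: int, watts_per_module: float, price_per_kwh: float,
                       pue: float = 1.4, years: int = 5) -> float:
    """Facility-level electricity cost of running `ports` optical modules continuously.

    PUE (power usage effectiveness) scales IT power up to include cooling and
    power-distribution overhead.
    """
    kwh = ports * watts_per_module * HOURS_PER_YEAR * years / 1000
    return kwh * pue * price_per_kwh


if __name__ == "__main__":
    # Hypothetical inputs: 512 ports, 16 W DSP modules vs 8 W LPO modules, $0.10/kWh.
    dsp = optics_energy_cost(512, 16.0, 0.10)
    lpo = optics_energy_cost(512, 8.0, 0.10)
    print(f"DSP: ${dsp:,.0f}  LPO: ${lpo:,.0f}  savings: ${dsp - lpo:,.0f}")
```

Because cost scales linearly with wattage, the percentage saving on optics power carries straight through to the energy bill. The absolute saving grows with port count, tariff, and PUE.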

Frequently Asked Questions

What is the main difference between H3C S9827 and S9855 switches?

The primary distinction lies in maximum port speed and aggregate switching capacity. The S9827 supports up to 800G ports with 102.4 Tbps total switching capacity, targeting hyperscale AI deployments with thousands of GPUs. The S9855 provides 400G maximum port speed with 16 Tbps capacity, suitable for mid-scale enterprise AI environments with dozens to hundreds of GPUs. Both series include comprehensive RoCEv2 support, but the S9827 represents the highest-performance tier in H3C’s data center portfolio.

Do these switches require special configuration for AI workloads?

Yes, optimal AI performance requires specific configuration, including RoCEv2 enablement through PFC, ECN, and ETS settings. Network administrators should configure lossless traffic classes for AI training traffic, appropriate buffer allocations, and QoS policies that separate training traffic from management and storage flows. H3C provides reference configurations for common AI deployment scenarios, and its technical support can assist with environment-specific tuning. Proper configuration can improve distributed training performance by 30-50% compared to default settings, though results vary with the workload and fabric.
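ECN marking on RoCEv2 fabrics is usually configured as a WRED-style curve, as used by DCQCN-like congestion control. Below a minimum queue threshold nothing is marked, and above a maximum threshold every packet is marked. In between, the marking probability rises linearly to a configured maximum. The sketch below models that curve so thresholds can be reasoned about before configuration. The threshold values are illustrative assumptions, not H3C defaults or recommendations, and switch implementations may differ in detail.

```python
def ecn_mark_probability(queue_bytes: int, kmin: int, kmax: int, pmax: float) -> float:
    """WRED-style ECN marking probability for a given queue depth."""
    if not (0 <= kmin < kmax) or not (0.0 < pmax <= 1.0):
        raise ValueError("require 0 <= kmin < kmax and 0 < pmax <= 1")
    if queue_bytes < kmin:
        return 0.0                      # short queue: never mark
    if queue_bytes > kmax:
        return 1.0                      # long queue: mark every packet
    return pmax * (queue_bytes - kmin) / (kmax - kmin)  # linear ramp up to pmax


if __name__ == "__main__":
    KMIN, KMAX, PMAX = 100_000, 400_000, 0.2  # hypothetical thresholds in bytes
    for depth in (50_000, 100_000, 250_000, 400_000, 500_000):
        print(depth, round(ecn_mark_probability(depth, KMIN, KMAX, PMAX), 3))
```

A common design goal is to place the marking range well below the PFC pause threshold. ECN then slows senders before PFC has to pause whole priorities.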

Can I mix S9827 and S9855 switches in the same network?

Absolutely. Common deployment patterns use S9827 switches as spine switches providing 800G connectivity between network tiers, while S9855 switches serve as leaf switches connecting to GPU servers. This hierarchical approach optimizes cost by deploying expensive 800G infrastructure only where necessary for inter-switch connectivity while using more cost-effective 400G switches for server connections. The switches support standard protocols ensuring seamless interoperability within mixed deployments.

What optical transceivers are recommended for AI data centers?

For in-rack connections of a few meters, Direct Attach Copper (DAC) cables provide the most cost-effective solution; note that passive DAC reach shrinks as speeds rise, to roughly 2-3 meters at 400G and 800G. For connections up to 100 meters, multimode fiber with SR4 or SR8 optical transceivers offers the best price-performance. Data center interconnects that span a campus and require distances beyond 100 meters benefit from single-mode fiber with FR4 or LR8 transceivers. Organizations should prioritize LPO (Linear Pluggable Optics) modules where supported, which can reduce optics power consumption by roughly 40-50% compared to traditional DSP-based transceivers according to vendor estimates.
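The reach guidance above can be encoded as a simple lookup. The sketch below uses typical nominal reaches: passive DAC up to about 3 m, multimode SR optics up to about 100 m on OM4, single-mode FR4 up to 2 km, and LR8 up to 10 km. These cut-offs are approximate assumptions that vary by speed, fiber grade, and vendor, so confirm them against the transceiver datasheet.

```python
# (max_reach_m, media) in ascending order of reach; nominal values, assumptions only.
MEDIA_BY_REACH = [
    (3, "passive DAC"),
    (100, "multimode SR4/SR8 optics (OM4)"),
    (2_000, "single-mode FR4 optics"),
    (10_000, "single-mode LR8 optics"),
]


def select_media(distance_m: float) -> str:
    """Pick the cheapest media class whose nominal reach covers the distance."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    for reach_m, media in MEDIA_BY_REACH:
        if distance_m <= reach_m:
            return media
    raise ValueError(f"no entry in the reach table covers {distance_m} m")


if __name__ == "__main__":
    for d in (2, 30, 800, 5_000):
        print(d, "m ->", select_media(d))
```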

How does RoCEv2 compare to InfiniBand for AI networking?

RoCEv2 delivers latency and throughput comparable to InfiniBand while leveraging standard Ethernet infrastructure, existing operational expertise, and a broader vendor ecosystem. InfiniBand traditionally offered superior performance, but modern RoCEv2 implementations on switches like the H3C S9827 achieve single-digit microsecond latencies sufficient for distributed AI training. Organizations also benefit from Ethernet's native routing capabilities in large-scale deployments, whereas InfiniBand networks typically require specialized gateway devices for inter-subnet communication. For most AI deployments, properly configured RoCEv2 on H3C switches provides equivalent practical performance at a lower total cost of ownership.

What network topology is recommended for GPU clusters?

Modern AI deployments predominantly use leaf-spine or fat-tree topologies, with S9827 or S9855 switches in the spine layer and leaf switches connecting directly to GPU servers. This architecture gives every pair of GPUs on different leaves the same path length (leaf, spine, leaf). The resulting consistent latency is critical for collective communication operations in distributed training. Training fabrics are often designed to be non-blocking (1:1). Where cost forces oversubscription, 2:1 is a common upper limit, meaning leaf-to-spine bandwidth should be at least half of the total server-facing bandwidth. For clusters exceeding 1,000 GPUs, multi-tier fat-tree architectures add bisection bandwidth so the fabric can stay non-blocking at larger scale.
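Both the oversubscription ratio and the scale limit of a two-tier leaf-spine fabric follow from simple arithmetic. The sketch below computes a leaf's oversubscription ratio. It also computes the maximum number of non-blocking endpoints in a two-tier fabric built from identical switches of radix k: half of each leaf's ports face servers and half face spines, which gives k²/2 endpoints. Port counts are hypothetical and ignore breakout; real designs should use the port configurations in H3C's datasheets.

```python
from fractions import Fraction


def oversubscription(downlink_ports: int, downlink_gbps: int,
                     uplink_ports: int, uplink_gbps: int) -> Fraction:
    """Server-facing bandwidth divided by spine-facing bandwidth (1 means non-blocking)."""
    return Fraction(downlink_ports * downlink_gbps, uplink_ports * uplink_gbps)


def two_tier_max_endpoints(radix: int) -> int:
    """Non-blocking endpoints in a two-tier leaf-spine of identical radix-k switches.

    Each leaf uses k/2 ports down and k/2 up; each spine port connects one leaf,
    so there are at most k leaves, each serving k/2 endpoints: k * k / 2 in total.
    """
    if radix % 2:
        raise ValueError("radix must be even")
    return radix * radix // 2


if __name__ == "__main__":
    print(oversubscription(32, 400, 16, 800))  # 1 (non-blocking)
    print(oversubscription(48, 400, 8, 800))   # 3 (3:1, too high for training)
    print(two_tier_max_endpoints(64))          # 2048
```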

How often should I upgrade data center network infrastructure?

Network infrastructure typically follows 3-5 year upgrade cycles aligned with broader data center refresh schedules. However, AI workload growth may require more frequent upgrades as GPU densities and networking requirements increase. Organizations should monitor network utilization metrics and identify bottlenecks that indicate capacity exhaustion. The H3C switches' support for port breakout and interchangeable optical modules enables incremental upgrades. As servers move to faster NICs, ports can be re-split or fitted with faster optics, up to their native speed, without replacing entire switches. Strategic planning should account for 3-year requirements when specifying new infrastructure to ensure adequate headroom for growth.
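Capacity monitoring usually starts from interface byte counters sampled at fixed intervals. The sketch below converts two readings of a 64-bit octet counter into percent utilization and handles counter wrap. An example of such a counter is IF-MIB's `ifHCInOctets`, polled over SNMP. It then flags links whose median utilization exceeds a threshold. How the counters are collected and the 70% threshold are assumptions.

```python
import statistics

COUNTER_MAX = 2**64  # ifHCInOctets / ifHCOutOctets are 64-bit counters


def utilization_pct(octets_t0: int, octets_t1: int, interval_s: float, link_gbps: float) -> float:
    """Average link utilization (percent) between two octet-counter samples."""
    if interval_s <= 0:
        raise ValueError("interval must be positive")
    delta = (octets_t1 - octets_t0) % COUNTER_MAX  # correct across a single counter wrap
    bits_per_s = delta * 8 / interval_s
    return 100.0 * bits_per_s / (link_gbps * 1e9)


def needs_attention(samples_pct: list, threshold_pct: float = 70.0) -> bool:
    """Flag a link whose median utilization exceeds the (hypothetical) threshold."""
    return statistics.median(samples_pct) > threshold_pct


if __name__ == "__main__":
    # 750 GB transferred in 30 s on a 400G link is 50% utilization.
    print(utilization_pct(0, 750_000_000_000, 30, 400))
```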

What management tools are available for H3C AI network infrastructure?

H3C provides the SeerEngine-DC Controller for centralized management of data center switches, enabling automated provisioning, configuration management, and policy enforcement. The controller integrates with standard orchestration platforms including Kubernetes and OpenStack, allowing AI job schedulers to dynamically configure network resources that match computational allocations. For organizations requiring multi-vendor management, the switches support industry-standard protocols including SNMP, NETCONF, and gRPC, enabling integration with third-party and open-source network management and telemetry systems.

Conclusion

Selecting appropriate Ethernet switching infrastructure represents a critical decision for organizations building AI data centers. The H3C S9827 and S9855 series provide enterprise-grade solutions addressing different segments of the AI networking market, from hyperscale cloud providers operating thousands of GPUs to mid-market enterprises deploying departmental machine learning clusters.

The S9827’s groundbreaking 800G capabilities and 102.4 Tbps switching capacity position it as the premier choice for organizations operating at the forefront of AI technology, training foundation models and large language models requiring massive computational and networking resources. Meanwhile, the S9855 series delivers robust 400G performance ideal for mainstream AI deployments where cost optimization remains important alongside performance requirements.

Both series incorporate comprehensive RoCEv2 support enabling RDMA communication patterns critical for distributed training efficiency. When properly configured, these switches deliver lossless networking performance approaching InfiniBand while leveraging familiar Ethernet infrastructure and operational procedures. Organizations benefit from H3C’s extensive data center networking expertise, comprehensive product portfolio spanning access to core layers, and commitment to standards-based interoperability.

As AI workloads continue growing in scale and complexity, network infrastructure must evolve to prevent bottlenecks that limit overall system performance. Investing in capable switching infrastructure like the H3C S9827 and S9855 series ensures organizations can scale their AI capabilities without infrastructure constraints limiting research velocity or business outcomes.

For organizations seeking guidance on selecting appropriate network infrastructure for their specific AI requirements, H3C provides technical consultation services and reference architectures documenting proven deployment patterns across diverse use cases and scales.

“When architecting for distributed model training, bandwidth is often the bottleneck. In scenarios involving thousands of GPUs, the S9827’s non-blocking 800G capacity is essential to maintain linearity in training speeds, whereas 400G solutions like the S9855 are generally sufficient for clusters under 500 nodes.” — Senior Network Architect

“The implementation of RoCEv2 in modern Ethernet switches has closed the performance gap with InfiniBand. By utilizing the S9855’s PFC and ECN features, we can achieve the lossless fabric required for stable AI workloads without the operational overhead of managing a separate specialized network protocol.” — Lead Infrastructure Engineer

“Cost efficiency in AI data centers isn’t just about hardware price; it’s about power per bit transferred. While the S9827 has a higher absolute power draw, its support for Linear Pluggable Optics (LPO) can reduce per-port power consumption by nearly 40% compared to traditional DSP-based optics, significantly lowering long-term OpEx.” — Data Center Operations Director

Last updated: December 2025